If ai innovation means anything in production, it means making retrieval-augmented generation withstand traffic spikes without cratering latency or cost. This blueprint leans into the messy reality: sharded vector stores, cache warming strategies that don’t implode, and query paths that can honor a sub-200ms p95 even when the world is not tidy.

Executive pressure forces alignment before the architecture cooperates

Teams don’t chase sub-200ms p95 because it sounds sleek; they chase it because users bounce and downstream automation misses deadlines when retrieval drifts into the third second. You can’t pad latency with bigger instances when cost-reporting and SLOs land on your desk every Monday.

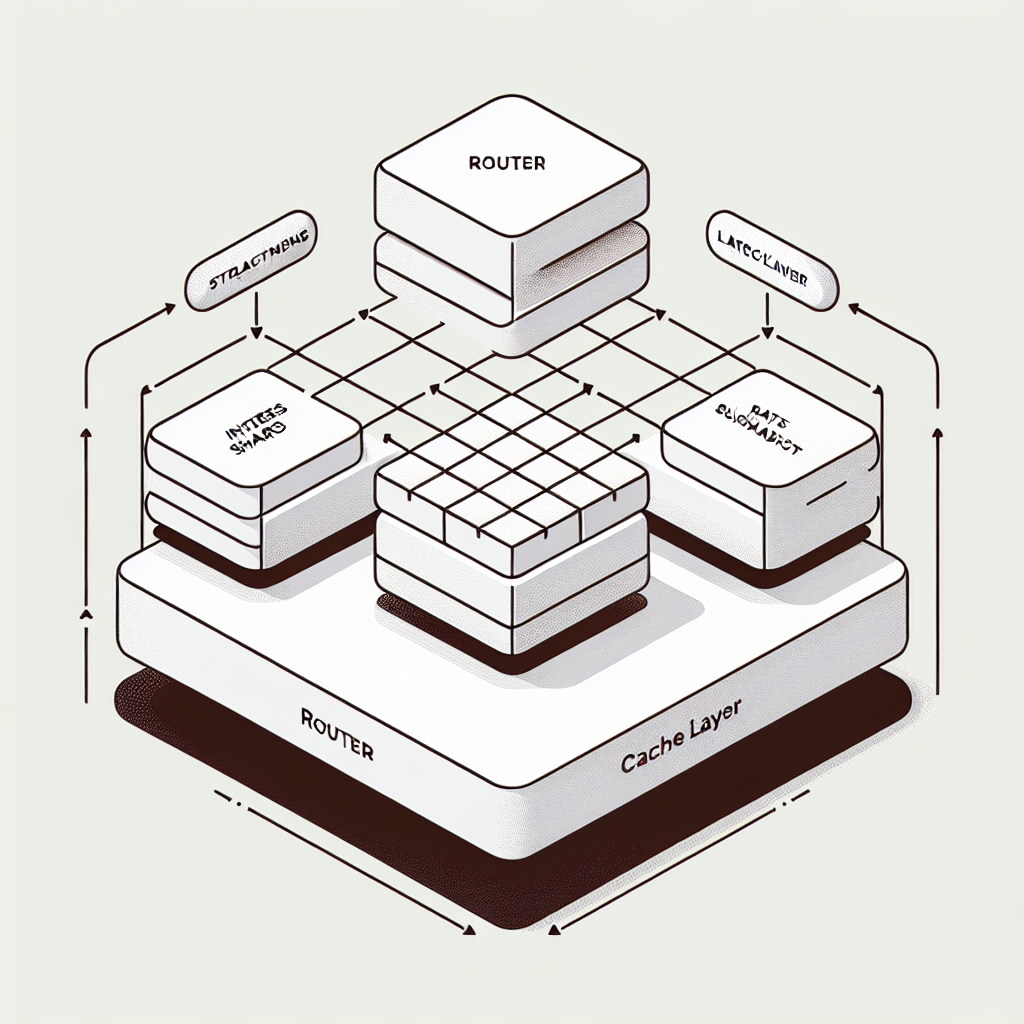

The arguments begin around sharding, but they end at the router: how you segment the vector store, how you pre-warm caches, and how you steer queries under load makes or breaks the system. This isn’t a diagram-level decision; it is a series of trade-offs that keep resurfacing whenever data or traffic changes shape.

Cache warming is not optional. Cold caches drag p95 into the red even when mean latency looks fine. The trick is warming without stampeding your own storage or indexers. Real systems punish naive warming schedules.

Indexes do not stay balanced. Hot keys, new domains, and uneven content sizes skew shards. You will re-shard, you will rebuild, and you will need to do it while serving traffic. The blueprint is less about the initial design and more about how you handle drift under pressure.

Introduction: revenue risk appears when RAG misses deadlines

The first time a high-traffic RAG path slows down, it’s usually not the model’s fault. A promotional spike or a new content batch lands, the vector store gets uneven, caches go cold, and the router starts sending queries into shards that are thrashing their memory and disk. Tail latencies go from tolerable to reputation-damaging in an afternoon. Operations moves fast, but every attempted fix has a blast radius.

AI Innovation Blueprint for High-Traffic RAG Systems: Sharded Vector Stores, Cache Warming, and Sub-200ms P95 Latency surfaced as a requirement when the retrieval side became the bottleneck. The model was stable, the prompts were controlled, but the data path wasn’t prepared for traffic elasticity. This is the kind of ai innovation that isn’t glamorous: put the index layout, cache behaviors, and routing logic under hard constraints, then keep them there while the system moves.

Latency pressure: what sub-200ms p95 actually looks like when shards drift and caches cool

In production, the path is simple on paper and adversarial in practice. A query arrives with a budget. The router picks shards based on a key, a learned distribution, or a hybrid scoring. Shards return nearest-neighbor candidates. A secondary retrieval step (filters, rerank, or both) settles the final context. The model consumes the context, ideally already sitting warm in memory. Every link is a latency risk, especially when shards hold uneven content or warming didn’t run recently.

Constraints appear fast. The index needs space for growth and rebuilds, not just for current vectors. The shard map must handle skew introduced by new domains or content classes. Cache layers (embedding cache, chunk cache, and results cache) must stay below memory ceilings while actually capturing the working set. You’ll add circuit breakers to stop pathological queries, and you’ll enforce query timeouts that protect p95 at the cost of recall. That’s a real trade: losing some relevant documents to save the tail.

Boundaries are unkind. Re-embedding entire corpora is rarely feasible on demand; you run rolling updates. Re-sharding during traffic windows risks cross-shard duplication or temporary holes. Background warmers can collide with live reads and turn your object store into a bottleneck. The habit of “just scale it” gets expensive and fails to tame tail latency because contention does not scale linearly.

Tail latency fights you in the router before it ever reaches the model

Routing is where latency either gets contained or multiplied. If the router fans out to too many shards to increase recall, the aggregation step becomes the tail. If it fans out too little, you under-fetch context and the model struggles. You tune fan-out based on real load patterns, not ideal math. The trick is to adaptively reduce the set under pressure and accept a smaller context when the system is hot.

Cache warming without self-DDoS

Warming strategies that pull aggressively from cold storage can tank overall latency. Smarter warming looks at the top queries by recent distribution, prefetches only index-specific metadata and small chunk headers, and opportunistically warms full chunks after quiet periods. You’ll stagger warming across shards and gate it behind simple utilization checks. It’s less precise than you want, but more survivable.

Shard balance erodes quietly and then all at once

Shards drift with content growth and popularity shifts. The moment one shard’s working set no longer fits into its intended memory band, p95 balloons. Detecting drift needs lightweight signals: hit rates by shard, cache evictions, and queue depth. When drift is confirmed, you pick your poison: migrate entries during off-peak with dual-write, or introduce a temporary overflow shard and accept fragmentation until a planned rebuild.

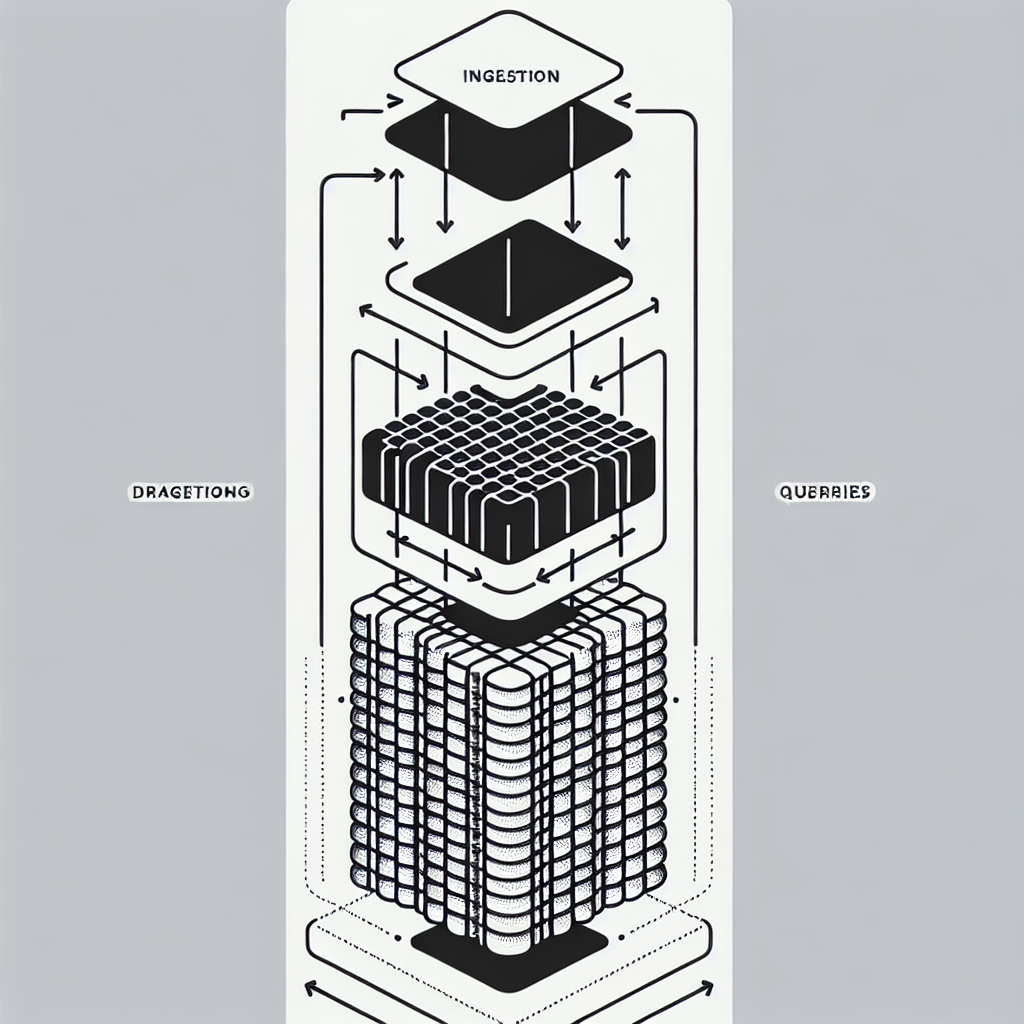

Sequencing under traffic: ingestion, partitioning, warming, routing, and rebuild cycles

This unfolds in loops, not one-offs. New content arrives through ingestion, embeddings are produced, and candidates get placed into shard partitions. Warmers pre-load metadata and the hottest chunks. The router uses the shard map to steer read paths, observes latency, and signals backpressure. Maintenance jobs rebuild or re-partition shards when drift passes thresholds. None of this happens in isolation; every step contends with others.

Handoffs bite. Ingestion can outrun embedding, leading to stale search against old vectors. Embedding catches up and suddenly the shard’s shape changes; caches now face cache-invalidation decisions that will either slow traffic or return older chunks momentarily. The router keeps working during all of this, ideally aware of index freshness so it can prefer shards with consistent embeddings for tight latency windows.

Dependencies carry friction. Shard metadata needs to be updated atomically enough to prevent double-fan-out. Warmers require admission control so they don’t devour bandwidth during incident mitigation. Observability spans query timing, cache hit rates, shard-specific queue depth, and error budgets; without these tied to routing decisions, you fix symptoms instead of causes.

Where teams slow down and redo choices

Three recurring stalls: choosing a shard key that looked clean in development but fails under real distribution, accepting an over-eager cache policy that backfires on costs, and picking an index configuration that meets lab benchmarks but trips on data skew. The redo happens under pressure, often during a partial outage. You strip polish, raise the simplest guardrails, and replace ideal logic with measured pragmatism: limit fan-out, hard-cap per-shard concurrency, and throttle warmers.

Routing as a living system, not a static layer

The router must observe the store like a scheduler observes nodes. It reacts to shard health, toggles recall versus latency, and prefers stable shards in peak hours. That’s a compass change: write routing as policy plus signals, not fixed code paths. You’ll still hit edge cases, but you’ll catch fewer tails.

Tooling choices dictated by latency budgets and blast radius

Tool selection is less about brand and more about constraints. The index engine needs approximate nearest neighbor with a configuration tuned to your recall-latency budget; graph-based layouts favor fast recall at memory cost, compressed layouts favor density but increase tail. The storage layer should tolerate high read concurrency without hammering throughput. Caches need simple eviction policies that don’t surprise you under contention; predictability beats theoretical optimality during incidents.

Embedding generation belongs on a pipeline that isolates spikes from online paths. Batching helps, but you avoid batch sizes that starve real-time updates. Warmers should be rate-limited and shard-aware. Observability tools have to surface p95 and cache hit ratios per shard, and couple them to routing decisions; dashboards that don’t inform action are decoration.

Examples under pressure: decisions with unintended consequences

A product discovery flow adopts broader fan-out to improve recall. Engagement rises, but the aggregation step grows. Under a surge, aggregation time becomes the tail, and p95 breaks the agreement. Rolling back fan-out regains latency but drops some relevance. The team keeps fan-out dynamic: lower during peak, higher during quiet. Users tolerate slightly worse results during peak hours more than they tolerate slow responses.

A support knowledge base rebuilds embeddings weekly. Freshness improves, but rebuild overlaps with traffic more than expected. Cache warming collides with user queries; object storage starts rate-limiting. Warming is rewritten to prefetch only metadata and defer full chunk warming until off-peak. Freshness regresses a bit, but latency stabilizes. The blueprint values stability above perfect freshness when SLAs are tight.

A code assistant introduces hard filters at retrieval to eliminate noisy files. Mean latency falls, but tail rises when filters cause multiple shards to be scanned sequentially after initial misses. The fix is counterintuitive: relax filters on the first pass, then rerank aggressively. Latency improves because the system avoids sequential shard misses.

Trade-off table: how choices land for newcomers versus seasoned operators

Pressure PointNewcomer ReactionExperienced ReactionImpact on p95Shard skew after content spikeScale instances, hope balance returnsLimit fan-out, throttle hot shard, schedule re-partitionTail contained, recall slightly reducedCold caches post-deployWarm everything immediatelyWarm metadata first, stagger chunks, rate-limit warmersFaster stabilization, lower burst costRouter facing intermittent shard timeoutsRetry aggressivelyFail fast, route to stable shards, degrade recallp95 protected, partial relevance lossIndex rebuild overlapping trafficPause rebuild, extend outage windowDual-write, background swap, notify router of freshnessMinimal disruption, longer consistency lagCost spikes from expanded fan-outTighten quotas globallyAdaptive fan-out by load, cache hot queriesCosts reduced without harsh user impact

FAQ: objections that surface before and during scale

How do we pick a shard key that doesn’t collapse under skew? Start with a key aligned to query distribution rather than document origin. Expect to add a learned or hybrid component that spreads hot categories. The first key will be wrong; build migration plans early.

Can we avoid cache stampedes when warming after deploys? Yes, by warming in layers: metadata first, then small chunks, then large chunks. Gate warming by shard health and read load. If the system is hot, defer full warms.

Do we precompute embeddings or generate on demand? Precompute where batch stability exists. On-demand belongs only in narrow surfaces with tight budgets and predictable spikes. Mixing both increases system complexity; isolate paths to control blast radius.

What if sub-200ms p95 remains elusive? Drop recall under pressure, reduce fan-out, and shift reranking to a cheaper heuristic. If latency still floats, revisit shard balance and cache hit rates; routing rarely fixes a starved shard.

How do we update content without full index rebuilds? Use rolling windows: append new vectors to overflow partitions, then compact during off-peak. Keep router aware of overflow so it can bias selection without doubling work.

Ownership shifts to routing and cache policy under growing traffic

Given how things behave today, this is what quietly changes next: retrieval success stops being a pure indexing problem and becomes an operational routing problem. Owners move from index correctness to latency-aware policy, balancing recall with stability every hour, not every quarter.

Ops-driven tuning -> Query-aware caching -> Cost-aware routing -> Hybrid indexes -> Automated SLA governance