Scaling AI from a few pilots to enterprise-wide use changes the work. Costs move from a line item to a material budget. Reliability becomes a contract, not a demo. Risk shifts from hypothetical to audit-ready. This article lays out a practical path to AI scalability that connects architecture, economics, and governance so you can scale with confidence, not surprise.

Introduction

Across industries, teams have proven value in constrained AI pilots. The pressure now is to serve thousands of users, hundreds of workflows, and variable demand without breaking budgets or service levels. Vendors ship new models monthly, regulators raise the bar, and internal data is both your edge and your liability. AI scalability matters because every scale decision (API vs self-hosting, retrieval vs fine-tuning, synchronous vs batch) translates directly into throughput, latency, unit cost, risk exposure, and ultimately ROI.

What changes at scale is the operating model. You need explicit unit economics, capacity plans, shared platform components, and continuous evaluation. You also need guardrails that are enforced, observable, and auditable. The strategy below is designed for real systems under real constraints, not lab conditions.

Understanding the Topic

AI scalability is the ability to grow usage, workloads, and capabilities while keeping quality, latency, cost, and compliance predictable and within agreed service levels. In an enterprise context, it spans model access, data movement, orchestration, observability, and governance, all aligned to clear unit economics and risk controls.

Think in dimensions: volume (requests per second), breadth (number of use cases and tenants), depth (task complexity and tool use), and resilience (failover, vendor diversity, and change management). Horizontal scalability is serving more traffic and tenants. Vertical scalability is adding capabilities like retrieval, tool-calling, or fine-tuning without brittle rewrites.

The simplest framing that does not oversimplify is to define the service contract first: a target p95 latency, task-specific quality metrics, and a cost per successful task. Then map these targets to system levers: model selection, prompt and retrieval strategy, caching and batching, routing, and evaluation. Your AI scalability plan should make these levers explicit and measurable.
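To make the contract checkable, it helps to express it as data. The sketch below is a minimal illustration under stated assumptions: `ServiceContract` and `check_contract` are hypothetical names, p95 uses the nearest-rank method, and quality is the mean of per-task scores. Substitute your own metrics and thresholds.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceContract:
    """Hypothetical per-task contract; all thresholds are illustrative."""
    p95_latency_ms: float
    min_quality: float           # task-specific quality score in [0, 1]
    max_cost_per_success: float  # currency units per successful task


def p95(values: list[float]) -> float:
    """Nearest-rank 95th percentile, using integer math to avoid float rounding."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n)
    return ordered[rank - 1]


def check_contract(contract: ServiceContract, latencies_ms: list[float],
                   quality_scores: list[float], total_cost: float,
                   successes: int) -> dict[str, bool]:
    """Return pass/fail for each term of the contract over one observation window."""
    cost_per_success = total_cost / successes if successes else math.inf
    mean_quality = sum(quality_scores) / len(quality_scores)
    return {
        "latency": p95(latencies_ms) <= contract.p95_latency_ms,
        "quality": mean_quality >= contract.min_quality,
        "cost": cost_per_success <= contract.max_cost_per_success,
    }


contract = ServiceContract(p95_latency_ms=2000, min_quality=0.85, max_cost_per_success=0.05)
print(check_contract(contract, [100.0] * 19 + [5000.0], [0.9, 0.9],
                     total_cost=4.0, successes=100))
# → {'latency': True, 'quality': True, 'cost': True}
```

With 20 requests, nearest-rank p95 is the 19th value in ascending order, so the single 5,000 ms outlier does not breach the 2,000 ms target; a second slow request would.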

Enterprise context

Enterprises operate with heterogeneous data, legacy systems, varied compliance regimes, and multi-vendor dependencies. Scalability is not only about throughput; it is about change tolerance, auditability, and budget clarity. Expect vendor shifts, model deprecations, regulatory updates, and workload seasonality. Build for portability, observability, and graceful degradation.

Key metrics and unit economics

Track cost per 1,000 tokens and per successful task, p95/p99 latency, success rate by task, hallucination rate, policy violations, and escalation rate. Tie these to business KPIs like deflection, cycle time reduction, or revenue lift. Use a standard unit economics model that converts demand scenarios into budget forecasts and capacity requirements.
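A unit economics model can start as a small function. This sketch assumes per-1,000-token input and output prices and a 30-day month; the `Scenario` fields, the prices, and the traffic figures are hypothetical placeholders, not real vendor rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """One demand scenario for a single use case (all figures hypothetical)."""
    name: str
    tasks_per_day: int
    input_tokens_per_task: int
    output_tokens_per_task: int
    success_rate: float      # fraction of tasks judged successful
    peak_to_average: float   # ratio of peak-hour to average request rate


def forecast(s: Scenario, price_in_per_1k: float, price_out_per_1k: float,
             days: int = 30) -> dict[str, float]:
    """Convert a demand scenario into monthly spend, unit cost, and peak load."""
    tasks = s.tasks_per_day * days
    cost_per_task = (s.input_tokens_per_task / 1000 * price_in_per_1k
                     + s.output_tokens_per_task / 1000 * price_out_per_1k)
    monthly_cost = tasks * cost_per_task
    return {
        "monthly_cost": monthly_cost,
        "cost_per_success": monthly_cost / (tasks * s.success_rate),
        "peak_rps": s.tasks_per_day / 86_400 * s.peak_to_average,
    }


for scenario in (Scenario("base", 10_000, 2_000, 500, 0.8, 3.0),
                 Scenario("peak-season", 40_000, 2_000, 500, 0.8, 5.0)):
    print(scenario.name, forecast(scenario, price_in_per_1k=0.001, price_out_per_1k=0.002))
```

Dividing by successful tasks rather than all tasks means a drop in success rate raises unit cost even when token spend is flat, which is the signal the article asks you to budget against.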

How This Works in Practice

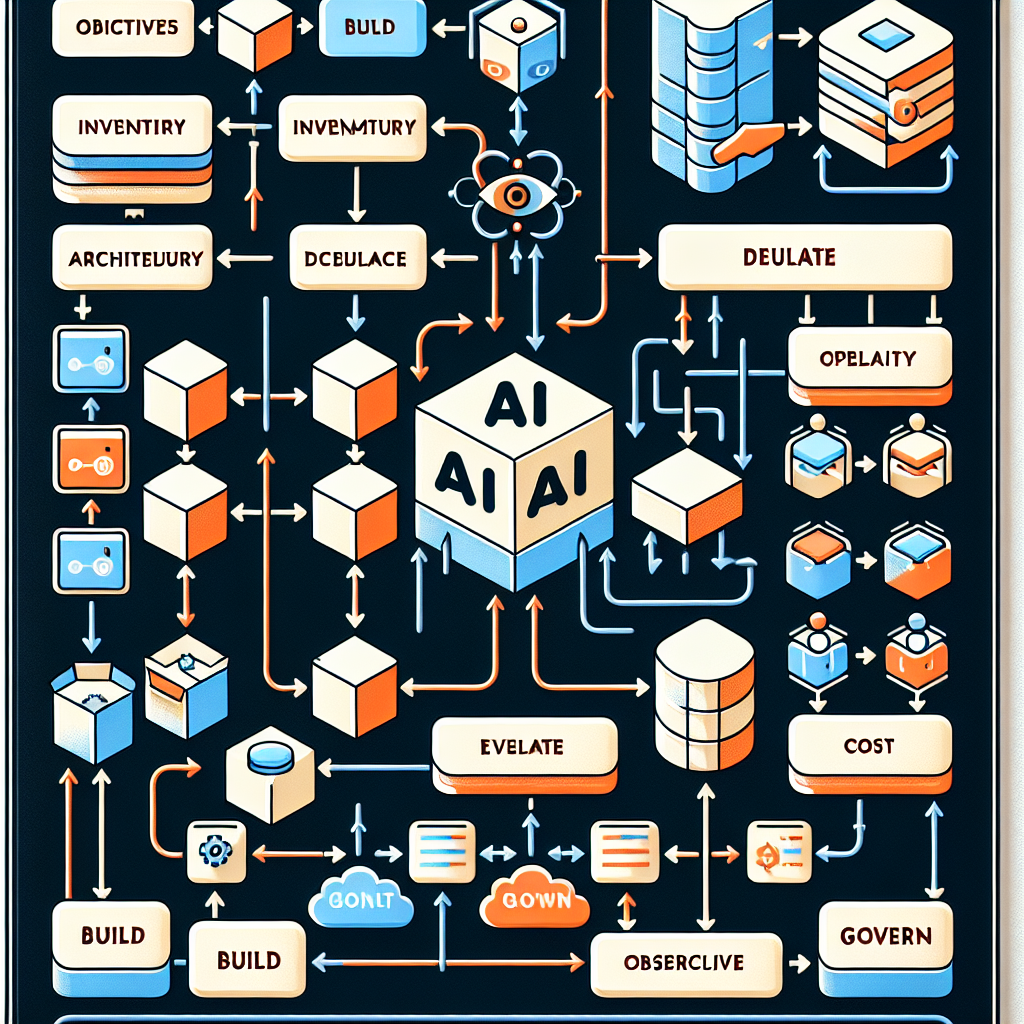

A scalable approach follows an end-to-end workflow that links objectives to operations.

1. Align objectives and metrics

Define business outcomes and guardrails. Choose task-specific quality metrics, latency targets, cost ceilings, and compliance requirements. Commit to unit economics (cost per success) as the primary financial metric.

2. Inventory use cases and dependencies

List candidate workflows, their data sources, privacy constraints, traffic patterns, and integration points. Group by similarity to maximize reuse of prompts, retrieval, and routing logic.
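An inventory is more useful when the grouping rule is explicit. The sketch below assumes a hypothetical `UseCase` record and groups by interaction pattern, latency class, and PII exposure, on the reasoning that those three drive prompt, retrieval, and routing reuse. The data source is recorded but left out of the key, since different sources can often share one retrieval pipeline through separate connectors.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """One inventory entry; the fields are a hypothetical minimum."""
    name: str
    pattern: str        # e.g. "rag", "extraction", "summarization"
    data_source: str
    latency_class: str  # "interactive" or "batch"
    contains_pii: bool


def group_for_reuse(use_cases: list[UseCase]) -> dict[tuple, list[str]]:
    """Group use cases that can likely share prompts, retrieval, and routing."""
    groups: dict[tuple, list[str]] = defaultdict(list)
    for uc in use_cases:
        groups[(uc.pattern, uc.latency_class, uc.contains_pii)].append(uc.name)
    return dict(groups)


inventory = [
    UseCase("support-answers", "rag", "kb", "interactive", True),
    UseCase("hr-policy-qa", "rag", "hr-docs", "interactive", True),
    UseCase("invoice-fields", "extraction", "erp", "batch", False),
]
print(group_for_reuse(inventory))
```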

3. Choose access and architecture

Decide model access strategy: managed API, self-hosted open-source, or hybrid. Define your core path: prompt-only, retrieval-augmented generation, or task-specific fine-tuning. Establish a platform boundary for shared components: request router, prompt registry, retrieval services, policy engine, and evaluation services.

4. Plan capacity and demand shaping

Estimate traffic by use case and time. Implement tiered routing (premium vs standard), token caps, caching for frequent queries, batching for back-office tasks, and offline processing where latency allows. Design failover across models and regions.
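Tiered routing, token caps, and failover can live in one small component. The sketch below assumes hypothetical `Backend`, `TieredRouter`, and `ModelUnavailable` types; the backends are stubs standing in for real model clients, and truncating characters stands in for a real token cap.

```python
from dataclasses import dataclass, field
from typing import Callable


class ModelUnavailable(Exception):
    """Raised by a backend that cannot serve a request (hypothetical)."""


@dataclass
class Backend:
    name: str
    max_output_tokens: int           # per-tier token cap
    call: Callable[[str, int], str]  # (prompt, max_tokens) -> completion


@dataclass
class TieredRouter:
    tiers: dict[str, list[Backend]] = field(default_factory=dict)

    def route(self, prompt: str, tier: str) -> tuple[str, str]:
        """Try the tier's backends in failover order; return (backend, output)."""
        errors = []
        for backend in self.tiers[tier]:
            try:
                return backend.name, backend.call(prompt, backend.max_output_tokens)
            except ModelUnavailable as exc:
                errors.append(f"{backend.name}: {exc}")
        raise ModelUnavailable("all backends failed: " + "; ".join(errors))


# Stub clients: one region is down, the others echo a capped prompt.
def unavailable(prompt: str, max_tokens: int) -> str:
    raise ModelUnavailable("503 from region A")


def echo(prompt: str, max_tokens: int) -> str:
    return prompt[:max_tokens]  # character cut stands in for a token cap


router = TieredRouter({
    "premium": [Backend("large-region-a", 512, unavailable),
                Backend("large-region-b", 512, echo)],
    "standard": [Backend("small", 5, echo)],
})
print(router.route("hello world", "premium"))   # fails over to region B
print(router.route("hello world", "standard"))  # capped output
```

The failover order within a tier doubles as graceful degradation: listing a lighter model last lets a premium request fall back instead of failing outright.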

5. Model the economics

Convert scenarios to spend: tokens, inference hours, storage, egress, and support. Compare reserved capacity with on-demand. Forecast variance and set alert thresholds for cost anomalies. Align budgets to rollout phases.
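Two calculations cover much of this step. The sketch assumes reserved capacity is billed for every hour and on-demand capacity only for hours used, and it flags a cost anomaly when daily spend exceeds the trailing mean by `k` standard deviations. Both are simplifications, and the function names and figures are hypothetical.

```python
import statistics


def breakeven_utilization(reserved_per_hour: float, on_demand_per_hour: float) -> float:
    """Utilization above which reserved capacity (billed every hour) beats
    on-demand capacity (billed only for hours used)."""
    return reserved_per_hour / on_demand_per_hour


def is_cost_anomaly(trailing_daily_spend: list[float], today: float, k: float = 3.0) -> bool:
    """Flag spend more than k standard deviations above the trailing mean."""
    mean = statistics.mean(trailing_daily_spend)
    stdev = statistics.stdev(trailing_daily_spend)  # needs at least two points
    return today > mean + k * stdev


print(breakeven_utilization(reserved_per_hour=2.0, on_demand_per_hour=4.0))  # 0.5
history = [100.0, 102.0, 98.0, 101.0, 99.0]
print(is_cost_anomaly(history, today=103.0), is_cost_anomaly(history, today=130.0))  # False True
```

Here reserved capacity at half the on-demand hourly rate pays off once it is busy more than 50% of the time, which is why the article suggests reserving only for steady workloads.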

6. Build shared platform components

Implement the router, secrets management, prompt and template registry, retrieval connectors, content filtering and redaction, policy enforcement, evaluation service, and a feature store for reusable signals. Keep these vendor-agnostic to reduce switching costs.
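A prompt registry mainly needs immutable versions and the ability to pin one. This in-memory `PromptRegistry` is a hypothetical sketch using Python's `str.format` placeholders; a real registry would persist versions and record who changed them.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PromptRegistry:
    """In-memory, append-only prompt registry (a hypothetical sketch)."""
    _versions: dict[str, list[str]] = field(default_factory=dict)

    def register(self, name: str, template: str) -> int:
        """Store a new immutable version and return its 1-based number."""
        versions = self._versions.setdefault(name, [])
        versions.append(template)
        return len(versions)

    def get(self, name: str, version: Optional[int] = None) -> str:
        """Return a pinned version, or the latest when version is None."""
        versions = self._versions[name]
        if version is None:
            return versions[-1]
        if not 1 <= version <= len(versions):
            raise KeyError(f"{name} has no version {version}")
        return versions[version - 1]

    def render(self, name: str, version: Optional[int] = None, **variables: str) -> str:
        """Fill the template's {placeholders} with the given variables."""
        return self.get(name, version).format(**variables)


registry = PromptRegistry()
registry.register("ticket-summary", "Summarize this ticket:\n{ticket}")
registry.register("ticket-summary", "Summarize this ticket in three bullets:\n{ticket}")
print(registry.render("ticket-summary", ticket="Printer offline"))             # latest
print(registry.render("ticket-summary", version=1, ticket="Printer offline"))  # pinned
```

Pinning a version per use case lets you upgrade one workflow's prompt without silently changing every other workflow that shares the template.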

7. Evaluate and test continuously

Create offline eval sets and run shadow traffic and A/B tests. Include red-team scenarios and policy checks. Validate across latency tiers and data segments. Automate regression checks for prompts, retrieval, and model upgrades.
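An automated regression check can be a gate that compares a candidate change against the current baseline on the same eval set. In this sketch, which is an assumption rather than a standard, scores are per-case values in [0, 1], the mean may drop by at most `max_drop`, and red-team or policy cases listed in `must_pass` must score 1.0. The case IDs are hypothetical.

```python
from statistics import mean


def regression_gate(baseline: dict[str, float], candidate: dict[str, float],
                    must_pass: set[str], max_drop: float = 0.02) -> tuple[bool, list[str]]:
    """Block a prompt, retrieval, or model change that regresses the eval set."""
    if baseline.keys() != candidate.keys():
        raise ValueError("baseline and candidate must cover the same eval cases")
    reasons = []
    drop = mean(list(baseline.values())) - mean(list(candidate.values()))
    if drop > max_drop:
        reasons.append(f"mean score dropped by {drop:.3f}")
    # Red-team and policy cases are hard gates, independent of the average.
    failed = sorted(case for case in must_pass if candidate[case] < 1.0)
    if failed:
        reasons.append("must-pass cases failed: " + ", ".join(failed))
    return not reasons, reasons


baseline = {"faq-1": 1.0, "faq-2": 0.8, "jailbreak-1": 1.0}
candidate = {"faq-1": 1.0, "faq-2": 0.9, "jailbreak-1": 0.0}
print(regression_gate(baseline, candidate, must_pass={"jailbreak-1"}))
```

Keeping policy cases as hard gates prevents a candidate from "buying back" a safety failure with gains on ordinary cases.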

8. Deploy with controlled rollout

Use canaries and progressive feature flags. Enforce SLOs at the edge. Provide manual escalation paths and clear rollback procedures. Document change impacts and keep audit trails.
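Progressive feature flags need stable cohort assignment so a user does not flip between old and new behavior as a rollout widens. A common approach, sketched here with a hypothetical `in_rollout` helper, hashes the flag name and user ID into one of 10,000 buckets.

```python
import hashlib


def in_rollout(user_id: str, flag: str, percent: float) -> bool:
    """Deterministically assign a user to a flag's rollout cohort.

    Hashing flag and user together gives each user a stable bucket per flag,
    so raising percent only adds users; nobody flips back and forth.
    """
    digest = hashlib.sha256(f"{flag}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return bucket < percent * 100                        # percent in [0, 100]


users = [f"user-{i}" for i in range(10_000)]
for pct in (1, 5, 25, 100):
    share = sum(in_rollout(u, "new-router", pct) for u in users) / len(users)
    print(f"{pct}% target -> {share:.1%} of users")
```

Rolling back is then a matter of lowering `percent`, and the users removed are exactly those most recently added.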

9. Observe quality, latency, and cost

Emit logs, traces, and metrics for every request. Monitor quality outcomes, drift, and policy events. Track token usage and per-task costs. Visualize trends and alert when SLOs or budgets are breached.
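Per-request instrumentation is easiest to aggregate when every request emits one structured record. The `RequestRecord` fields and the `evaluate_alerts` thresholds below are assumptions for illustration; in practice these records would flow into your existing logging and APM stack rather than `print`.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class RequestRecord:
    """One structured log line per request (field names are assumptions)."""
    request_id: str
    use_case: str
    model: str
    latency_ms: float
    input_tokens: int
    output_tokens: int
    cost: float
    succeeded: bool
    policy_violation: bool = False


def evaluate_alerts(records: list[RequestRecord], p95_slo_ms: float,
                    budget: float) -> list[str]:
    """Return alert messages for SLO, budget, and policy breaches in a window."""
    alerts = []
    latencies = sorted(r.latency_ms for r in records)
    if latencies:
        rank = (95 * len(latencies) + 99) // 100  # nearest-rank p95
        if latencies[rank - 1] > p95_slo_ms:
            alerts.append(f"p95 latency {latencies[rank - 1]:.0f} ms exceeds SLO")
    spend = sum(r.cost for r in records)
    if spend > budget:
        alerts.append(f"spend {spend:.2f} exceeds budget {budget:.2f}")
    violations = sum(r.policy_violation for r in records)
    if violations:
        alerts.append(f"{violations} policy violation(s)")
    return alerts


window = [
    RequestRecord("r1", "support", "small", 850.0, 1200, 300, 0.002, True),
    RequestRecord("r2", "support", "large", 4100.0, 1500, 600, 0.030, False, True),
]
for record in window:
    print(json.dumps(asdict(record)))  # ship to your log pipeline
print(evaluate_alerts(window, p95_slo_ms=2000, budget=0.01))
```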

10. Govern risk and compliance

Maintain a model and dataset inventory, data protection impact assessments, privacy and security controls, access reviews, and incident runbooks. Audit prompts, outputs, and decisions where required. Establish vendor risk monitoring and exit plans.
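One way to make audit trails tamper-evident, offered here as an assumption rather than a requirement, is to chain each entry's hash to the previous entry. This hypothetical `AuditLog` sketch stores SHA-256 digests of prompts and outputs; if your audit obligations require the raw text, keep it in a controlled store alongside the digests. The chain alone cannot detect removal of the newest entries, so the latest hash would need to be anchored somewhere external.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(payload: dict) -> str:
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only, hash-chained audit log (an in-memory sketch)."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, actor: str, action: str, prompt: str, output: str) -> dict:
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            # Digests prove what was seen without copying raw text into the log.
            "prompt_sha256": hashlib.sha256(prompt.encode()).hexdigest(),
            "output_sha256": hashlib.sha256(output.encode()).hexdigest(),
            "prev": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry["hash"] = _digest(entry)  # covers every field above, including prev
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; editing an entry, or removing one before the end, breaks it."""
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("svc-support", "answer", "How do I reset MFA?", "Open Settings > Security.")
log.append("reviewer-42", "approve", "How do I reset MFA?", "Open Settings > Security.")
print(log.verify())
```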

Tools and Technologies

Model access: managed APIs (major cloud providers and model platforms) for speed and reliability; self-hosting with inference servers (e.g., vLLM, TGI) for control and cost shaping; hybrids for flexibility.

Orchestration: lightweight service layers or frameworks that manage routing, prompt construction, retrieval, and tool-calling. Favor clear contracts and observability over feature sprawl.

Retrieval: vector options (pgvector, Milvus, Pinecone) and search-first options (OpenSearch, Elasticsearch). Choose based on data size, query complexity, and latency targets.

Observability and evaluation: request tracing, quality evaluation libraries, experiment management, and anomaly detection. Integrate with existing logging and APM tools.

Cost and capacity: token accounting proxies, caching layers (Redis), rate limiting (Envoy), and load testing (k6, Locust) to validate throughput and latency before broad rollout.
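Caching frequent queries reduces both spend and latency. The sketch below is an in-memory stand-in for a shared cache such as Redis; `ResponseCache` is hypothetical, and normalizing case and whitespace in the key is an assumption that only holds when such differences cannot change the answer. The injectable clock makes expiry easy to test.

```python
import hashlib
import time
from typing import Callable


class ResponseCache:
    """In-memory TTL cache; a shared store such as Redis plays this role in production."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: dict[str, tuple[float, str]] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def key(model: str, prompt: str) -> str:
        # Assumption: case and whitespace differences do not change the answer.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(f"{model}\n{normalized}".encode()).hexdigest()

    def get_or_compute(self, model: str, prompt: str,
                       compute: Callable[[str], str]) -> str:
        """Return a fresh cached answer, or call the model and cache the result."""
        k = self.key(model, prompt)
        now = self.clock()
        cached = self._store.get(k)
        if cached is not None and cached[0] > now:
            self.hits += 1
            return cached[1]
        self.misses += 1
        value = compute(prompt)
        self._store[k] = (now + self.ttl, value)
        return value


def model_call(prompt: str) -> str:
    return f"answer to {prompt!r}"  # stub for a real model client


fake_now = [0.0]
cache = ResponseCache(ttl_seconds=300, clock=lambda: fake_now[0])
cache.get_or_compute("small", "Reset my password", model_call)
cache.get_or_compute("small", "reset  my password", model_call)  # normalized hit
fake_now[0] = 301.0
cache.get_or_compute("small", "Reset my password", model_call)   # expired, recomputed
print(cache.hits, cache.misses)  # 1 2
```

Including the model name in the key keeps answers from different model tiers separate, so a downgrade during degradation does not poison the premium cache.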

Governance and risk: policy engines, content safety filters, PII detection and redaction, data catalogs, and audit tooling. Ensure controls sit inline with the request path and are logged.
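Inline redaction runs before a prompt leaves your boundary, and its results should be logged. The two regular expressions below are illustrative only and miss many forms of PII; production systems typically rely on dedicated PII detection. The hypothetical `redact` helper returns per-type counts so the event can be recorded.

```python
import re

# Illustrative patterns only; production systems use dedicated PII detectors.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace matches with typed placeholders and return counts for logging."""
    counts = {}
    for label, pattern in PII_PATTERNS.items():
        text, counts[label] = pattern.subn(f"[{label}]", text)
    return text, counts


clean, found = redact("Contact jane.doe@example.com or 555-123-4567.")
print(clean)  # Contact [EMAIL] or [PHONE].
print(found)  # {'EMAIL': 1, 'PHONE': 1}
```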

Examples and Applications

Customer support assistant

Baseline impact: retrieval-augmented responses reduce handle time and improve first-contact resolution. Scaled impact: add tiered routing (fast vs frugal models), escalation triggers on low-confidence answers, and batch summarization for overnight tickets to cut costs without hurting service.

Document automation

Baseline impact: classify and extract fields from contracts or invoices with prompt templates. Scaled impact: introduce schema-aware retrieval, fine-tune on edge cases, and implement quality gates with human-in-the-loop for exceptions to maintain accuracy at higher volumes.

Software delivery workflow

Baseline impact: generate code snippets and tests to speed routine tasks. Scaled impact: constrain generations with repository-aware retrieval, enforce security and compliance checks, and route complex tasks to stronger models while caching common patterns.

Risk analysis

Baseline impact: summarize incidents and highlight control gaps. Scaled impact: integrate structured risk taxonomies, add policy validation on outputs, and keep audit-ready logs that tie recommendations to evidence sources.

Tables and Comparisons

Compare common model access strategies to choose the right fit for AI scalability.

| Approach | Benefits | Trade-offs | Practical considerations |
| --- | --- | --- | --- |
| Managed API | Fast start, high reliability, low ops overhead | Variable cost, vendor lock-in risk, limited tuning control | Use for spiky demand and early scale; negotiate volume pricing; design for portability |
| Self-hosted OSS | Cost control, customization, data locality | Ops complexity, capacity planning burden, upgrade cadence | Adopt for steady workloads; invest in observability and autoscaling; validate quality parity |
| Hybrid | Flexibility, resilience, performance tiering | More integration work, routing complexity | Use routers and common contracts; define failover; align SLOs across providers |

FAQ

How do I know whether AI scalability is working?

Track cost per successful task, p95 latency, task-specific quality, and policy violations. Improvements should show stable or falling unit cost, stable latency under load, and maintained or improved quality.

How can I control cost as usage grows?

Use tiered routing, caching, batching, and token caps. Choose model classes by task complexity, and move steady workloads to reserved or self-hosted capacity. Monitor cost anomalies in real time.

Should I use managed APIs or self-host?

If demand is uncertain and time-to-value matters, start with managed APIs. For predictable loads or specific control needs, add self-hosted capacity. Many teams end with a hybrid that routes by task and SLA.

How do I handle latency spikes at scale?

Pre-warm capacity, set rate limits, batch where possible, and use regional failover. Cache frequent queries and degrade gracefully to lighter models when needed.

What risks require governance?

Data privacy, security, misuse, bias, hallucinations, and vendor dependency. Enforce inline policy checks, keep audit trails, and review access and model changes regularly.

Conclusion

Scalability is an operating discipline. When you tie architecture choices to unit economics, SLOs, and governance, you get predictable cost, reliable service, and a defensible risk posture. The path is repeatable: set objectives, design for portability and observability, evaluate continuously, and scale the platform, not just the models. That is how AI scalability turns proof-of-concept momentum into durable enterprise ROI.