TL;DR

AI SaaS combines machine learning, data engineering, and cloud-native delivery to offer scalable, multi-tenant AI capabilities as a product.

It matters because it turns one-off experiments into repeatable outcomes backed by reliability, compliance, and sustainable unit economics.

It helps teams move from pilots to production by aligning architecture, operations, and growth into one system.

Executive Summary

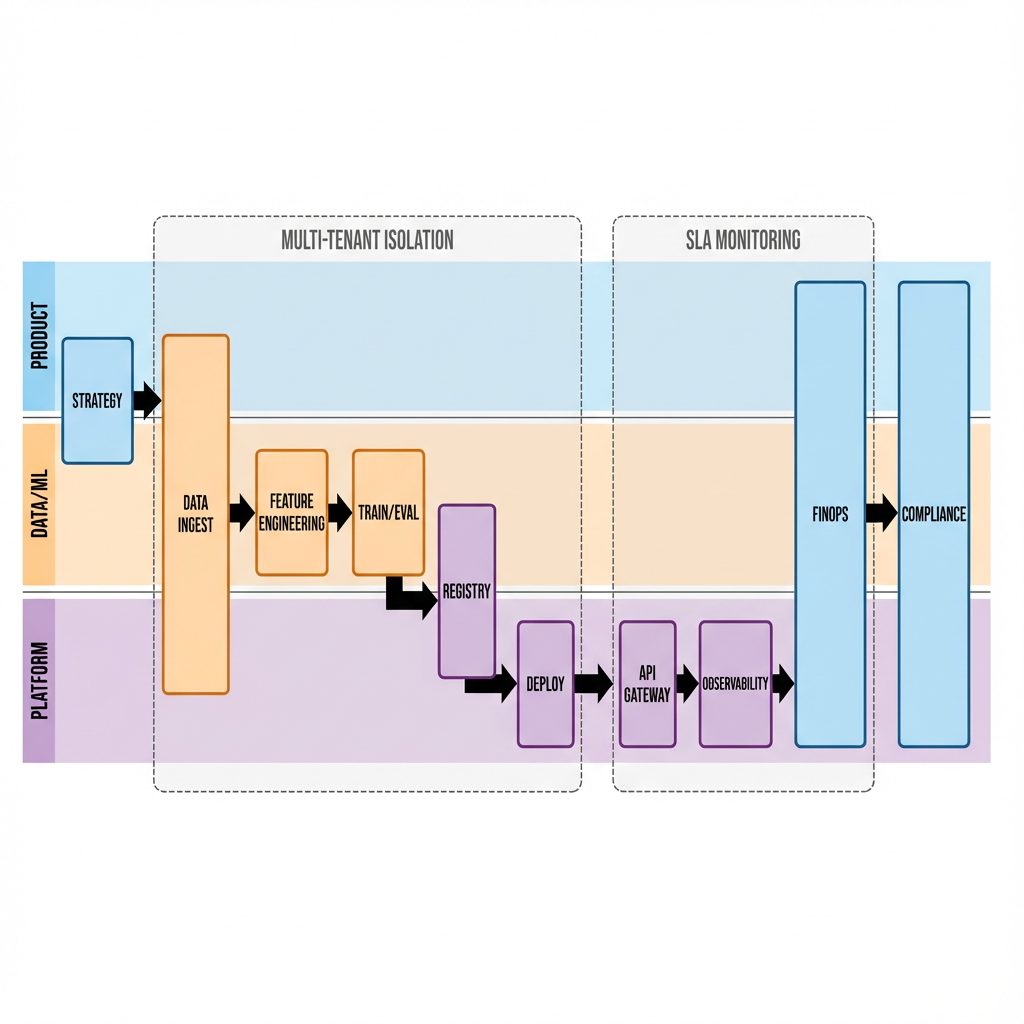

AI SaaS is the operating model that turns models and datasets into dependable, measurable, and secure services. Success depends on multi-tenant isolation, robust data pipelines, model lifecycle management, observability, cost control, and customer-centric SLAs. This piece outlines the architecture, workflow, and trade-offs enterprises face when building AI SaaS, and how to balance time-to-value with long-term maintainability.

Introduction

Across industries, the shift from isolated pilots to production-grade AI has exposed a hard truth: models are easy to demo but hard to operate. AI SaaS addresses this by packaging AI capabilities as a cloud service with clear APIs, SLAs, and governance. Teams that adopt AI SaaS patterns reduce deployment friction, improve reliability, and create repeatable business outcomes while managing cost, compliance, and scale.

Understanding the Topic

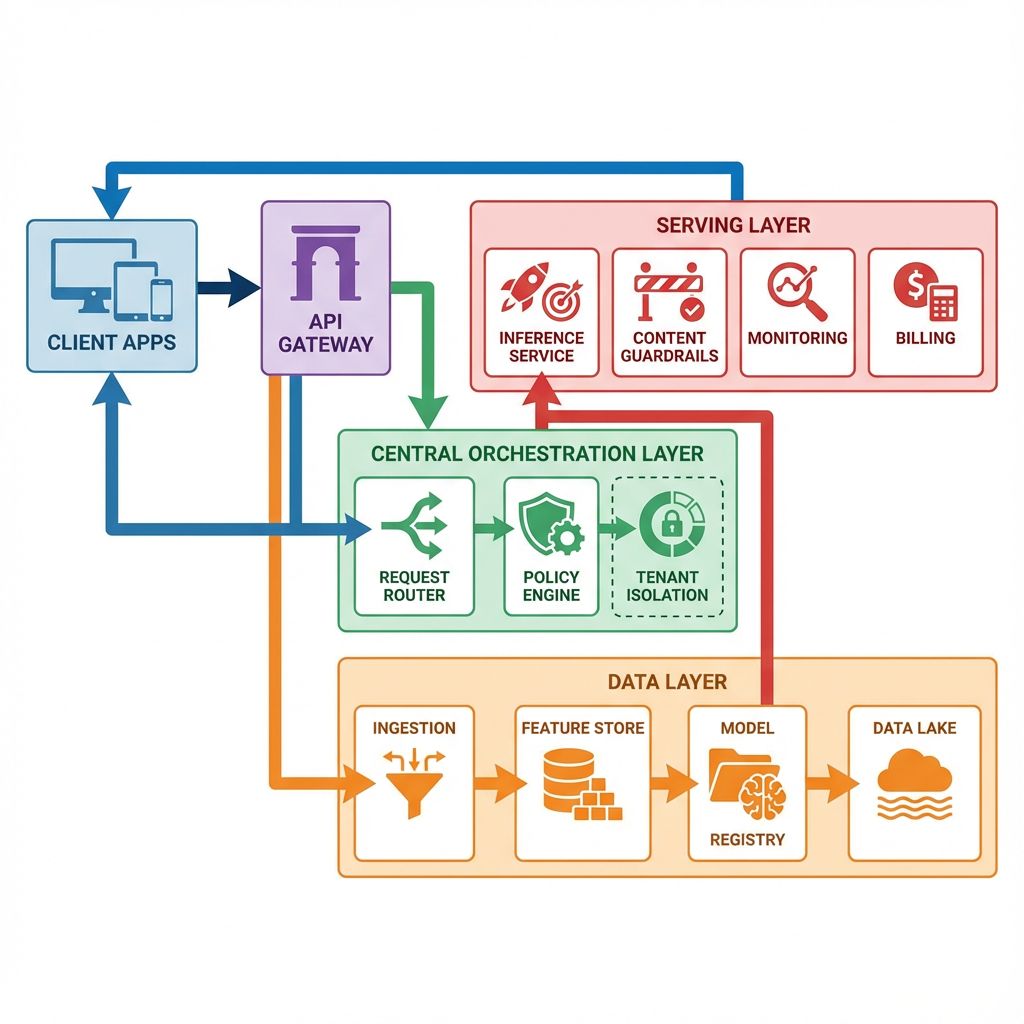

AI SaaS is the delivery of AI capabilities (such as predictions, recommendations, summarization, or automation) through a cloud-based, multi-tenant software service. It merges data engineering, ML/LLM operations, and product engineering to offer customers consistent APIs, usage-based pricing, and enterprise controls.

In an enterprise context, AI SaaS provides standardized ingestion, feature computation, model training and selection, inference at scale, and post-deployment monitoring within a governed, auditable framework. Customers consume AI functions via SDKs or REST/GraphQL endpoints, while the provider maintains isolation, security, and uptime across tenants.

Beginner perspective: think of AI SaaS as a reliable utility. You feed data and configuration into a secure API, receive predictions or generated content, and pay for what you use. Experienced perspective: think of AI SaaS as a platform layer that handles policy-as-code, lineage, versioning, cost attribution, and performance SLOs across diverse models and data sources.

How This Works in Practice

1. Product Strategy and Outcomes

Define the smallest valuable AI capability (e.g., fraud scoring, personalized recommendations, document summarization) and the measurable business outcome (conversion, resolution time, revenue lift, cost savings). Establish SLA targets, compliance requirements, and pricing strategy.

2. Data Foundation

Set up controlled ingestion from customer sources (files, streams, APIs), apply schema validation, PII handling, and lineage tracking. Build feature pipelines with versioned definitions, a feature store, and quality checks. Ensure tenant isolation at storage and processing layers.
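
As a rough illustration of the ingestion step above, the following sketch validates a record against a schema, masks one kind of PII, and tags the result with its tenant. The schema, the field names, and the `ingest` helper are hypothetical, and the email-only masking is a deliberate simplification; a real pipeline would cover more PII types and persist lineage.

```python
import re
from dataclasses import dataclass

# Hypothetical schema for illustration: field name -> required Python type.
SCHEMA = {"customer_id": str, "amount": float, "note": str}

# Simplified email pattern; real PII detection needs far broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


@dataclass(frozen=True)
class IngestedRecord:
    tenant_id: str  # every stored record carries its owning tenant
    payload: dict


def validate(record: dict) -> list[str]:
    """Return a list of schema violations (an empty list means valid)."""
    errors = []
    for field_name, expected in SCHEMA.items():
        if field_name not in record:
            errors.append(f"missing field: {field_name}")
        elif not isinstance(record[field_name], expected):
            errors.append(f"{field_name}: expected {expected.__name__}")
    return errors


def mask_pii(text: str) -> str:
    """Replace email addresses with a fixed token."""
    return EMAIL_RE.sub("[EMAIL]", text)


def ingest(tenant_id: str, record: dict) -> IngestedRecord:
    """Reject invalid records, mask PII in free text, and tag the tenant."""
    errors = validate(record)
    if errors:
        raise ValueError("; ".join(errors))
    cleaned = dict(record, note=mask_pii(record["note"]))
    return IngestedRecord(tenant_id=tenant_id, payload=cleaned)
```

Rejecting bad records at the boundary keeps downstream feature pipelines from having to re-check shape, and carrying `tenant_id` on every record makes isolation checks possible at later stages.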

3. Model Development and Selection

Use reproducible training pipelines with tracked datasets, parameters, and metrics. Maintain a model registry with approvals, test coverage, and policy gates. For LLMs, manage prompts, fine-tunes, and adapters as first-class artifacts alongside traditional ML models.
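
One way to picture a registry with approvals and policy gates is the in-memory sketch below. `ModelRegistry`, `ModelVersion`, the `auc` metric, and the 0.80 threshold are all assumptions made for illustration; they do not correspond to any particular registry product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModelVersion:
    name: str
    version: int
    metrics: dict                      # e.g. {"auc": 0.91}, recorded at training time
    approved_by: Optional[str] = None  # set once a reviewer signs off
    stage: str = "staging"


class ModelRegistry:
    """In-memory stand-in for a model registry (hypothetical)."""

    def __init__(self, min_auc: float = 0.80):
        self.min_auc = min_auc
        self._versions = {}

    def register(self, mv: ModelVersion) -> None:
        self._versions[(mv.name, mv.version)] = mv

    def promote(self, name: str, version: int) -> ModelVersion:
        """Move a version to production only if every gate passes."""
        mv = self._versions[(name, version)]
        if mv.approved_by is None:
            raise PermissionError("promotion requires a recorded approval")
        if mv.metrics.get("auc", 0.0) < self.min_auc:
            raise ValueError("metric gate failed: auc below threshold")
        mv.stage = "production"
        return mv
```

The same gate structure could apply to prompts or adapters by registering them as versions with their own evaluation metrics.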

4. Deployment and Inference

Package models into scalable inference services behind an API gateway. Apply autoscaling, request quotas, and latency budgets by tenant. Implement content filters, guardrails, and structured outputs for LLM endpoints. Use blue/green or canary strategies for low-risk rollouts.
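
Two of the controls mentioned above, per-tenant request quotas and canary rollouts, can be sketched with the standard library alone. The token bucket and hash-based routing shown here are common techniques chosen for illustration, not necessarily what a given gateway uses; the class and function names are hypothetical.

```python
import hashlib
import time


class TokenBucket:
    """Per-tenant quota: tokens refill at a steady rate up to a fixed capacity."""

    def __init__(self, rate_per_sec: float, capacity: float, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.clock = clock          # injectable so tests can control time
        self.tokens = capacity
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


def route_version(tenant_id: str, canary_percent: int) -> str:
    """Send roughly canary_percent of tenants to the canary, deterministically."""
    digest = hashlib.sha256(tenant_id.encode()).digest()
    bucket = int.from_bytes(digest[:2], "big") % 100
    return "canary" if bucket < canary_percent else "stable"
```

In use, a gateway would keep one bucket per tenant, for example `buckets[tenant_id].allow()`, and reject or queue the request when it returns `False`. Hashing the tenant ID keeps each tenant on one version for the whole rollout, which makes regressions easier to attribute.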

5. Security, Compliance, and Isolation

Enforce encryption at rest and in transit, key management, secret rotation, and role-based access. Apply tenant-aware policy checks, data residency, consent and deletion routines, and audit logs. Integrate model governance: approvals, audit trails, and risk classifications.
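
A tenant-aware policy check with an audit trail might look like the sketch below. The roles, the region table, and the `authorize` function are hypothetical; production systems typically delegate this to a dedicated policy engine and write audit entries to durable storage rather than a list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    tenant_id: str        # tenant of the caller
    role: str
    action: str
    resource_tenant: str  # tenant that owns the requested resource
    region: str           # region where the request would be processed


# Hypothetical policy data, expressed as plain code so it can be reviewed and versioned.
ROLE_ACTIONS = {"admin": {"read", "write", "delete"}, "analyst": {"read"}}
TENANT_REGIONS = {"acme": {"eu-west"}, "globex": {"us-east", "eu-west"}}

audit_log = []  # stand-in for an append-only audit store


def authorize(req: AccessRequest) -> bool:
    """Evaluate every check, record the decision, and return the verdict."""
    checks = {
        "same_tenant": req.tenant_id == req.resource_tenant,
        "role_allows": req.action in ROLE_ACTIONS.get(req.role, set()),
        "region_allowed": req.region in TENANT_REGIONS.get(req.tenant_id, set()),
    }
    allowed = all(checks.values())
    audit_log.append({
        "tenant": req.tenant_id,
        "action": req.action,
        "allowed": allowed,
        "failed": [name for name, ok in checks.items() if not ok],
    })
    return allowed
```

Logging denied requests along with the specific checks that failed gives auditors the reason for each decision, not just the outcome.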

6. Observability and Quality

Instrument end-to-end telemetry: data quality, feature drift, model performance, latency, and error rates. Track user-level outcomes and feedback loops. Configure automated alerts and fallback behavior when quality or costs deviate.
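
Feature drift can be quantified in several ways; one widely used measure is the Population Stability Index (PSI), which compares the binned distribution of a feature at training time with its distribution in production. The sketch below is a minimal version under stated assumptions: equal-width bins derived from the baseline, a small floor to avoid division by zero, and an alert threshold of 0.2 that is an illustrative choice to be tuned per feature.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a current sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range are clamped into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each share at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_alert(expected, actual, threshold=0.2):
    """Return True when drift exceeds the (assumed) alert threshold."""
    return psi(expected, actual) > threshold
```

An alert like this would typically trigger the fallback behavior described above, such as routing to a previous model version or flagging outputs for review.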

7. FinOps and Cost Control

Attribute compute, storage, and third-party API costs per tenant and per feature. Optimize batching, caching, quantization, and routing to cheaper models when possible. Align pricing with actual resource consumption and value.
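
To make cost attribution and cheaper-model routing concrete, here is a small sketch. The model names, the per-token prices, and the routing rule are invented for illustration and do not reflect any vendor's pricing.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens.
PRICE_PER_1K = {"small": 0.0005, "large": 0.01}


def pick_model(prompt_tokens: int, needs_reasoning: bool, small_limit: int = 2000) -> str:
    """Route to the cheaper model unless the request clearly needs the larger one."""
    if needs_reasoning or prompt_tokens > small_limit:
        return "large"
    return "small"


class CostLedger:
    """Accumulates cost per (tenant, feature) pair."""

    def __init__(self):
        self._totals = defaultdict(float)

    def record(self, tenant_id: str, feature: str, model: str, tokens: int) -> float:
        cost = tokens / 1000 * PRICE_PER_1K[model]
        self._totals[(tenant_id, feature)] += cost
        return cost

    def by_tenant(self) -> dict:
        """Roll feature-level costs up to one total per tenant."""
        out = defaultdict(float)
        for (tenant, _feature), cost in self._totals.items():
            out[tenant] += cost
        return dict(out)
```

Keeping the ledger keyed by both tenant and feature lets the same data answer two questions: what each customer costs to serve, and which capability drives that cost.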

8. Customer Experience and Growth

Offer SDKs, sandbox environments, and usage dashboards. Provide self-serve onboarding, quotas, and upgrade paths. Maintain a release cadence, migration notes, and clear deprecation timelines to reduce customer friction.

Tools and Technologies

Data and Features

Data lakes and warehouses; streaming platforms; schema enforcement; feature stores; data quality validators; lineage trackers.

Model Lifecycle

Experiment tracking; model registries; training orchestration; evaluation frameworks; prompt management for LLMs; artifact versioning.

Serving and Orchestration

API gateways; service meshes; serverless and container orchestration; autoscaling; request routing; content moderation and guardrails.

Security and Compliance

Identity and access management; secrets management; KMS; audit logging; data masking and tokenization; policy-as-code; compliance reporting.

Observability and FinOps

Metrics, logs, and traces; drift and bias detection; performance dashboards; cost attribution and budgets; capacity planners.

Developer Experience

SDKs; CLI tooling; CI/CD pipelines; testing harnesses; documentation systems; sandbox environments.

Examples and Applications

Customer Support Automation

LLM-powered summarization and response suggestions delivered via an AI SaaS endpoint can reduce handle time and increase consistency. Starter implementations focus on templated intents and guardrails; advanced teams add retrieval augmentation, feedback loops, and per-tenant fine-tunes.

Revenue Intelligence

Predictive scoring and opportunity insights exposed through APIs help sales platforms prioritize actions. Early users apply standardized models; mature users integrate custom features, domain adapters, and scenario testing.

Risk and Compliance Monitoring

Stream-based anomaly detection, policy checks, and audit trails served as AI SaaS minimize manual review load while improving coverage. Initial deployments track high-level signals; scaled programs incorporate granular lineage, explainability, and human-in-the-loop escalation.

Document Processing

Extraction, classification, and summarization delivered as services accelerate onboarding, claims, and KYC workflows. Teams begin with robust parsers and structured outputs; later they introduce adaptive routing and cost-aware model selection.

Tables and Comparisons

| Approach | Benefits | Trade-offs | Best Fit |
| --- | --- | --- | --- |
| Single-tenant AI SaaS | Simpler isolation; tailored configs | Higher costs; slower scale | Strict compliance, small customer base |
| Multi-tenant AI SaaS | Economies of scale; faster iteration | Complex isolation; noisy neighbors risk | Broad market, usage-based pricing |
| Managed LLM-first | Fast time-to-value; minimal infra | Vendor lock-in; variable costs | Rapid prototyping, mixed workloads |
| Self-hosted models | Cost control; customization | Operational burden; talent needs | High-volume, stable demand |
| Hybrid routing | Quality/cost balance; resilience | Complex orchestration | Dynamic traffic and SLAs |

FAQ

How is AI SaaS different from traditional ML platforms?

Traditional platforms focus on internal model development; AI SaaS focuses on externalized, multi-tenant delivery with SLAs, billing, compliance, and customer-facing APIs.

Do I need fine-tuning to launch?

No. Start with robust base models, retrieval augmentation, and guardrails. Add fine-tuning or adapters when you have clear data advantages and measurable gains.

How do I control costs as usage grows?

Implement per-tenant quotas, intelligent routing, caching, batching, and model selection. Continuously attribute and review costs against pricing and value.
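
Of the levers listed, caching is often the simplest to add. The sketch below assumes a tenant-scoped, time-limited cache in front of a model call; `TenantCache` and the `compute` callback are hypothetical, and whitespace normalization is only safe where spacing does not change the meaning of a prompt.

```python
import hashlib
import time


class TenantCache:
    """Response cache keyed by tenant, model, and prompt, with a TTL."""

    def __init__(self, ttl_seconds: float = 300.0, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so tests can control time
        self._store = {}

    @staticmethod
    def _key(tenant_id: str, model: str, prompt: str) -> str:
        normalized = " ".join(prompt.split())  # collapse runs of whitespace
        raw = f"{tenant_id}\x00{model}\x00{normalized}".encode()
        return hashlib.sha256(raw).hexdigest()

    def get_or_compute(self, tenant_id: str, model: str, prompt: str, compute):
        """Return a fresh cached value, or call compute(prompt) and store the result."""
        key = self._key(tenant_id, model, prompt)
        now = self.clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]
        value = compute(prompt)
        self._store[key] = (now, value)
        return value
```

Including the tenant ID in the key means one tenant's cached output is never served to another, which trades some hit rate for isolation.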

What are the minimum governance requirements?

Policy-as-code gates, audit logging, data lineage, approval workflows for changes, and documented SLAs. For regulated industries, add residency controls and human-in-the-loop review.

How do I measure success?

Track both technical SLOs (latency, error rates) and business KPIs (conversion, savings, satisfaction). Tie model changes to outcome deltas with controlled rollouts.
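
For a conversion-style KPI, the outcome delta from a controlled rollout can be estimated with a two-proportion z-test, as in the sketch below. This is one standard approach rather than the only one; the function name and the returned keys are illustrative, and the test assumes independent samples that are large enough for a normal approximation.

```python
import math


def conversion_delta(control_conv: int, control_n: int, treat_conv: int, treat_n: int) -> dict:
    """Compare conversion rates between a control group and a treatment group."""
    p_c = control_conv / control_n
    p_t = treat_conv / treat_n
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (control_conv + treat_conv) / (control_n + treat_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / treat_n))
    z = (p_t - p_c) / se if se > 0 else 0.0
    return {
        "control_rate": p_c,
        "treatment_rate": p_t,
        "absolute_delta": p_t - p_c,
        "z_score": z,
    }
```

A `z_score` above roughly 1.96 in absolute value corresponds to significance at the 5% level for a two-sided test, which gives a simple bar for deciding whether a model change actually moved the KPI.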

Next Steps

If you are moving AI from pilots to a dependable service, align architecture, governance, and economics early. A clear AI SaaS foundation will shorten time-to-value, reduce risk, and create room for innovation.