AI integration sounds straightforward until it’s running against live traffic. The stack isn’t just models, prompts, and an endpoint. It’s policy, data contracts, observability, and blast-radius control packed into tight latency budgets. Without a plan for governance and infrastructure, the feature works on day one and becomes a liability on day thirty.

Executive Summary

Governance and infrastructure become unavoidable the first time a model change silently alters outputs, a vendor throttles you mid-campaign, or an audit asks for evidence you never collected. The work is not about control for its own sake. It’s about preserving predictability under variability.

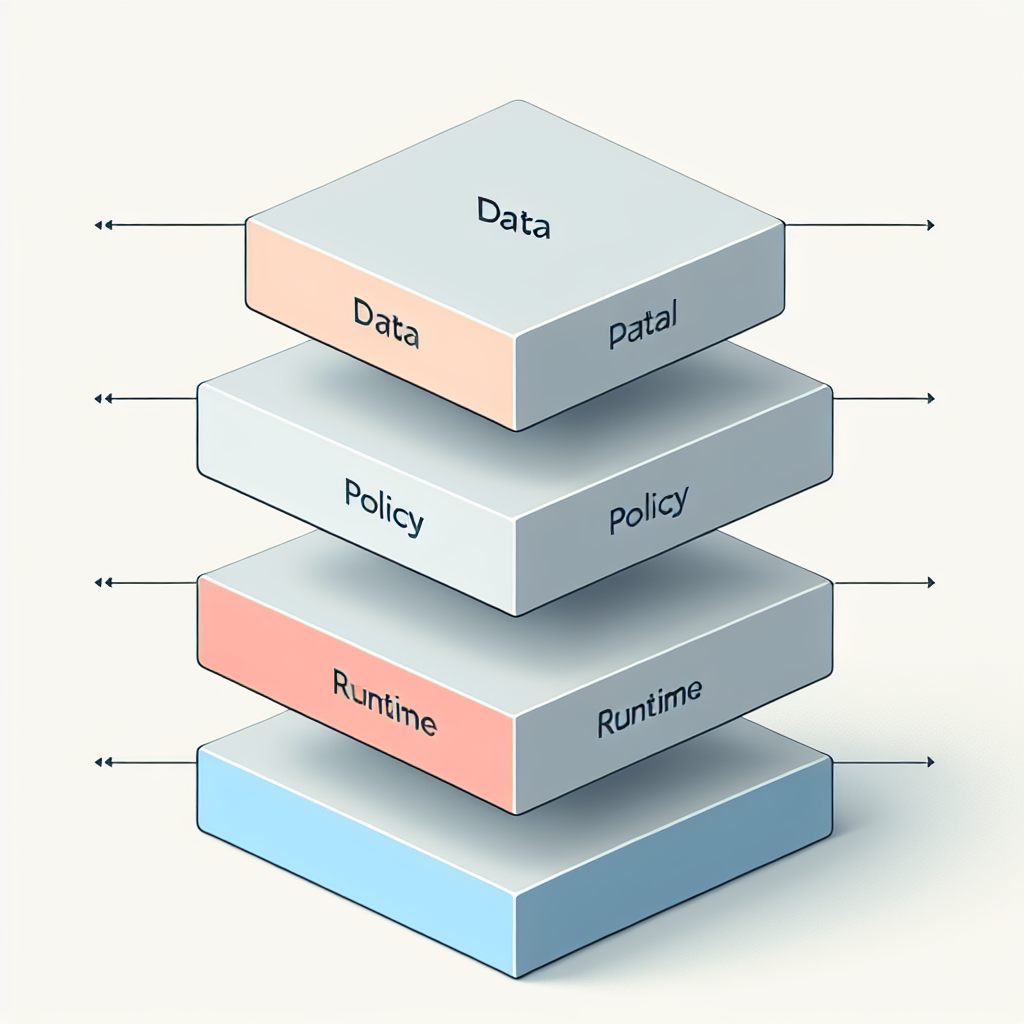

In real systems, policy doesn’t sit above the stack. It lives alongside request routing, caching, data lineage, and cost caps. The design goal is to make the safe path the fastest path: the shortest route to production also produces logs, applies guardrails, and can be rolled back without drama.

Sequencing matters more than tooling. Decide prompts without data contracts, and you hardcode assumptions you’ll rip out later. Lock in a vector index before deciding retention policy, and you inherit storage obligations you never priced. The stack is a negotiation between product ambition and operating friction.

The result won’t be elegant. The teams that win ship a stack that tolerates mess: reversible choices, quarantine lanes for risky calls, and controls that degrade gracefully when the world changes underneath them.

Introduction

Picture a launch week: the new AI-powered assistant is live, traffic doubles, and the weekend on-call sees cost spike and latency wander. A routine model upgrade lands, intent classification shifts by a few points, and refund workflows trigger incorrectly. Support escalates. Product asks for a hotfix. Security asks who approved the data use. No one has a single place to answer any of it.

That’s how Building the Modern AI Integration Stack: Governance & Infrastructure shows up as a requirement, not an architecture diagram. The ai integration didn’t fail; the system around it was never built to hold it. Once you’ve lived through an outage caused by a policy hole or a misaligned index refresh, you stop treating this as a research problem. You build the rails.

Operational weight changes everything: latency budgets collide with policy and cost

In production, “AI feature” means a request enters untrusted, touches curated data, runs through model calls that can change behavior daily, and must exit constrained by policy, cost, and time. The integrity of the request is only as strong as the weakest link: tokenization mismatches, stale embeddings, brittle prompt routing, or missing rate-limit backstops.

The hard boundary is your SLO. Users don’t care why the answer took 4 seconds instead of 700 ms. Governance adds checks you must afford. So every policy—PII scrubbing, output filtering, jurisdiction routing—needs a latency price tag and a failure mode. Do you fail closed and risk user drop-off, or fail open and risk legal exposure? If you don’t choose, the system will choose for you during an incident.

Data governance and lineage become daily work. Whatever you embed is now subject to retention and revocation. A takedown request doesn’t just remove a file; it invalidates a slice of your index and the downstream caches. You can either rebuild continuously with incremental compaction or run on stale knowledge and accept wrong answers with confidence.

Cost control is not a dashboard; it’s an architectural primitive. You need per-tenant quotas, request shaping (early summarization, adaptive context), and a budget-aware router that can downgrade or short-circuit expensive paths under load. If a spike hits, you want the system to degrade to “good enough” without paging five teams.

Observability is the only way to tell policy from superstition. Not just logs, but correlating prompts, inputs, policy decisions, model versions, and outcomes in a timeline keyed to user and cohort. When outputs drift, you need to answer: did the prompt change, the data change, the model change, or the policy path change?

Sequencing work to avoid whiplash between governance and delivery

The order you make decisions decides how many you’ll redo. Start with product boundaries: which user actions invoke AI, what must never leave the trust boundary, and how wrong the system is allowed to be per path. That sets constraints on data contracts and routing. Only then shape prompts and retrieval strategies.

Handoffs that actually stick

Policy authors need a runtime to express norms as code—redaction, jurisdiction routing, safe output checks—without waiting for a backend rewrite. Platform needs that policy to compile into the same execution graph the rest of the request uses. If policy lives in a wiki, engineers will bypass it under pressure.

Data teams own lineage, but they can’t block the release train. The compromise is a catalog with tiers of acceptability and automated gates that fail fast when a dataset moves between tiers. This avoids late-stage reversals where an index rebuild invalidates a week of prompt tuning.

Where friction piles up

Retrieval strategies get re-litigated when latency meets reality. It’s common to see a move from per-request embedding to batched preprocessing once bills arrive. If you don’t have a path for background jobs with the same policy enforcement, you’ll split the stack and double your risk.

Model choice flips under load. A path that used a larger model in tests gets routed to a smaller one in production to hit P50 targets, and quality metrics wobble in certain cohorts. Without a router that explains its decisions and a feedback loop that ties outcomes to routing, you end up chasing ghosts.

Security finds gaps after go-live. Output filtering that worked in staging can fail in production logs if a single service skips redaction. That’s not a policy error; it’s a propagation problem. You need a single redaction primitive applied at ingress and egress, versioned, and tested like any other dependency.

Tools that constrain decisions more than they accelerate them

Tooling choices should follow the shape of your constraints. If your top risk is data leakage, invest in a policy engine that runs in the same process boundary as your inference calls. If your risk is cost volatility, emphasize routing, caching, and adaptive context tools over more advanced model features.

Vector storage is not interchangeable. Some options optimize recall; others optimize write speed or memory footprint. The decision binds you to an index maintenance strategy: continuous ingestion with compaction versus scheduled rebuilds with windowed freshness. That choice dictates how quickly you can honor deletions or schema shifts.

Orchestration frameworks are useful until they hide failure modes. A system that auto-retries model calls without surfacing the partial work will inflate cost and bury signals you need during incidents. Prefer tools that expose idempotency hooks and make compensation explicit.

Observability stacks that treat prompts as strings rather than first-class objects will age poorly. You need to version prompts, track parameterization, and correlate to cohorts and model versions. If your tool can’t show these on the same timeline, you’ll answer every question with “it depends.”

Where seemingly simple ai integration moves create new liabilities

Case: adding retrieval to improve answers. It works, then a deletion request arrives. The index rebuild lags behind, and the system serves content that should be gone. Legal gets involved. The fix isn’t more retrieval; it’s a deletion-aware pipeline that can surgically invalidate segments and propagate tombstones through caches.

Case: switching models to save cost. The smaller model keeps P50 under budget, but long-tail queries degrade. Support volume rises for a specific market where language nuance matters. The router needs to learn from outcomes, not guesses. Without feedback grounded in user or business impact, you’ll ping-pong between models weekly.

Case: “harmless” internal usage of production data for prompt examples. The examples leak into logs that sync to analytics storage without redaction. Months later, audit asks for proof of masking at capture, not at query time. Retrofitting pipeline-level redaction is harder than doing it at the perimeter from day one.

Case: aggressive client-side caching to mask latency. A thank-you page shows stale AI-generated content that includes outdated pricing. The fix isn’t only cache TTL; it’s tagging content with a lineage stamp so you can invalidate by origin change, not just time.

Trade-offs that surprise newcomers vs. patterns veterans already price in

Decision PointNewcomer ConsequenceExperienced ConsequencePolicy placementPolicies live in docs; engineers implement ad hoc; gaps appear under loadPolicies compiled into runtime; single redaction primitive; fewer path-specific hacksIndex refreshNightly rebuilds; stale answers and deletion lagIncremental updates; tombstones propagate; predictable freshness windowsModel routingManual switches; quality whiplash and blame gamesBudget-aware router with cohort outcomes; measured downgradesObservabilityToken counts and latency only; blind to prompt/data driftVersioned prompts, datasets, and policy decisions on a single timelineError handlingRetry storms and spiky billsIdempotent steps and durable compensations

Failure modes worth planning for before they pick you

Silent model drift causing policy bypass. A slightly different output format slips past a brittle checker. Fix: schema-aware validators and tests that fail closed with human escalation for ambiguous cases.

Cost runaway due to cache key explosion. Prompt personalization and context tagging produce unique keys. Fix: canonicalize prompts and cap context permutations per tenant, with backpressure that degrades gracefully.

Data revocation that never converges. Legal deletion lands, but derived artifacts persist. Fix: derivation maps and purge workflows that treat embeddings and caches as first-class citizens, not side effects.

Vendor outage masking. Failover kicks in but uses a different model that breaks downstream assumptions. Fix: contract tests against failover models and a routing layer that records intent and outcome.

How this unfolds when teams grow and the blast radius expands

As ownership fragments, the incentives drift. Product wants faster shipping, security wants fewer exceptions, platform wants fewer bespoke lanes. The stack absorbs this stress when each concern has a concrete interface: policies as code, data as contracts, routing as a service with explainable decisions.

The leadership choice is whether to centralize governance or push it to edges. Centralizing avoids drift but becomes a bottleneck. Federating speeds delivery but invites inconsistency. The middle path is to centralize the primitives—policy engine, identity, lineage—while letting teams compose them locally with guardrails.

Questions teams ask when the first fire drill happens

How do we roll back a bad model change without losing the last week of prompt tuning? Keep model versioning and prompt versioning independent, and route by config so you can pin either dimension.

What’s the cheapest way to add safety checks without blowing latency? Co-locate lightweight checks at ingress and heavier ones post-response, then cache validated outputs when possible.

Do we need a vector index for everything? No. If your data has stable keys and low recall requirements, deterministic lookups plus templated prompts beat retrieval complexity.

Who owns deletions end-to-end? The team that owns user trust. Centralize the deletion API, decentralize the execution with a ledger that proves completion across derived stores.

How do we test policy paths? Treat policies like code: unit tests on rules, contract tests on datasets, and canary traffic that exercises edge cases continuously.

Operating posture that contains risk without freezing delivery

Set an explicit error budget not just for uptime but for wrongness by surface. Some surfaces tolerate approximation; others (billing, legal) do not. Route accordingly.

Keep a quarantine lane. When something looks risky—odd input, suspicious output—shunt to a slower but safer path with enhanced checks and optional human review. This avoids all-or-nothing releases.

Prefer reversible infrastructure over cleverness. Use config-driven routing, per-tenant feature flags, and data contracts you can evolve with deprecation windows. You will need to undo things under pressure.

Accountability drifts from model cleverness to system discipline

Given how things behave today, this is what quietly changes next: the center of gravity moves from picking models to governing flows, from chasing quality in isolation to measuring outcomes tied to policy, and from hero debugging to systems that explain themselves.

scratch prototypes -> policy-aware runtimes -> budgeted routers -> lineage-first data -> auditable outcomes