When AI platforms move from demos to revenue-bearing traffic, retrieval times stop being interesting and start being contractual. Keeping p95 below 200 ms is not a tuning exercise; it’s a series of architectural decisions that show up as cost, operational risk, and uncomfortable constraints on how and when you ship changes.

Executive pressure: p95 budgets force architecture to give up convenience

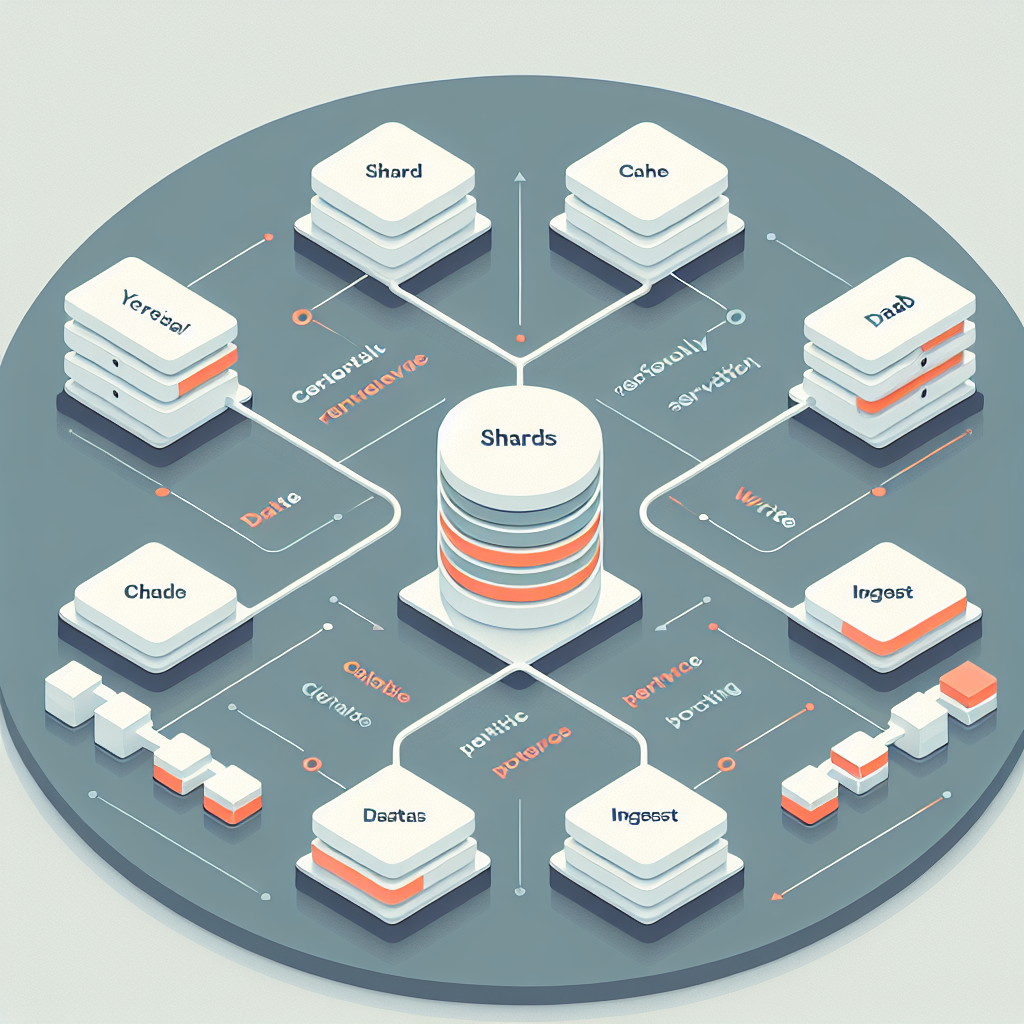

In production, the latency budget is carved up by network hops, embedding lookups, vector search, ranking, and the language model call. If retrieval slips, everything downstream fights for oxygen. Sharding, async ingestion, and caching aren’t features; they are the guardrails that keep the system from collapsing when usage spikes or data shifts.

The hard part isn’t making it fast once. It’s keeping it fast while indexes rebuild, tenants onboard, and embeddings evolve. The brittleness shows up in tail latency—p95 and p99—that punishes every missed cache, every shard hotspot, and every noisy neighbor.

Sharding splits the pain. Async ingestion keeps writers off the read path. Retrieval caching makes the common case cheap. Together they buy headroom. None are free: write amplification, invalidation complexity, and operational overhead will tax the team that owns the platform.

These costs are unavoidable because RAG couples search performance with model behavior. If retrieval drifts, answers degrade. If the platform can’t protect p95, product teams respond with bigger models and longer prompts, compounding costs and latency. The only sustainable move is engineering the retrieval tier like a core capability.

Introduction: latency budgets break first under live traffic

The moment real customers lean on a RAG feature, incidents don’t read like lab reports. They read like pagers going off: a launch doubles queries at lunchtime, index rebuilds overlap with batch ingestion, and the cache goes cold after an ill-timed deploy. The pressure is familiar to anyone who has operated AI platforms: slack vanishes, on-call fatigue rises, and tail latency becomes the only number that matters.

The topic surfaced as a requirement after a release where p50 looked fine in staging, but p95 exploded in production. The combination of unsharded vector search, synchronous ingestion, and naive caching turned retrieval into a bottleneck. That’s how achieving p95 < 200 ms with vector index sharding, async ingestion, and retrieval caching moved from an idea to a priority. The decision paths here are shaped by breakage: they reflect what failed, what slowed down, and what had to be rethought.

Where the latency budget collapses under realistic load

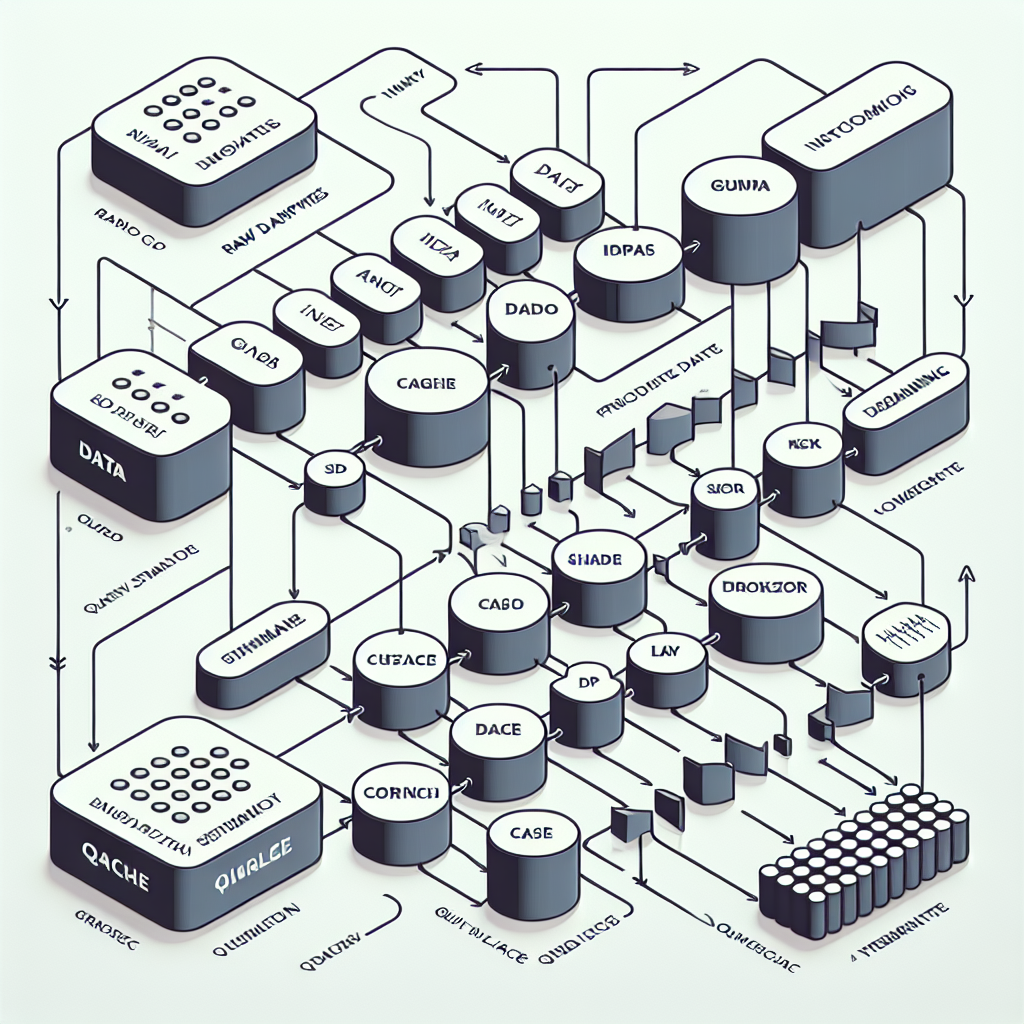

Production RAG is not one system; it’s a set of subsystems that interfere with each other. Retrieval time looks acceptable until documents churn, tenants multiply, and hot keys dominate traffic. Sharding, async writers, and caching are chosen because they contain this interference. They also raise new failure modes you wouldn’t meet in a demo.

Constraints show up as boundaries: the index format limits how you shard; the memory model dictates how much caching you can keep resident; the queue depth caps ingestion concurrency before you drown the read path. Each boundary has a failure mode. GC pauses on shard nodes surface as p99 spikes. Cache warm-up after deploy drains CPU exactly when traffic peaks. Embedding drift breaks cache locality and amplifies cache miss penalties.

Shard to isolate heat, then deal with unevenness

Sharding the vector index lets you isolate hotspots and scale horizontally, but it pushes you into skew management. One shard gets hit by a viral document or a popular category and tail latency blows up. The fix is usually over-sharding and rebalancing with consistent hashing, then pinning ultra-hot partitions to faster storage or memory, accepting cost as the price of stability.
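As a rough illustration of over-sharding with consistent hashing, the sketch below maps many logical partitions onto a few physical nodes through virtual nodes on a hash ring. The class name `ShardRing`, the node names, and the default of 64 virtual nodes are assumptions made for this example, not part of any particular vector store.

```python
import bisect
import hashlib


def _hash(key: str) -> int:
    """Stable 64-bit hash so placement does not change between processes."""
    return int.from_bytes(hashlib.sha256(key.encode("utf-8")).digest()[:8], "big")


class ShardRing:
    """Consistent-hash ring: many logical partitions, few physical nodes."""

    def __init__(self, nodes, vnodes_per_node=64):
        self._vnodes = vnodes_per_node
        self._ring = []  # sorted list of (hash, node) entries
        for node in nodes:
            self.add_node(node)

    def add_node(self, node: str) -> None:
        # Each node owns several points on the ring to smooth out skew.
        for i in range(self._vnodes):
            bisect.insort(self._ring, (_hash(f"{node}#{i}"), node))

    def remove_node(self, node: str) -> None:
        self._ring = [(h, n) for h, n in self._ring if n != node]

    def node_for(self, partition_key: str) -> str:
        if not self._ring:
            raise LookupError("ring is empty")
        # First ring entry at or after the key's hash, wrapping at the end.
        idx = bisect.bisect_left(self._ring, (_hash(partition_key),))
        return self._ring[idx % len(self._ring)][1]
```

When a node is added, only the partitions that land on its new ring points move, which is what keeps rebalancing cheaper than reshuffling every partition. Pinning ultra-hot partitions to faster hardware would sit on top of this as an explicit override table, which the sketch leaves out.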

Keep writers off the read path or accept tail risk

Async ingestion breaks the coupling between ingest storms and read latency. It doesn’t solve correctness. Backpressure policies decide whether you drop, delay, or degrade. The queue becomes a control surface: tune retry windows too aggressively and you thrash shards; too conservatively and freshness evaporates. The choice isn’t technical purity—it’s picking which consequence your business tolerates.
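The three backpressure choices can be made concrete with a bounded queue whose behavior when full is an explicit policy. This is a minimal sketch: `IngestQueue`, `Policy`, and `Job` are hypothetical names, and "degrade" is interpreted here as evicting the oldest low-priority job to admit a high-priority one, which is only one of several reasonable readings.

```python
from collections import deque
from dataclasses import dataclass
from enum import Enum


class Policy(Enum):
    DROP = "drop"        # discard the new job when the queue is full
    DELAY = "delay"      # tell the producer to hold the job and retry later
    DEGRADE = "degrade"  # evict the oldest low-priority job for a high-priority one


@dataclass
class Job:
    doc_id: str
    high_priority: bool = False


class IngestQueue:
    def __init__(self, max_depth: int, policy: Policy):
        self._jobs = deque()
        self._max_depth = max_depth
        self._policy = policy

    def offer(self, job: Job) -> str:
        """Return 'accepted', 'retry', or 'dropped'."""
        if len(self._jobs) < self._max_depth:
            self._jobs.append(job)
            return "accepted"
        if self._policy is Policy.DELAY:
            return "retry"
        if self._policy is Policy.DEGRADE and job.high_priority:
            for i, queued in enumerate(self._jobs):
                if not queued.high_priority:
                    del self._jobs[i]  # sacrifice freshness of a low-priority doc
                    self._jobs.append(job)
                    return "accepted"
        return "dropped"

    def drain(self) -> list:
        """Hand all queued jobs to the consumer in arrival order."""
        jobs = list(self._jobs)
        self._jobs.clear()
        return jobs
```

The point of returning an explicit outcome is that the producer, not the queue, decides what a rejected document means for the business: a lost update, a delayed one, or a lower-priority one pushed out.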

Cache the retrieval, not only the answer

Answer-level caching misses the point when prompts vary. Caching retrieval results—top-k candidates keyed by a normalized query fingerprint—protects the most expensive part of the pipeline. You pay with invalidation complexity. Document updates force selective cache busts; embedding changes invalidate broadly. You’ll end up with multi-tier caches: short TTL on hot queries, longer TTL on stable knowledge, and a fallback to index for edge cases.
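A minimal sketch of that idea follows: a normalized fingerprint collapses trivial query variations, a per-entry TTL lets hot and stable queries age differently, and a document update busts only the entries that contain it. The normalization rules (Unicode NFKC, case folding, punctuation stripping) and the names `query_fingerprint` and `RetrievalCache` are assumptions chosen for illustration.

```python
import hashlib
import re
import time
import unicodedata


def query_fingerprint(query: str) -> str:
    """Collapse case, punctuation, and whitespace differences into one key."""
    text = unicodedata.normalize("NFKC", query).casefold()
    text = re.sub(r"[^\w\s]", " ", text)
    return hashlib.sha256(" ".join(text.split()).encode("utf-8")).hexdigest()


class RetrievalCache:
    """Caches top-k document ids per query fingerprint with per-entry TTL."""

    def __init__(self, clock=time.monotonic):
        self._entries = {}  # fingerprint -> (expires_at, doc_ids)
        self._clock = clock

    def put(self, query: str, doc_ids, ttl_s: float) -> None:
        key = query_fingerprint(query)
        self._entries[key] = (self._clock() + ttl_s, list(doc_ids))

    def get(self, query: str):
        key = query_fingerprint(query)
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, doc_ids = entry
        if self._clock() >= expires_at:
            del self._entries[key]  # expired: caller falls back to the index
            return None
        return doc_ids

    def invalidate_doc(self, doc_id: str) -> int:
        """Selective bust: drop only entries whose candidates include doc_id."""
        stale = [k for k, (_, ids) in self._entries.items() if doc_id in ids]
        for key in stale:
            del self._entries[key]
        return len(stale)
```

Token order is deliberately preserved in the fingerprint, since reordering words can change what a query means. A production cache would also bound its size and keep a reverse index from document to entries so that selective busting does not scan every key.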

Sequencing under pressure: where handoffs introduce tail latency

The pipeline looks neat in diagrams and gets messy in operations. Documents arrive unevenly, embed jobs pile up, indexes rebuild halfway, caches warm slowly, and queries don’t wait. The sequencing matters because every handoff can either buffer or amplify trouble.

Document arrival vs. embed throughput

Ingestion spikes don’t care about your embed throughput. Async queues buffer the shock, but they turn latency into a backlog. If your queue policy prioritizes recent documents, you’ll protect freshness at the cost of starving older jobs. If you prioritize fairness, the platform drifts stale. Teams revisit this weekly because product priorities change faster than capacity planning.
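One way to sit between "recent first" and "strict fairness" is earliest-deadline-first scheduling: fresh documents get a short deadline, backfill jobs a long one, and the queue always serves whichever deadline is closest. The sketch below assumes two job classes and hypothetical wait budgets of 10 and 300 seconds; the class name `DeadlineQueue` is illustrative.

```python
import heapq
import itertools


class DeadlineQueue:
    """Earliest-deadline-first queue for embed jobs.

    Fresh documents are served quickly, but a backfill job can never be
    starved for longer than its own wait budget allows.
    """

    def __init__(self, fresh_wait_s: float = 10.0, backfill_wait_s: float = 300.0):
        self._fresh_wait_s = fresh_wait_s
        self._backfill_wait_s = backfill_wait_s
        self._heap = []
        self._seq = itertools.count()  # tie-breaker keeps ordering stable

    def push(self, doc_id: str, arrived_at: float, fresh: bool) -> None:
        wait = self._fresh_wait_s if fresh else self._backfill_wait_s
        heapq.heappush(self._heap, (arrived_at + wait, next(self._seq), doc_id))

    def pop(self) -> str:
        """Return the doc id whose deadline is closest."""
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)
```

Changing product priorities then becomes a change to two numbers rather than a rewrite of the queue policy, which fits a decision that gets revisited weekly.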

Index build windows collide with launch windows

Rebuilding or expanding shards can’t align perfectly with traffic patterns. Maintenance windows help until an external event—email campaign, outage elsewhere—moves traffic into your window. Live-reindex strategies reduce blast radius but invite consistency bugs. The consequence is operational choreography: freeze risky operations near known spikes and plan rollouts that tolerate partial index states.

Cache warm-up is not a switch, it’s a negotiation

Retrieval caches don’t warm themselves. The naive approach waits for organic traffic; p95 suffers until enough hits accumulate. Prewarming with replayed queries helps but can pollute caches with yesterday’s distribution. Teams add guardrails: sample-based prewarm, adaptive TTL based on hit rate, and eviction policies that resist a single client’s burst. This is where platform and product teams argue—not about correctness, but about whose latency budget gets hurt.
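Sample-based prewarming with a per-client cap might look like the following sketch. It assumes the query log is available as `(client_id, query)` pairs; the function name `prewarm_candidates` and all parameters are hypothetical, and the sampling is seeded only so that runs are reproducible.

```python
import random
from collections import Counter


def prewarm_candidates(query_log, sample_rate, max_queries, per_client_cap, seed=0):
    """Pick queries worth replaying into a cold cache.

    query_log: iterable of (client_id, query) pairs from recent traffic.
    sample_rate: fraction of log entries to consider (0.0 to 1.0).
    per_client_cap: max sampled entries any one client may contribute,
        so a single client's burst cannot dominate the prewarm set.
    """
    rng = random.Random(seed)
    query_counts = Counter()
    client_counts = Counter()
    for client_id, query in query_log:
        if rng.random() >= sample_rate:
            continue  # not in the sample
        if client_counts[client_id] >= per_client_cap:
            continue  # this client has already had its say
        client_counts[client_id] += 1
        query_counts[query] += 1
    return [query for query, _ in query_counts.most_common(max_queries)]
```

Sampling a fraction of the log keeps the replay cheap, and the cap is a blunt guard against yesterday's noisiest client defining today's cache contents.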

Multi-tenancy multiplies the surface area

Shared AI platforms host multiple tenants, each with different query shapes and data churn rates. Sharding by tenant seems clean until small tenants subsidize large ones on shared nodes. Resource isolation through quotas and separate shard pools keeps p95 sane, but raises per-tenant cost. Someone pays: either in dollars or in degraded UX.
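Quota-based isolation is often implemented with a token bucket per tenant. The sketch below is one such arrangement under assumed limits; `TokenBucket`, `TenantQuotas`, and the `(rate, burst)` tuple format are names invented for this example.

```python
import time


class TokenBucket:
    """Allows `rate_per_s` sustained requests with bursts up to `burst`."""

    def __init__(self, rate_per_s: float, burst: int, clock=time.monotonic):
        self._rate = rate_per_s
        self._capacity = float(burst)
        self._tokens = float(burst)
        self._clock = clock
        self._last = clock()

    def try_acquire(self, cost: float = 1.0) -> bool:
        now = self._clock()
        # Refill in proportion to elapsed time, never above capacity.
        self._tokens = min(self._capacity, self._tokens + (now - self._last) * self._rate)
        self._last = now
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False


class TenantQuotas:
    """One bucket per tenant so a noisy tenant exhausts only its own budget."""

    def __init__(self, limits, clock=time.monotonic):
        # limits: tenant_id -> (rate_per_s, burst)
        self._buckets = {
            tenant: TokenBucket(rate, burst, clock)
            for tenant, (rate, burst) in limits.items()
        }

    def admit(self, tenant_id: str) -> bool:
        bucket = self._buckets.get(tenant_id)
        return bucket is not None and bucket.try_acquire()
```

Rejected requests still need a product decision: queue them, serve a cached answer, or return an error. The quota only decides who absorbs the overload.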

Tools and technologies when the constraint dictates the tool

Tool choices follow constraints, not fashion. If you need tight tail latency, index structures with predictable memory access patterns beat clever features. Hierarchical graphs and inverted lists behave differently under skew; pick the one that lets you cap worst-case traversal steps on your hardware. That decision appears again when you choose shard counts: too few and hotspots form; too many and you drown in cross-shard coordination.

Async ingestion depends on the queue’s failure semantics. Exactly-once sounds nice until throughput collapses; at-least-once with idempotent writes fits better when you own both embed and index code paths. Storage tiers matter because caching lives or dies by memory pressure. Keeping hot retrieval sets in RAM is the obvious move; pinning to fast disk is the compromise when cost bites. Network distance between compute and index nodes quietly becomes the dominant variable if you split them across zones—latency budgets don’t forgive cross-zone round trips.
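To show why at-least-once delivery is tolerable when writes are idempotent, here is a minimal sketch of an index writer that treats a redelivered message as a no-op. The in-memory `IdempotentIndexWriter`, its fields, and the `embed` callable are stand-ins for whatever embed and index code paths you own.

```python
import hashlib


class IdempotentIndexWriter:
    """Applies an upsert only if content or embedding version changed."""

    def __init__(self):
        # doc_id -> (content_hash, embedding_version, vector)
        self._rows = {}
        self.writes = 0

    def upsert(self, doc_id: str, text: str, embedding_version: str, embed) -> bool:
        """Return True if the index changed, False for a duplicate delivery."""
        content_hash = hashlib.sha256(text.encode("utf-8")).hexdigest()
        current = self._rows.get(doc_id)
        if current is not None and current[:2] == (content_hash, embedding_version):
            return False  # same content, same model: skip the embed call entirely
        self._rows[doc_id] = (content_hash, embedding_version, embed(text))
        self.writes += 1
        return True
```

The duplicate check runs before the embed call, so redelivery costs a hash rather than a model invocation. This sketch does not handle out-of-order delivery; guarding against an older message overwriting a newer one would need a per-document sequence number or timestamp.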

For retrieval caching, the tool is less important than the key design. Normalize query fingerprints to collapse trivial variations. Include a version for embeddings so you can invalidate by batch without nuking everything. If your cache can’t expose hit/miss distributions and age, you’ll fly blind. That telemetry drives decisions more than any advertised feature.
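The key design and telemetry described above can be sketched as a cache keyed by `(embedding_version, fingerprint)` that records hits, misses, and the age of each entry it serves. `VersionedCache`, `CacheStats`, and the version strings are hypothetical; the fingerprint is assumed to be computed upstream.

```python
import time
from dataclasses import dataclass, field


@dataclass
class CacheStats:
    hits: int = 0
    misses: int = 0
    hit_ages_s: list = field(default_factory=list)  # age of each served entry

    @property
    def hit_rate(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


class VersionedCache:
    """Retrieval cache whose keys carry the embedding version."""

    def __init__(self, embedding_version: str, clock=time.monotonic):
        self.embedding_version = embedding_version
        self.stats = CacheStats()
        self._entries = {}  # (version, fingerprint) -> (stored_at, doc_ids)
        self._clock = clock

    def put(self, fingerprint: str, doc_ids) -> None:
        key = (self.embedding_version, fingerprint)
        self._entries[key] = (self._clock(), list(doc_ids))

    def get(self, fingerprint: str):
        entry = self._entries.get((self.embedding_version, fingerprint))
        if entry is None:
            self.stats.misses += 1
            return None
        stored_at, doc_ids = entry
        self.stats.hits += 1
        self.stats.hit_ages_s.append(self._clock() - stored_at)
        return doc_ids

    def roll_version(self, new_version: str) -> int:
        """Switch embedding version and purge entries from other versions."""
        self.embedding_version = new_version
        stale = [key for key in self._entries if key[0] != new_version]
        for key in stale:
            del self._entries[key]
        return len(stale)
```

Because the version is part of the key, old entries stop being served the moment the version changes, even before the purge runs. In a multi-batch rollout you would keep two versions live side by side rather than purging immediately.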

Examples and applications where the trade-offs surface visibly

Support search under bursty incident traffic

A support portal using RAG sees traffic spike during outages. Without sharding, the incident keyword floods a single index node and p95 doubles. Splitting the index by category and recency tames the hotspot, but retrieval relevance drops for long-tail queries during the incident. Cache the incident term aggressively with short TTL and accept slightly stale content for the duration. After the burst, clean up selectively to avoid cache pollution.

E-commerce recommendations with seasonal skew

Holiday campaigns redirect attention to narrow product ranges. Async ingestion keeps new catalogs flowing, but embedding drift from updated descriptions invalidates large swaths of the retrieval cache. The team tightens TTLs on affected categories and broadens shard pools temporarily. Costs rise because hot shards run higher-spec nodes. Business accepts the bill over degraded recommendations; after the season, shards are rebalanced and TTLs lengthened.

Developer assistant in CI with high concurrency

A code assistant wires RAG into CI to answer build questions. Concurrency spikes during nightly runs, and retrieval hits shared shards. The fix is coarse-grained: isolate CI traffic into dedicated shard replicas and pin a small cache tier near the CI runners. Freshness suffers for a subset of queries. Engineers tolerate slightly dated answers in exchange for predictable job times. This outcome is not clean but it’s operationally stable.

Tables and comparisons where experience changes the moves

| Area | Newcomer approach | Experienced approach | Impact on p95 |
| --- | --- | --- | --- |
| Index sharding | Shard evenly by size | Shard by heat and recency, over-shard then rebalance | Reduces hotspots, exposes rebalancing overhead |
| Async ingestion | Single queue, FIFO | Priority queues with backpressure and idempotent writes | Protects retrieval during spikes, increases write latency |
| Retrieval caching | Cache full answers | Cache top-k candidates with embedding-versioned keys | Cuts expensive search, raises invalidation complexity |
| Latency measurement | Track p50 | Track p95/p99 with per-shard breakdown | Surfaces tail risk, drives shard isolation decisions |
| Multi-tenancy | Shared pool | Quota-based isolation, separate hot pools | Protects SLOs, increases cost per tenant |
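The latency measurement row is easy to illustrate: a per-shard breakdown shows a tail that p50 hides. The sketch below uses the nearest-rank percentile definition and assumes latency samples arrive as `(shard, milliseconds)` pairs; the function names are illustrative, and real systems usually rely on histograms rather than sorting raw samples.

```python
import math
from collections import defaultdict


def percentile(samples, pct: int):
    """Nearest-rank percentile: smallest value with at least pct% at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def per_shard_tail(records):
    """Group (shard, latency_ms) records and report p50, p95, p99 per shard."""
    by_shard = defaultdict(list)
    for shard, latency_ms in records:
        by_shard[shard].append(latency_ms)
    return {
        shard: {f"p{p}": percentile(values, p) for p in (50, 95, 99)}
        for shard, values in by_shard.items()
    }
```

A shard where 90% of requests take 10 ms and 10% take 400 ms reports a healthy p50 and a p95 that is already over budget, which is the case a fleet-wide median never surfaces.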

FAQ: the doubts that appear during adoption

What if one shard still gets all the traffic? Over-shard and use a routing layer that tracks recent hit rates. When a shard heats up, route to replicas or split the partition. Accept temporary cost inflation to keep p95 stable.
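A routing layer of that kind can be sketched with a sliding window of recent hits per shard: below a threshold, queries go to the primary; above it, they rotate across replicas. `HeatAwareRouter`, the threshold, and the node names are assumptions for this example, and splitting a partition is left out.

```python
import itertools
from collections import deque


class HeatAwareRouter:
    """Routes to the primary until a shard runs hot, then spreads over replicas."""

    def __init__(self, replicas, hot_threshold: int, window_s: float):
        # replicas: shard -> [primary, replica, ...]
        self._replicas = replicas
        self._hot_threshold = hot_threshold
        self._window_s = window_s
        self._hits = {shard: deque() for shard in replicas}
        self._round_robin = {
            shard: itertools.cycle(nodes) for shard, nodes in replicas.items()
        }

    def route(self, shard: str, now: float) -> str:
        hits = self._hits[shard]
        hits.append(now)
        # Forget hits that have fallen out of the sliding window.
        while hits and hits[0] <= now - self._window_s:
            hits.popleft()
        if len(hits) > self._hot_threshold:
            return next(self._round_robin[shard])
        return self._replicas[shard][0]
```

Passing `now` in explicitly keeps the router easy to test; in service code it would come from a monotonic clock. Replicas that only receive traffic during spikes start with cold caches, so the first seconds of a spread can still look bad at p95.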

How do we avoid stale or wrong cached retrievals? Version cache keys by embedding batch and invalidate on document update signals. Pair short TTLs on hot queries with longer TTLs on stable domains. Prefer selective busting over global flushes.

Do we rebuild embeddings synchronously for correctness? Not under load. Embed asynchronously with idempotent writes and a freshness SLA. If correctness is critical for a subset, mark those documents for prioritized embed and index refresh.

Where does cost spike first when chasing p95? Memory for caches and compute for hot shards. Track cache hit-rate vs. node cost and shift capacity accordingly. Tail latency reduction is rarely cheap; plan budgets around predictable hotspots.

How do we handle tenants with drastically different query shapes? Isolate them. Separate shard pools and distinct cache tiers. Shared pools look efficient until one tenant’s traffic pattern punishes everyone else.

Ownership shifts as retrieval becomes a platform SLO

Given how things behave today, platform teams end up owning the retrieval SLO the way they own databases. Product teams can’t pull p95 under 200 ms by prompt tweaks alone. The responsibility shifts toward capacity planning, shard policy, and cache governance as first-class levers, with product accepting controlled staleness when needed.

Index build → Query heat concentration → Caching governance → Platform ownership