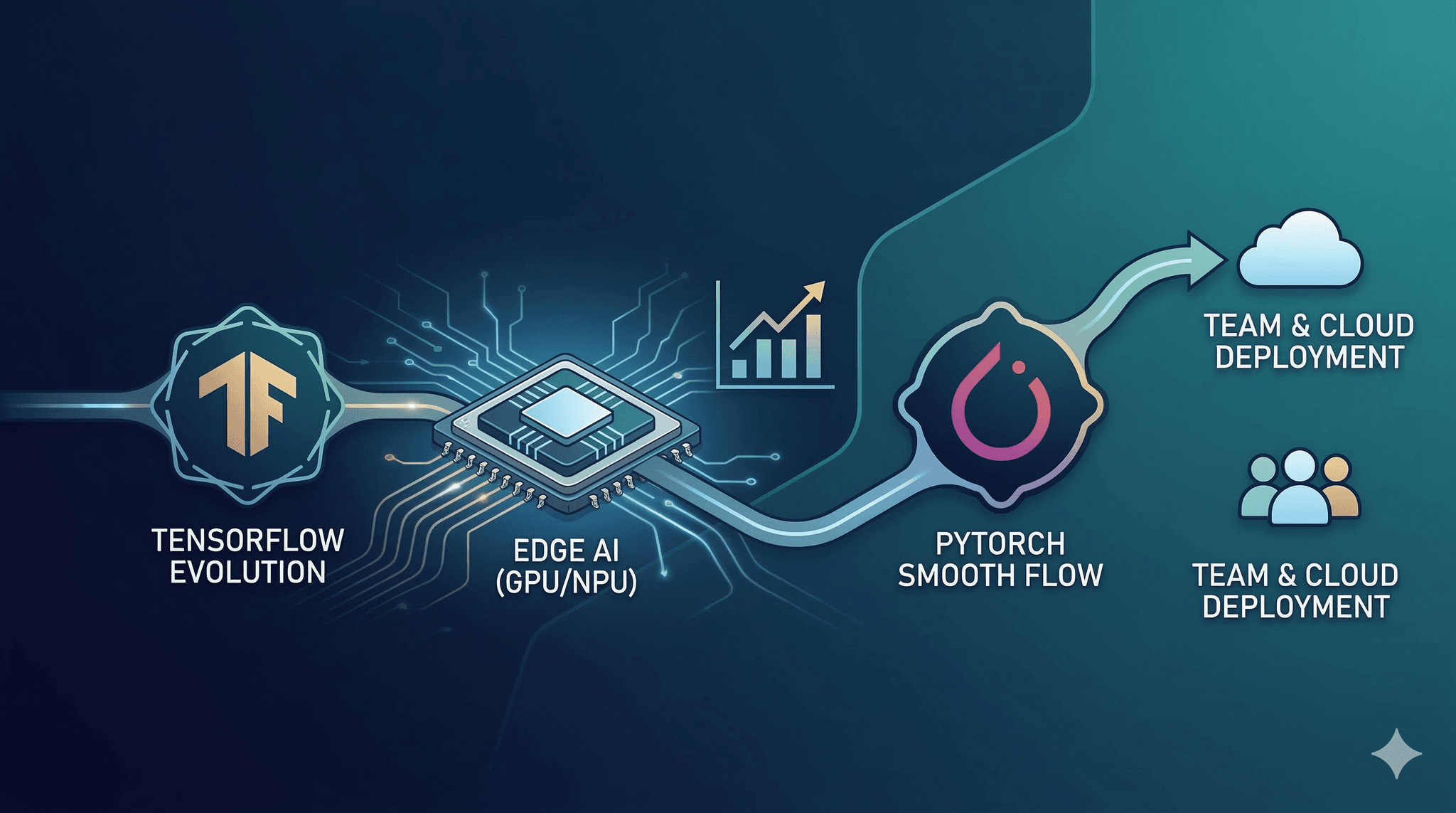

TensorFlow 2.21 touches the same pressure points many teams feel right now. Faster GPU paths, practical NPU usage, and smoother PyTorch edge deployment are converging into one operational question: where do you place compute when constraints are real and changing?

Executive Summary

Teams balancing training, inference, and edge releases want more speed without brittle pipelines. TensorFlow 2.21 lands with upgrades that favor GPU throughput, better NPU delegation, and cleaner handoffs into lean edge runtimes. In parallel, PyTorch edge packaging continues to reduce shipping friction.

You will leave with a mental model for when these upgrades help, where they crack under pressure, and how to set guardrails so gains survive scale.

Where faster GPU paths matter, and when they become memory-bound

How NPU acceleration behaves with partial op coverage and fallbacks

Why PyTorch edge upgrades change packaging, not just latency

What to instrument so improvements hold up outside the lab

Introduction

A product deadline is seven days out. The last build missed target latency by just enough to trigger a battery drain flag on certain devices. You have one mid-range GPU for validation, a small set of edge units, and no time for big rewrites.

This is where faster GPU performance, new NPU acceleration, and smoother PyTorch edge deployment cross paths with real constraints. TensorFlow 2.21 leans on tighter kernel scheduling and more stable delegate behavior. Edge runtimes for PyTorch trim packaging overhead and reduce surprises during cold start.

It’s trending because gains are no longer coming from one place. Training optimizations bleed into export. Edge delegates are getting smarter about falling back. Teams running mixed stacks need predictable handoffs, not rewrites.

It’s becoming necessary because the baseline moved. Devices ship with NPUs you can’t ignore. GPUs deliver more only if you protect memory headroom and avoid launch churn. And edge packaging must be small, predictable, and testable under shaky thermals.

Speed is only useful if it survives real workloads

The TensorFlow 2.21 improvements will look great on a stable bench. Real workloads are uneven, inputs vary, and devices heat up. The promises are real, but gains survive only if you control memory, precision, and fallbacks.

Compute routing under changing constraints

GPU speedups often hinge on launch patterns. If your model throws tiny ops in sequence, launch overhead can dominate. If 2.21 reduces that overhead, you still need to batch small ops or fuse paths in your graph, or the headroom disappears when input shapes drift.

NPU acceleration lives at the edge of op coverage. It’s fast when all layers map cleanly. One unsupported op can push you back to a slower path or split execution between engines. That split has a cost. Every handoff hits memory and sometimes thermal edges.
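
To make the cost of split execution concrete, here is a minimal sketch that counts how many engine handoffs a partially supported graph would incur. It assumes a linear op sequence, and the op names and the `supported` set are hypothetical. Real delegates report coverage in their own way, so treat this as a mental model rather than any runtime's API.

```python
from itertools import groupby


def partition_by_support(ops, supported):
    """Group a linear op sequence into contiguous runs per engine.

    Returns a list of (engine, [ops]) tuples, where engine is "npu" for
    ops in the supported set and "cpu" for everything else.
    """
    keyed = groupby(ops, key=lambda op: "npu" if op in supported else "cpu")
    return [(engine, list(run)) for engine, run in keyed]


def count_handoffs(ops, supported):
    """Number of engine switches; each one implies a tensor copy or sync."""
    return max(len(partition_by_support(ops, supported)) - 1, 0)


# Hypothetical op list and coverage set, for illustration only.
ops = ["conv", "relu", "conv", "custom_nms", "softmax"]
supported = {"conv", "relu", "softmax"}

segments = partition_by_support(ops, supported)
handoffs = count_handoffs(ops, supported)
print(segments)
print("handoffs:", handoffs)
```

In this made-up graph a single unsupported op in the middle produces three segments and two handoffs, which is why moving or replacing one layer can matter more than tuning any individual kernel.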

On edge deployments, steady-state behavior degrades into failure patterns. Thermals clamp throughput after a burst. Memory fragmentation creeps in after many inferences. A faster allocator in the core helps, but model size, tensor lifetimes, and precision control whether the allocator can do its job.

Failure patterns to expect:

Speed gains vanish on long runs due to allocator churn

NPU delegation works in tests but drops to CPU under skewed inputs

GPU acceleration wins at batch size N, loses at N+1 from memory spikes

Edge cold starts improve, but warm paths regress when logs stay on

None of this means you should avoid the upgrades. It means treating them as conditional wins. Build the conditions.

From notebook wins to edge deploys that hold up

Development to edge without undoing performance

Implementation rarely proceeds in a straight line. You’ll get one fast run locally, then a regression on a device that wasn’t in the first batch. The path through TensorFlow 2.21 and newer PyTorch edge packaging is workable if you isolate each change and measure in small steps.

Start by stabilizing precision. Mixed precision helps on GPU and sometimes on NPUs, but only if numerics settle across your inputs. Calibrate on a slice of real data, not just unit tests. If accuracy drifts, lock a few sensitive layers to higher precision. Small sacrifices here prevent chasing heisenbugs later.
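
One way to decide which layers to lock to higher precision is to rank them by the error they show on calibration data. The sketch below assumes you have already measured a per-layer error between the low-precision and reference runs. The layer names, error values, and threshold are hypothetical, and how you collect those errors depends on your framework.

```python
def layers_to_pin(per_layer_error, threshold, max_pinned=None):
    """Return layer names whose calibration error exceeds the threshold.

    Results are ordered worst first. max_pinned optionally caps how many
    layers are kept at higher precision.
    """
    offenders = sorted(
        (name for name, err in per_layer_error.items() if err > threshold),
        key=lambda name: per_layer_error[name],
        reverse=True,
    )
    return offenders if max_pinned is None else offenders[:max_pinned]


# Hypothetical per-layer errors measured on a slice of real data.
calibration_error = {
    "stem_conv": 0.002,
    "attn_softmax": 0.031,
    "layer_norm_7": 0.018,
    "head_logits": 0.044,
}

pinned = layers_to_pin(calibration_error, threshold=0.01)
print(pinned)
```

Capping the list with `max_pinned` is a design choice: it keeps the precision trade explicit, so you pin the few worst layers first and re-measure before pinning more.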

Next, route compute intentionally. For TensorFlow 2.21, check graph capture coverage and any new fused kernels along your hot path. If capture is partial, pin the rest to eager only where it’s unavoidable. Reducing context switches pays back more than squeezing one more percent out of a single op.

On the edge, prefer a single primary delegate with predictable fallback. Split execution is fine, but set guardrails. If an op falls back, you want it to fall back consistently, not only in rare shapes. That usually means tightening input shape ranges and avoiding dynamic layers that balloon memory.

Packaging matters as much as latency. PyTorch edge upgrades are making bundles smaller and operator sets clearer. Smaller builds reduce cold start jitter and leave more memory for your tensors. When you trim operators, your model must align with that set. Audit layers before packaging, not after an on-device crash.

At scale, everything amplifies. A small memory leak across thousands of inferences becomes a reboot loop. A rare delegate fallback turns into a sustained CPU path in hot weather. Instrumentation catches this faster than manual tests. Simple counters for device temperature, delegate usage, and max resident memory save days.
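
The counters mentioned above can be very small. This is a framework-agnostic sketch: the `RuntimeStats` class and its field names are hypothetical, and it assumes the caller already knows which engine ran and can read memory and temperature from the platform, which is device-specific and not shown here.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class RuntimeStats:
    """Per-process counters updated once per inference."""

    delegate_runs: Counter = field(default_factory=Counter)
    peak_memory_bytes: int = 0
    max_temp_c: float = float("-inf")

    def record(self, engine, memory_bytes, temp_c):
        """Count the engine used and keep running maxima."""
        self.delegate_runs[engine] += 1
        self.peak_memory_bytes = max(self.peak_memory_bytes, memory_bytes)
        self.max_temp_c = max(self.max_temp_c, temp_c)

    def fallback_ratio(self, primary="npu"):
        """Fraction of inferences that did not run on the primary engine."""
        total = sum(self.delegate_runs.values())
        if total == 0:
            return 0.0
        return 1.0 - self.delegate_runs[primary] / total


# Hypothetical readings from four inferences.
stats = RuntimeStats()
stats.record("npu", 100_000_000, 41.0)
stats.record("npu", 120_000_000, 44.5)
stats.record("cpu", 110_000_000, 52.0)
stats.record("npu", 105_000_000, 47.0)

print(stats.fallback_ratio(), stats.peak_memory_bytes, stats.max_temp_c)
```

Alerting on a rising fallback ratio alongside temperature is what turns "a rare delegate fallback" into something you see before it becomes a sustained CPU path.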

GPU improvements that matter when the input stream shifts

TensorFlow 2.21 points to cleaner execution on GPUs. You feel this when input shapes vary and batch sizes wobble. The scheduler’s ability to keep work on the device longer reduces host round trips. If your pipeline builds on that, you’ll see stability. If you repeatedly recompile graphs by accident, the benefits drain away.

Two practical moves:

Normalize batch boundaries so shapes stay inside a small set of profiles

Prewarm critical paths during service start to avoid first-hit stalls
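
Both moves can be sketched without any framework. The profile sizes, function names, and padding scheme below are assumptions for illustration. In a real service, `run_fn` would be your compiled model call and the dummy batch would match your actual input types.

```python
import bisect

# Hypothetical batch profiles; keep the set small so few graphs get compiled.
BATCH_PROFILES = (1, 4, 8, 16)


def pick_profile(batch_size, profiles=BATCH_PROFILES):
    """Return the smallest profile that fits batch_size."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    idx = bisect.bisect_left(profiles, batch_size)
    if idx == len(profiles):
        raise ValueError("batch exceeds largest profile; split it upstream")
    return profiles[idx]


def pad_batch(items, pad_item, profiles=BATCH_PROFILES):
    """Pad items up to the chosen profile; returns (padded, valid_count)."""
    target = pick_profile(len(items), profiles)
    padded = list(items) + [pad_item] * (target - len(items))
    return padded, len(items)


def prewarm(run_fn, make_dummy_batch, profiles=BATCH_PROFILES):
    """Run each profile once at startup so the first real request is not
    the one that pays for compilation or allocation."""
    for size in profiles:
        run_fn(make_dummy_batch(size))


# Stand-in for a model call: just records the batch sizes it saw.
seen = []
prewarm(lambda batch: seen.append(len(batch)), lambda n: [0] * n)

padded, valid = pad_batch([7, 7, 7, 7, 7], pad_item=0)
print(seen, len(padded), valid)
```

Returning `valid_count` alongside the padded batch lets the caller discard outputs for the padding rows, so padding changes the shape but not the results.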

Memory is still the ceiling. Faster kernels don’t help if activations blow past the budget. Track peak usage, not average. Clip sequences if your input distributions occasionally spike.
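
A short sketch shows why the average misleads and how a length cap follows from a budget. The memory samples are made up, and the cap assumes memory grows roughly linearly with sequence length at a hypothetical 2 MB per token. Measure your own model before relying on either number.

```python
def max_len_for_budget(budget_mb, mb_per_token):
    """Longest sequence that fits, assuming memory is linear in length."""
    return int(budget_mb // mb_per_token)


def clip_sequence(tokens, max_len):
    """Keep the first max_len tokens; shorter inputs pass through unchanged."""
    return tokens[:max_len]


# Hypothetical peak-memory samples (MB) from five requests.
samples_mb = [310, 295, 305, 910, 300]
budget_mb = 512

mean_mb = sum(samples_mb) / len(samples_mb)
peak_mb = max(samples_mb)
print(f"mean={mean_mb:.0f} MB, peak={peak_mb} MB, budget={budget_mb} MB")

# The mean sits under the budget while the peak does not, so alert on the peak.
over_budget = peak_mb > budget_mb

cap = max_len_for_budget(budget_mb, mb_per_token=2)  # assumed cost per token
clipped = clip_sequence(list(range(400)), cap)
print(cap, len(clipped))
```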

NPU acceleration that respects partial coverage

Edge NPUs are tempting because they offload heat and save battery. Delegates in 2.21-era stacks are better at mapping chunks of graphs and being explicit about what stays off the NPU. Plan for partial wins. If your model uses custom layers, you may get split execution. Make the split predictable.

Keep a clean fallback path. Test the entire model on CPU on the device. Then flip the delegate on and compare outputs against a tolerance. Mismatches often appear in post-processing layers. If discrepancies are acceptable, bake that tolerance into your QA and move on. If they’re not, lock those layers off the delegate.
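
The comparison step can be a few lines. This sketch assumes both runs produce flat lists of floats. The tolerance values and sample outputs are hypothetical and should come from your own accuracy requirements.

```python
import math


def compare_outputs(reference, candidate, rel_tol=1e-3, abs_tol=1e-5):
    """Return the indices where candidate deviates beyond tolerance."""
    if len(reference) != len(candidate):
        raise ValueError("output lengths differ")
    return [
        i
        for i, (ref, cand) in enumerate(zip(reference, candidate))
        if not math.isclose(ref, cand, rel_tol=rel_tol, abs_tol=abs_tol)
    ]


# Hypothetical outputs from the CPU reference run and the delegate run.
cpu_out = [0.1200, 0.8700, 0.0100]
npu_out = [0.1201, 0.8650, 0.0100]

mismatches = compare_outputs(cpu_out, npu_out)
print(mismatches)
```

Reporting indices rather than a single pass or fail makes it easier to trace a mismatch back to the layer that produced it, which is where the decision to lock a layer off the delegate gets made.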

PyTorch edge packaging that avoids slow, hard-to-debug starts

The PyTorch edge deployment upgrades mainly reduce uncertainty. Operator registries are clearer. Build outputs are leaner. The payoff is faster cold starts and fewer surprises when inputs trigger uncommon code paths.

Before leaning on smaller builds, confirm your model only uses the kept operators. Run a dry build that lists unresolved ops. Replace or re-implement anything that pulls in large optional stacks. The goal is a boring startup sequence with predictable memory and no background JIT on constrained devices.
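
At its core, the audit is a set difference. The sketch below assumes you can export the list of operators your model uses and the list your trimmed build keeps. The operator names are hypothetical, and how you obtain each list depends on your toolchain.

```python
def unresolved_ops(model_ops, kept_ops):
    """Operators the model needs that the trimmed build does not include."""
    return sorted(set(model_ops) - set(kept_ops))


def unused_ops(model_ops, kept_ops):
    """Operators kept in the build that the model never calls."""
    return sorted(set(kept_ops) - set(model_ops))


# Hypothetical operator lists, for illustration only.
model_ops = ["conv2d", "relu", "conv2d", "grid_sample", "softmax"]
kept_ops = {"conv2d", "relu", "softmax", "linear"}

missing = unresolved_ops(model_ops, kept_ops)
extra = unused_ops(model_ops, kept_ops)
print("missing:", missing)
print("can trim:", extra)
```

Running this check in CI before packaging turns an on-device crash into a failed build, and the `unused_ops` list points at operators you may be able to drop from the bundle.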

Examples and applications that mirror real trade-offs

A vision model hits its latency target once after startup, then drifts above the target after a few minutes on an edge unit. After moving to TensorFlow 2.21, GPU bursts are faster, but thermals clamp. You switch a subset of layers to the NPU delegate. Latency becomes consistent, but accuracy drops on rare frames. You lock the final post-processing on CPU at higher precision. Latency remains stable and accuracy holds, though peak throughput is a hair lower than the fastest bench run.

A language model exported for mobile runs fine in the lab on PyTorch’s edge runtime. In the field, a cold start occasionally stalls. Packaging reveals an extra operator set pulled in by a rarely used path. You rework that path to a supported operator and shrink the bundle. Cold starts stop stalling, but one device model still shows a blip. Telemetry points to file I/O, not compute. You move initialization outside the hot path and the blip disappears.

A recommendation model gains from batched inference on GPUs. With 2.21, launch overhead is lower, so you try smaller micro-batches to reduce tail latency. It works until traffic spikes and memory fragmentation creates extra GC pressure. You pin micro-batch sizes to a small set and pre-allocate buffers. Tail latency improves again and stays put.

Where beginners and operators diverge

| Decision point | Students / Beginners | Experienced practitioners |
| --- | --- | --- |
| Adopting TensorFlow 2.21 GPU paths | Flip flags and trust default gains | Profile kernels, normalize shapes, prewarm graphs |
| NPU delegation | Enable delegate and hope for full coverage | Map op coverage, lock sensitive layers off, set fallbacks |
| PyTorch edge packaging | Accept larger bundles to avoid changes | Trim operator sets, refactor rare paths, test cold starts |
| Thermal and memory behavior | Validate once on a cool device | Soak test, track thermals, enforce memory caps |
| Accuracy vs precision | Use mixed precision everywhere | Calibrate, pin unstable layers to safer precision |

FAQs

Does TensorFlow 2.21 help without code changes?

Sometimes, but durable gains usually need shape normalization, prewarming, and light graph cleanup.

Are NPUs ready for all models?

No. Plan around partial coverage and keep a tested fallback route.

Will PyTorch edge upgrades reduce my app size?

They can, if your model aligns with the trimmed operator set. Audit before shipping.

Can I rely on mixed precision everywhere?

Not safely. Calibrate and protect layers that show sensitivity.

What should I monitor in production?

Delegate usage, peak memory, cold start time, and device thermals.

Rising pressure to make runtime choices observable

As GPU speedups and NPU delegates improve, the burden shifts to the pipeline owner to prove when each engine runs and why. Black boxes lose you time during regressions.

Build thin observability into the runtime rather than bolting it on after a miss. The progression from lab wins to field stability now depends less on raw speed and more on showing that speed occurs where and when you expect.