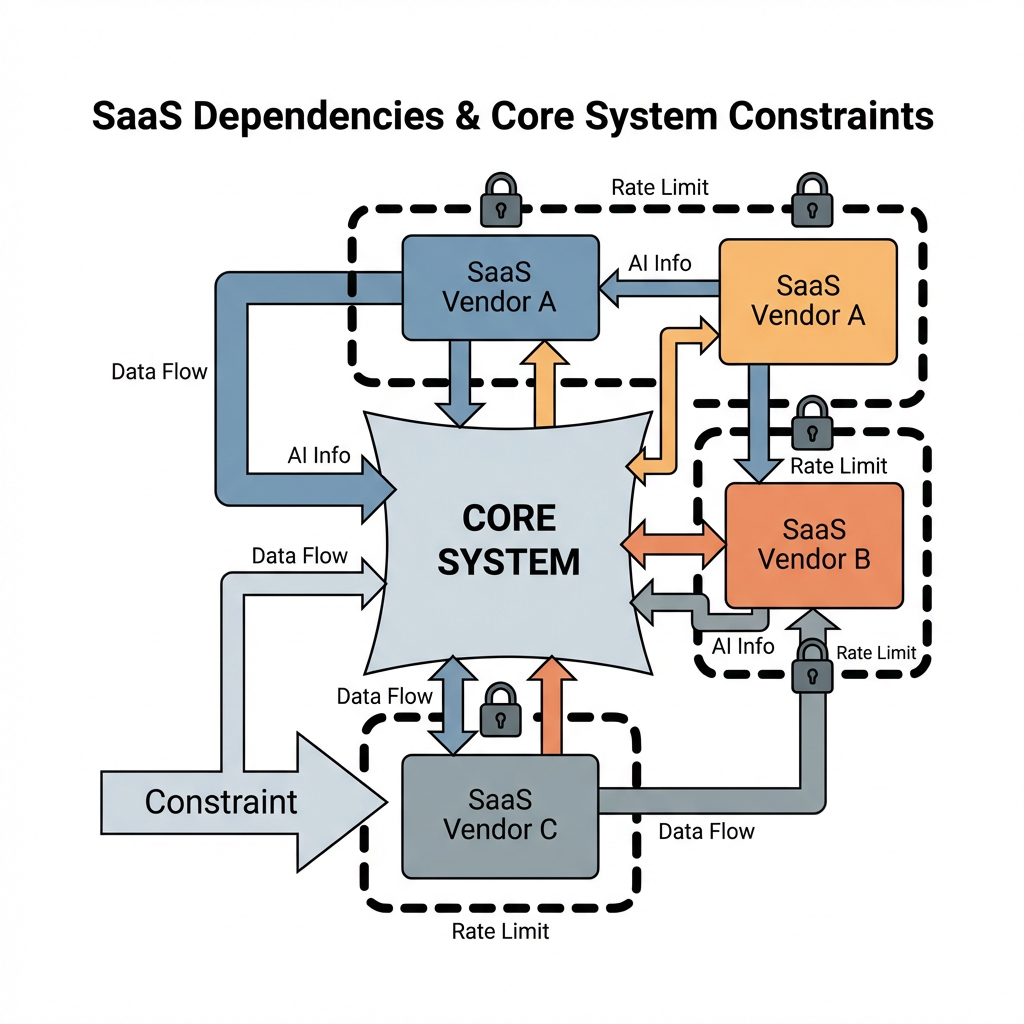

Teams keep adopting SaaS because the clock never stops and budgets do not grow on demand. The lure is speed, predictable cost lines, and built-in capabilities like ai information that would take months to replicate in house. The friction is control, data boundaries, and the awkward moments when a vendor constraint becomes your operational constraint.

Executive Summary

SaaS has moved from convenience to backbone. Once it sits in the core path of customer flow, every business objective becomes dependent on how well you can stitch external systems together and recover when they wobble.

The biggest change is not feature velocity, it is accountability moving from owning components to orchestrating behaviors across APIs, contracts, and multi-tenant limits. That shift forces different decisions about data modeling, incident response, and governance of ai information passing through vendor tools.

Real gains happen when you design for rate limits and propagation delays, accept partial visibility, and set budgets and SLOs that reflect vendor realities. Pain shows up when integration sequences are optimistic, when data assumptions are brittle, or when upgrades roll through at the vendor’s cadence rather than yours.

This piece walks through what breaks, how teams adapt, and where decisions carry consequences. No silver bullets, just operating patterns that reduce risk without grinding momentum.

Introduction

We were mid release, a quiet Tuesday, and a basic authentication change upstream turned our customer lifecycle into a slow leak. Sign up flowed, billing posted, but profile enrichment stalled for hours because the third party risk check throttled harder than usual. The dashboard showed green, our logs showed retries, and support started getting emails about missing confirmations. That is where How SaaS Products Are Changing the Business Landscape? stops being a strategy statement and becomes a production reality. The system’s health depended on a vendor we did not own. Our ai information pipeline paused too, since downstream scoring waited for those profiles. You can plan for latency, you still feel it.

This surfaced as a requirement because our revenue path now crosses multiple external systems. Many are solid. None are under our full control. Decisions about architecture, contracts, and operational playbooks suddenly carry more weight than a feature spec. The business landscape has shifted from building everything to selecting, sequencing, and absorbing external behavior without breaking your own commitments.

Core constraints when SaaS sits in the critical path

Once SaaS is embedded in core flows, you inherit its rate limits, upgrade schedule, logging granularity, and ideas about data governance. Multi-tenant platforms are built for average usage, not your peak marketing day. Error modes change from a single component failing to a dependency chain slowing to a crawl. You can switch vendors, you rarely do mid incident.

Vendor APIs shape your data model whether you like it or not. If an identity service only returns certain attributes, your ai information layer must infer the rest or operate with a thinner context. Backfills mean money and time. Retry logic that looks safe during testing can cascade into rate limit violations under load. You need a circuit breaker, not just exponential backoff.

Observability gets weird. You can instrument your code, you cannot instrument a vendor’s internal hops. Trace spans stop at API boundaries. Synthetic checks help but only show surface availability. When a vendor has a partial outage, your metrics look like noise unless you built signals that highlight dependency health separate from application health. That matters when support asks for a timeline and finance asks why refunds spiked.

Compliance pulls in new responsibilities. If your workflow passes personal data through third party tools, you carry contractual obligations, audit trails, and deletion paths that span systems. You do not have to be an expert in every regulation, you do need a predictable data lifecycle. That includes model behavior if you are feeding ai information into decision points. Drift and bias do not respect contract terms.

Where sequencing creates drag and rework

The first slowdown happens at integration sequencing. Teams wire sign up, identity, billing, and messaging in a straightforward flow. Then reality adds retries, asynchronous updates, and divergent clock speeds across vendors. A confirmation that arrives two minutes late can invalidate a risk score generated earlier. The naïve fix is more polling. The better fix is events with idempotency and a state machine that tolerates out of order arrivals.

Handoffs get political. Product wants to ship. Security wants guardrails. Legal wants enforceable clauses. Operations wants runbooks. Each new SaaS brings a review cycle, procurement friction, and a sandbox timeline that drifts. If you do not define a gate, you end up with tools sneaking in through shadow usage then becoming indispensable without operational cover.

Dependencies create revisits. You might select a vendor because the SDK looks clean and the pricing matches a spreadsheet. Six months later, a feature flag change or data residency requirement forces rewiring. The cost is not just engineering time, it is the pause in roadmap while you untangle API contracts, migrate historical records, and recalibrate your ai information processing to the new schema.

Teams slow down when error handling is centralized. If the platform that orchestrates your flows becomes the only place with retry logic, every integration waits for that team to ship fixes. Decoupling helps, but then you need consistent failure semantics and an agreement on what counts as a recoverable error. Those arguments are healthy. They prevent 3 am page outs that could have been absorbed.

Tools and Technologies under real constraints

Tool choice is rarely about features, it is about the pain you are willing to carry. A customer support platform with limited export paths might be fine until you need to run global audits. A feature flag service that batches updates might save cost yet introduce visible lag during a rollout. A cloud data warehouse can centralize logs, but the ingestion window matters when your incident report depends on timely traces.

For ai information, the decision often hinges on how inference is triggered and governed. If model calls sit in request threads, you trade latency for simplicity. If they move to async pipelines, you add operational complexity, this buys resilience and cost control since you can batch and degrade gracefully. The model serving layer matters less than how you monitor outputs and constrain side effects.

Observability stacks explain more decisions than vendor brochures. If you cannot correlate a vendor outage with user impact, you will either overreact or underreact. Tools that give you dependency health, rate limit visibility, and accurate replay capabilities make incident response survivable. Without them, you guess, then you write apologetic emails.

Examples and Applications without neat endings

Marketing ran a successful campaign. Sign ups tripled for a day. The identity verification service held up until mid afternoon, then rate limited harder. Our retries were polite. Too polite. New users waited, some bounced, and downstream enrichment piled up. We added a capped queue for sensitive steps with backpressure signals to the frontend. It did not fix conversion, it stopped the bleed and gave us room to negotiate higher thresholds.

We added a new analytics connector to track user behavior. The connector transformed events into a schema we could not fully map to our warehouse. Dashboards looked great until finance tried to reconcile revenue against usage. The mismatch cost us a week of manual stitching. The fix was boring, a canonical event schema and a nightly consistency job. It forced product to give up some flexibility, it paid back during quarter close.

An AI powered support triage flagged critical tickets correctly most of the time. The misses hurt because they were silent. We built a shadow evaluation path using anonymized samples and tracked drift. This surfaced subtle changes after a vendor updated their model without loud release notes. We did not get perfect accuracy. We got confidence to catch regressions early and a process to switch to manual triage under defined thresholds.

A compliance audit asked for deletion proofs across every system where personal data could land. SaaS made the map longer. We built a deletion ledger that records requests, confirmations, and retries per system. Some vendors offered webhooks, others offered reports, a few required support tickets. It was not elegant. It passed the audit and gave us a reason to push for better clauses next renewal.

Tables and Comparisons that clarify choices

Decision Area Newer teams tend to Experienced teams tend to Business impact API rate limits Trust vendor defaults Negotiate thresholds and add circuit breakers Fewer spikes, predictable degradation Data modeling Mirror vendor schemas directly Define canonical events and map per vendor Easier audits, cleaner migrations Error handling Centralize retries in one service Distribute with consistent semantics and budgets Faster fixes, fewer bottlenecks ai information usage Inline inference in request path Async pipelines with evaluation and fallback Better reliability, controlled latency Vendor selection Optimize for features and price Optimize for observability and contract terms Smoother incidents, clearer accountability

FAQ Section

How do we handle vendor outages without overbuilding?

Define degradation modes in advance. Decide what you will show users, where you will queue, and when you flip to alternate paths. Practice with game days, not just documents.

What is the smartest way to manage data passing through third party tools?

Keep a canonical schema and explicit contracts for sensitive fields. Track lineage, add deletion ledgers, and avoid hidden copies. If a tool cannot export cleanly, reconsider its role.

How do we keep costs from spiking with usage bursts?

Set budgets per integration and enforce them with backpressure. Batch noncritical work. Negotiate rate tiers and alert early when thresholds approach.

Where does ai information fit without breaking SLAs?

Place inference where latency is tolerable. Add evaluation paths and fallbacks. If a model result gates a critical flow, make it asynchronous or cache decisions with expiration.

How do we avoid lock in while still moving fast?

Abstract at the boundary you are willing to maintain. Map events, isolate vendor specific logic, and keep data portable. Switching remains costly, it becomes possible.

Responsibility shifts from ownership to orchestration

Given how things behave today, this is what quietly changes next. Teams that succeed treat SaaS like living infrastructure, not static services. They invest in event design, dependency signals, and contract negotiations that match their operational risk, then they accept imperfect visibility and move anyway.

Procurement to trial to integration to governance to pruning