Executive Summary

Intelligent automation is shifting from task execution to AI decision making at scale. This guide explains how to design the governance and infrastructure layers that make those decisions safe, compliant, observable, and cost-effective. You will learn the essential architecture components, how the lifecycle works end-to-end, and which tools to choose for real-world deployments today.

Introduction

Most enterprises now run pilots that combine workflow automation with large language models and predictive services. The challenge is no longer proof-of-concept accuracy; it is building a production-grade foundation where AI decision making happens with clarity, trust, and control. In this context, building the modern intelligent automation stack is about more than picking an LLM. It is about institutionalizing policies, engineering guardrails, and operating a platform that can withstand audits, scale across business lines, and improve continuously without surprise regressions.

Understanding the Topic

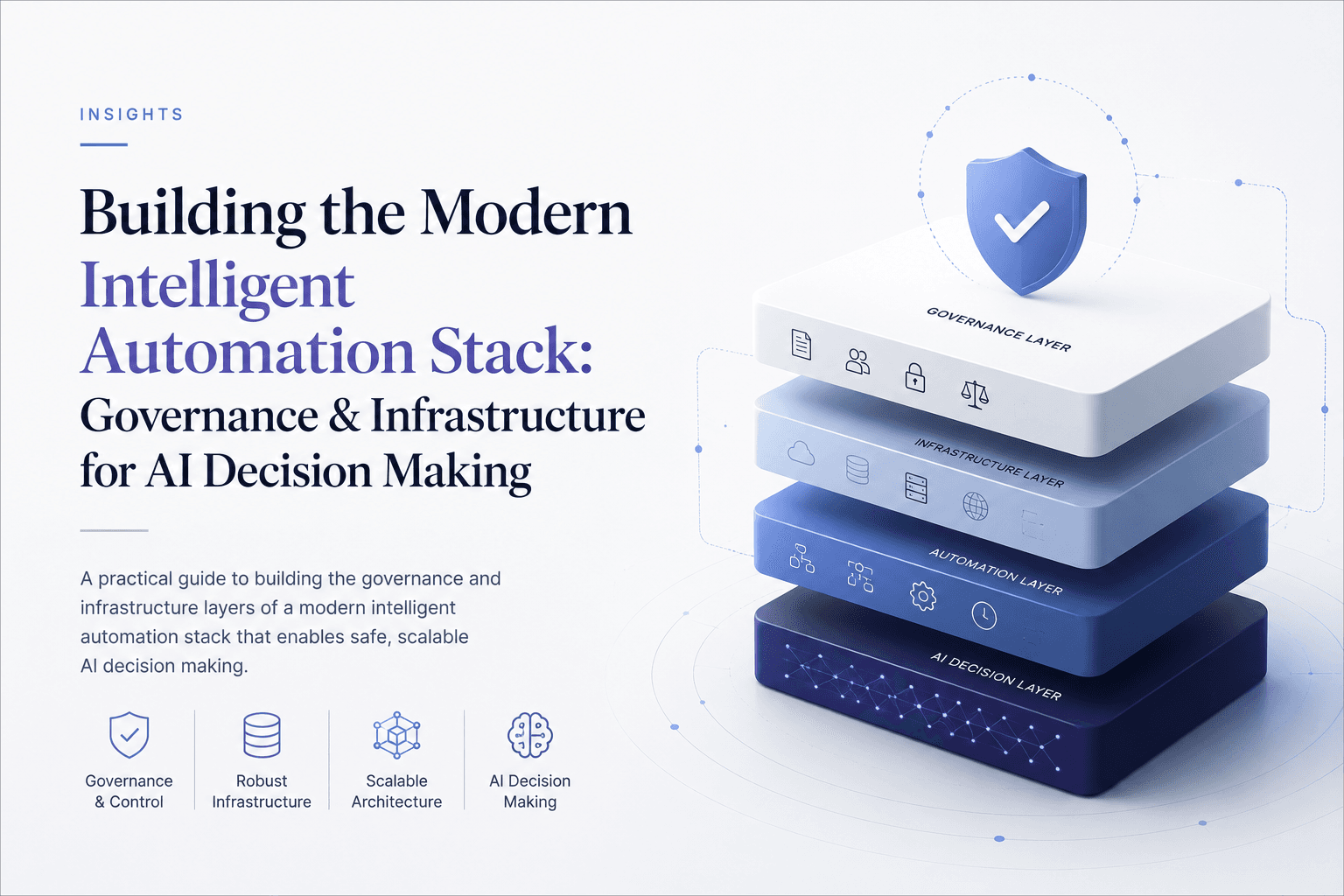

The modern intelligent automation stack is the set of platform capabilities that enable software and AI systems to make and execute decisions reliably. It brings together the data plane, the model and prompt plane, policy and guardrails, orchestration, observability, and security so AI decision making can be governed, measured, and improved.

Practically, this stack has six foundational layers. First, the data plane manages sources, quality, lineage, and access controls for both structured and unstructured inputs. Second, the model and prompt plane provides retrieval-augmented generation, fine-tuned models, prompt templates, and evaluation harnesses. Third, the policy and guardrail plane encodes constraints—acceptable use, safety, PII redaction, and decision thresholds—enforced in real time. Fourth, the control and orchestration plane schedules jobs, manages workflows, and provides human-in-the-loop routing. Fifth, the observability and evaluation plane captures traces, tokens, metrics, and outcomes to quantify quality and cost. Finally, the security and compliance plane handles identity, secrets, encryption, audit logs, and evidence management.

What distinguishes this approach from legacy automation is the explicit separation of decision logic from execution mechanics. Business policies live independently of models and prompts, so change control is faster and safer. Every decision can be traced to its inputs, versions, policies, and approvals, which makes AI decision making defensible under regulatory scrutiny.

How This Works in Practice

The lifecycle starts with intake and scoping. Define the decision: what action is being taken, under what constraints, and what is the acceptable risk. Translate that into measurable success criteria: latency, accuracy, coverage, fairness, and unit economics (e.g., cost per decision).
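As a minimal sketch of how such success criteria might be written down and checked, the snippet below uses a hypothetical `SuccessCriteria` dataclass and illustrative thresholds; none of the names or numbers come from a specific platform. Fairness is left out because it needs a use-case-specific definition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriteria:
    """Hypothetical targets for one decision flow; values are illustrative."""
    max_p95_latency_ms: float
    min_accuracy: float
    min_coverage: float
    max_cost_per_decision_usd: float


def cost_per_decision(total_spend_usd: float, decisions: int) -> float:
    """Unit economics: total spend divided by the number of decisions made."""
    if decisions <= 0:
        raise ValueError("decisions must be positive")
    return total_spend_usd / decisions


def check(criteria: SuccessCriteria, *, p95_latency_ms: float, accuracy: float,
          coverage: float, cost_usd: float) -> dict[str, bool]:
    """Return a pass/fail flag per criterion so gaps are visible individually."""
    return {
        "latency": p95_latency_ms <= criteria.max_p95_latency_ms,
        "accuracy": accuracy >= criteria.min_accuracy,
        "coverage": coverage >= criteria.min_coverage,
        "cost": cost_usd <= criteria.max_cost_per_decision_usd,
    }


# Illustrative numbers only.
criteria = SuccessCriteria(
    max_p95_latency_ms=2000, min_accuracy=0.95,
    min_coverage=0.80, max_cost_per_decision_usd=0.05,
)
unit_cost = cost_per_decision(total_spend_usd=42.0, decisions=1000)
report = check(criteria, p95_latency_ms=1500, accuracy=0.93,
               coverage=0.85, cost_usd=unit_cost)
```

Reporting each criterion separately, rather than a single pass/fail, keeps the scoping conversation concrete: here the flow would meet its latency, coverage, and cost targets but miss on accuracy.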

Next, perform risk and compliance assessment. Classify data sensitivity, identify in-scope regulations, and determine oversight needs. High-impact use cases may require stronger guardrails, human approvals, or pre-deployment validation steps.

Data readiness follows. Curate retrieval corpora, establish schemas, configure feature stores for structured inputs, and enable document governance. Add policies for PII handling, retention, and masking.

Design the decisioning approach. Choose between pure rules, statistical models, LLMs with retrieval, or hybrids. Create prompts, constraints, and a decision schema. Build evaluation pipelines with golden sets, adversarial tests, and cost-latency profiles.
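A golden-set evaluation can start very small. The sketch below assumes a decision function that returns a label or `None` when it abstains; `keyword_decider` and `GOLDEN_SET` are hypothetical stand-ins for a real decision service and a curated test set, and the third case plays the role of an adversarial example.

```python
from typing import Callable, Optional

GoldenCase = tuple[str, str]  # (input text, expected decision)


def evaluate(decide: Callable[[str], Optional[str]],
             golden_set: list[GoldenCase]) -> dict[str, float]:
    """Score a decision function: coverage counts non-abstentions,
    accuracy is measured only over the cases it actually answered."""
    answered = 0
    correct = 0
    for text, expected in golden_set:
        got = decide(text)
        if got is None:  # the decider abstained
            continue
        answered += 1
        if got == expected:
            correct += 1
    total = len(golden_set)
    return {
        "coverage": answered / total if total else 0.0,
        "accuracy": correct / answered if answered else 0.0,
    }


def keyword_decider(text: str) -> Optional[str]:
    """Toy stand-in for a real decision service (rules, model, or LLM)."""
    lowered = text.lower()
    if "refund" in lowered:
        return "billing"
    if "password" in lowered:
        return "account"
    return None


GOLDEN_SET: list[GoldenCase] = [
    ("I want a refund for my order", "billing"),
    ("I forgot my password", "account"),
    ("Refund my password reset fee", "account"),  # adversarial: two keywords
    ("Where is my parcel?", "shipping"),          # outside the toy rules
]

scores = evaluate(keyword_decider, GOLDEN_SET)
```

Separating coverage from accuracy matters because a system that abstains often can look accurate while handling very little traffic.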

Implement guardrails and policies. Configure content filters, tool permissioning, policy engines (e.g., allow/deny lists, thresholding), and redaction. Define escalation paths for uncertain or high-risk decisions and human-in-the-loop checkpoints.
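The following sketch illustrates three of these guardrails in plain Python: regex-based redaction, a tool allow list, and threshold-based escalation. The patterns, the `ALLOWED_TOOLS` mapping, and the 0.8 confidence threshold are assumptions for illustration; production redaction typically needs far broader PII coverage than two regular expressions.

```python
import re

# Illustrative patterns only; real PII detection needs much wider coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

# Hypothetical allow list: which tools each role may invoke.
ALLOWED_TOOLS: dict[str, set[str]] = {
    "support_agent": {"search_kb", "create_ticket"},
}


def redact(text: str) -> str:
    """Replace matched emails and SSN-shaped numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return US_SSN_RE.sub("[SSN]", text)


def tool_allowed(role: str, tool: str) -> bool:
    """Deny by default: unknown roles get an empty allow list."""
    return tool in ALLOWED_TOOLS.get(role, set())


def route(confidence: float, high_risk: bool, min_confidence: float = 0.8) -> str:
    """Escalate uncertain or high-risk decisions to a human reviewer."""
    if high_risk or confidence < min_confidence:
        return "human_review"
    return "auto"


clean = redact("Contact jane@example.com, SSN 123-45-6789")
```

Keeping each guardrail as a small, pure function makes it straightforward to unit test with adversarial inputs before wiring it into a gateway or policy engine.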

Orchestrate and integrate. Deploy as APIs or workers behind a gateway. Connect to upstream event streams and downstream systems of record. Externalize secrets and enforce least-privilege access.

Deploy with controls. Use environment promotion, versioned artifacts, rollout strategies (canary, shadow), and automated rollback. Capture a full bill of materials for each decision service: data snapshots, prompts, policies, and model versions.
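One possible shape for such a bill of materials is a small immutable record with a content fingerprint. The `DecisionBOM` class, its field names, and the sample values below are hypothetical; the idea is only that any change to a component yields a different fingerprint.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionBOM:
    """Hypothetical bill of materials for one deployed decision service."""
    service: str
    model_version: str
    prompt_version: str
    policy_version: str
    data_snapshot: str

    def fingerprint(self) -> str:
        """SHA-256 over a canonical JSON form, so equal contents hash equally."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


bom_a = DecisionBOM(
    service="claims-triage",          # illustrative values throughout
    model_version="model-2024-05",
    prompt_version="prompt-v7",
    policy_version="policy-1.2.0",
    data_snapshot="snapshot-0001",
)
# Same service, but the policy was bumped: the fingerprint must change.
bom_b = DecisionBOM(
    service="claims-triage",
    model_version="model-2024-05",
    prompt_version="prompt-v7",
    policy_version="policy-1.3.0",
    data_snapshot="snapshot-0001",
)
```

Attaching the fingerprint to each release and each logged decision gives rollback and audit tooling a single value to compare.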

Monitor and improve. Track quality, cost, drift, safety incidents, and user feedback. Re-evaluate prompts and retrieval configurations. Automate post-incident reviews and feed learnings into policy updates and retraining cycles.
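The text does not prescribe a drift metric. One common choice, used here purely as an assumption, is the Population Stability Index (PSI), which compares the binned distribution of a feature or score at baseline against the same bins in production. The function name `psi` and the sample proportions are illustrative.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index over pre-binned proportions.

    Each list holds the share of observations per bin (summing to about 1).
    Proportions are floored at eps so empty bins do not cause log(0).
    """
    if len(expected) != len(actual):
        raise ValueError("expected and actual must have the same number of bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e = max(e, eps)
        a = max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


baseline = [0.25, 0.25, 0.25, 0.25]   # illustrative bin shares at launch
this_week = [0.10, 0.20, 0.30, 0.40]  # illustrative bin shares in production

stable = psi(baseline, baseline)
shifted = psi(baseline, this_week)
```

A value of zero means the two distributions match bin for bin; larger values indicate a bigger shift. The alerting threshold is a judgment call that depends on the decision being monitored.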

Tools and Technologies

Languages

Python for orchestration, evaluation, and ML pipelines; TypeScript/Node.js for API services and gateways; SQL for data prep, lineage queries, and analytical validation.

Libraries

LangChain or LlamaIndex for retrieval and tool orchestration; MLflow for experiment tracking and model registry; Great Expectations and Evidently AI for data and model quality; Ray for distributed workloads; Open Policy Agent (OPA) or Cedar-compatible engines for policy enforcement; Pydantic or Marshmallow for schema validation.

Platforms

Kubernetes for container orchestration and isolation; Airflow or Prefect for pipelines; Kafka or Pub/Sub for events; Snowflake, BigQuery, or Databricks for data; Vector databases such as Pinecone, Weaviate, or pgvector; Model endpoints via Azure OpenAI, AWS Bedrock, or Vertex AI; Hugging Face Inference Endpoints for open models; Feature stores like Feast; Observability with OpenTelemetry, Prometheus, and Grafana; Secrets and KMS via Vault or cloud-native equivalents; API gateways and service meshes such as Kong or Envoy; CI/CD with GitHub Actions, GitLab, or Argo.

Examples and Application

Insurance claims adjudication

Strategic: Reduce cycle time and leakage while keeping explainability for regulators. Practical: Use retrieval-augmented LLMs to summarize evidence and propose decisions; enforce policy thresholds; require human approval above exposure limits; log every recommendation with source citations.

Supply chain replenishment

Strategic: Balance service levels and working capital across regions. Practical: Combine predictive demand models with rule-based constraints for MOQ, lead time, and supplier risk; let LLMs generate rationale summaries; implement canary rollouts by product class to manage risk.

Customer support triage

Strategic: Improve first-contact resolution and CSAT at lower cost. Practical: Classify intents, route tickets, and generate initial responses with retrieval; apply content filters and tone guidelines; escalate uncertain cases; continuously evaluate accuracy and hallucination rates.

Tables and Comparisons

The table below summarizes core components that enable trustworthy AI decision making, with benefits, limitations, and practical considerations.

| Component | Benefits | Limitations | Practical Considerations |
| --- | --- | --- | --- |
| Guardrails & Policy Engine | Consistent enforcement; auditability; faster change control | Overly strict rules can degrade utility | Externalize policies; version them; test with adversarial cases |
| LLM Gateway | Centralized costs, routing, and safety filters | Single-point bottleneck if under-provisioned | Add circuit breakers, per-tenant quotas, and token budgeting |
| Retrieval Layer (RAG) | Grounded answers; reduces hallucinations | Quality hinges on indexing and chunking | Continuously evaluate retrieval hit rate and citation coverage |
| Feature/Prompt Store | Reusability and reproducibility | Governance overhead | Track lineage; tie versions to evaluation scores |
| Human-in-the-Loop | Risk control and learning from feedback | Throughput and latency impact | Threshold decisions for review; measure reviewer agreement |
| Observability & Evaluation | Quantifies quality, cost, and drift | Metric selection can bias improvements | Use task-specific metrics and periodic blind audits |

Design Principles That Scale

Separate policy from prompts and models. This lets you change decision thresholds without redeploying the model. Use a policy-as-code engine and treat policy changes like code changes with reviews and tests.
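To make the separation concrete, the sketch below keeps thresholds in a versioned policy document and applies them to a model's proposal. It is a plain-Python stand-in, not the API of OPA or any other policy engine, and the field names (`auto_approve_max_amount`, `min_confidence`) are hypothetical.

```python
import json

# Policies live as versioned data, separate from prompts and model code.
POLICY_V1 = json.loads(
    '{"version": "1.0.0", "auto_approve_max_amount": 1000, "min_confidence": 0.9}'
)
# A stricter revision: only the policy document changes, not the model.
POLICY_V2 = json.loads(
    '{"version": "1.1.0", "auto_approve_max_amount": 500, "min_confidence": 0.9}'
)


def apply_policy(policy: dict, *, amount: float, confidence: float) -> dict:
    """Decide whether a model's proposal may proceed automatically.

    The result carries the policy version so the decision stays traceable.
    """
    if confidence < policy["min_confidence"]:
        outcome = "escalate"
    elif amount > policy["auto_approve_max_amount"]:
        outcome = "escalate"
    else:
        outcome = "approve"
    return {"outcome": outcome, "policy_version": policy["version"]}


# The same model output is handled differently under each policy version.
under_v1 = apply_policy(POLICY_V1, amount=800, confidence=0.95)
under_v2 = apply_policy(POLICY_V2, amount=800, confidence=0.95)
```

Because the policy is data, a change such as lowering the auto-approval limit can go through review and tests like any other code change while the model and prompt versions stay fixed.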

Make every decision reproducible. Log inputs, versions, and outcomes. Store the exact prompt, retrieval context IDs, and policy versions used for each decision to enable replay and root-cause analysis.
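A decision log entry along these lines could look like the sketch below. `DecisionRecord` and its fields are hypothetical; the point is that the record round-trips through a log line without losing anything needed for replay.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical log entry holding what is needed to replay a decision."""
    decision_id: str
    inputs: dict
    prompt: str
    retrieval_context_ids: tuple[str, ...]
    model_version: str
    policy_version: str
    outcome: str
    recorded_at: str  # ISO 8601 timestamp


def to_log_line(record: DecisionRecord) -> str:
    """Serialize to one JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


def from_log_line(line: str) -> DecisionRecord:
    """Rebuild the record; JSON arrays come back as lists, so restore the tuple."""
    data = json.loads(line)
    data["retrieval_context_ids"] = tuple(data["retrieval_context_ids"])
    return DecisionRecord(**data)


record = DecisionRecord(
    decision_id="d-0001",  # illustrative values throughout
    inputs={"claim_amount": 800, "channel": "web"},
    prompt="Summarize the evidence and propose a decision.",
    retrieval_context_ids=("doc-12#3", "doc-40#1"),
    model_version="model-2024-05",
    policy_version="policy-1.2.0",
    outcome="approve",
    recorded_at="2024-05-01T12:00:00+00:00",
)
restored = from_log_line(to_log_line(record))
```

Storing retrieval context IDs rather than full passages keeps log lines small, on the assumption that the referenced chunks are themselves versioned and retained.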

Engineer for financial governance. Track unit economics—cost per 1,000 tokens, per request, and per decision path. Implement quotas and circuit breakers to avoid runaway spend during incident conditions.
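A token quota and a circuit breaker can each be expressed in a few lines. The sketch below is a simplified, single-process illustration with hypothetical class names; the breaker closes fully after its cooldown instead of modeling a separate half-open state, and a real gateway would need shared state across instances.

```python
import time
from typing import Callable, Optional


class TokenBudget:
    """Hard quota: refuse any request that would exceed the token limit."""

    def __init__(self, limit_tokens: int) -> None:
        self.limit_tokens = limit_tokens
        self.used_tokens = 0

    def try_consume(self, tokens: int) -> bool:
        if self.used_tokens + tokens > self.limit_tokens:
            return False
        self.used_tokens += tokens
        return True


class CircuitBreaker:
    """Stop calling a failing dependency until a cooldown has elapsed."""

    def __init__(self, failure_threshold: int, cooldown_s: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.cooldown_s = cooldown_s
        self.clock = clock  # injectable so tests can control time
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        if self.clock() - self.opened_at >= self.cooldown_s:
            # Cooldown over: close the breaker and start counting afresh.
            self.opened_at = None
            self.failures = 0
            return True
        return False

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()

    def record_success(self) -> None:
        self.failures = 0
```

A caller would check `breaker.allow()` and `budget.try_consume(estimated_tokens)` before each model request, and fall back or queue the work when either returns `False`.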

Design for multi-tenancy and access control. Isolate by business unit and environment. Apply least privilege for data and tools. Use a gateway to enforce safety and usage policies centrally.

Close the loop. Treat evaluation as a product feature. Run scheduled regression tests, shadow traffic, and post-incident reviews, feeding insights back into prompts, retrieval corpora, and policies.

Compliance and Risk Posture

Map controls to recognized frameworks such as the NIST AI Risk Management Framework and ISO/IEC 23894. For regulated decisions, maintain model and prompt cards, conduct impact assessments, and ensure human oversight where mandated. Build audit artifacts directly into your pipelines so evidence generation is automatic, not an afterthought.

Common Pitfalls

Overfitting to happy paths. Evaluate with adversarial prompts, out-of-domain data, and edge cases.

Underestimating data governance. Poor document hygiene and access policies undermine even the best models.

Treating evaluation as a one-off exercise. Without continuous testing, performance drifts silently.

Ignoring cost controls. Token spend can explode; implement budgets and dynamic routing to lower-cost models when possible.
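Dynamic routing to lower-cost models can be sketched as "pick the cheapest tier that can handle the request and fits the remaining budget". The tier names, prices, and the integer complexity score below are all hypothetical placeholders, not real model names or published prices.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelTier:
    name: str
    usd_per_1k_tokens: float
    max_complexity: int  # highest task complexity this tier is trusted with


# Hypothetical tiers and prices, for illustration only.
TIERS = [
    ModelTier("small-model", usd_per_1k_tokens=0.0005, max_complexity=3),
    ModelTier("large-model", usd_per_1k_tokens=0.01, max_complexity=10),
]


def pick_model(complexity: int, remaining_budget_usd: float, est_tokens: int,
               tiers: list[ModelTier] = TIERS) -> Optional[ModelTier]:
    """Return the cheapest capable tier that fits the budget, else None."""
    for tier in sorted(tiers, key=lambda t: t.usd_per_1k_tokens):
        est_cost = est_tokens / 1000 * tier.usd_per_1k_tokens
        if complexity <= tier.max_complexity and est_cost <= remaining_budget_usd:
            return tier
    return None


easy = pick_model(complexity=2, remaining_budget_usd=1.0, est_tokens=1000)
hard = pick_model(complexity=7, remaining_budget_usd=1.0, est_tokens=1000)
broke = pick_model(complexity=7, remaining_budget_usd=0.001, est_tokens=1000)
```

Returning `None` when nothing fits forces the caller to decide explicitly between queuing, escalating to a human, or rejecting the request, rather than silently overspending.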

Putting It All Together

A mature platform is a set of contract-bound services: a decision API behind a gateway, retrieval indices with ownership and SLAs, a policy engine with versioned rules, an evaluation service with golden sets, and observability that ties every token to a trace. With this foundation, AI decision making becomes a managed capability rather than a risky experiment.

Call to Action

If you are aligning multiple teams behind a common approach to intelligent automation, start with a short governance and infrastructure baseline. Inventory what you already have, pick one critical decision flow, and stand up the guardrails and observability first. If you need a pragmatic blueprint or a second set of eyes, explore our guides and reach out for a working session.

Links

Internal resources: Intelligent automation use cases, AI decision making patterns, Prompt governance, AI governance assessment, LLM platform engineering, Data engineering.

External references: NIST AI RMF, ISO/IEC 23894, EU AI Act overview, Kubernetes docs, MLflow, Open Policy Agent, OpenTelemetry, Evidently AI.