Executive Summary

Once you move past demos, retrieval-augmented generation stops being a pattern and starts being an operational discipline. The moment answers touch contracts, policies, or customers, the pipeline shape is dictated by latency budgets, governance rules, and brittle integrations you didn’t choose.

Production RAG Pipelines for Enterprise AI Solutions show up because static prompts can’t hold context. Systems either retrieve the right evidence at the right moment or they drift, and drift gets expensive. Retrieval makes you confront data quality, fragmentation, and the practical cost of keeping indexes current while the business keeps moving.

The hard parts aren’t novel models; they’re boundaries. Who owns the document truth? What qualifies as sufficient evidence? How do you stop the pipeline from leaking sensitive data during a hot incident? The answers live in runbooks, queues, and budgets, not in slides.

Most improvements don’t come from clever tricks; they come from watching failure modes in production and tightening the handoffs. Expect slow progress redistributing responsibility from prompts to data contracts and from explorations to durable behaviors.

Introduction

A team shipped a knowledge assistant for a global operations org, scoped as a lightweight win. It worked in pilot. Then a quarter-end surge hit, and answers slowed to a crawl. Index builds collided with downstream sync windows. Compliance flagged a response that quoted an outdated policy. SRE throttled the model gateway to keep capacity for customer-facing APIs. The assistant felt fine in testing; under load, it exposed every dependency the team had glossed over.

That incident made Production RAG Pipelines for Enterprise AI Solutions shift from interesting to required. It wasn’t about rolling back; it was about making retrieval accountable to latency, accuracy, and governance under real usage spikes. Any enterprise AI solutions stack that touches regulated knowledge or complex workflows discovers the same tension: retrieval must be fast, correct, and safe, while the underlying data is messy and continuously changing.

Latency pressure meets governance: what production RAG actually becomes

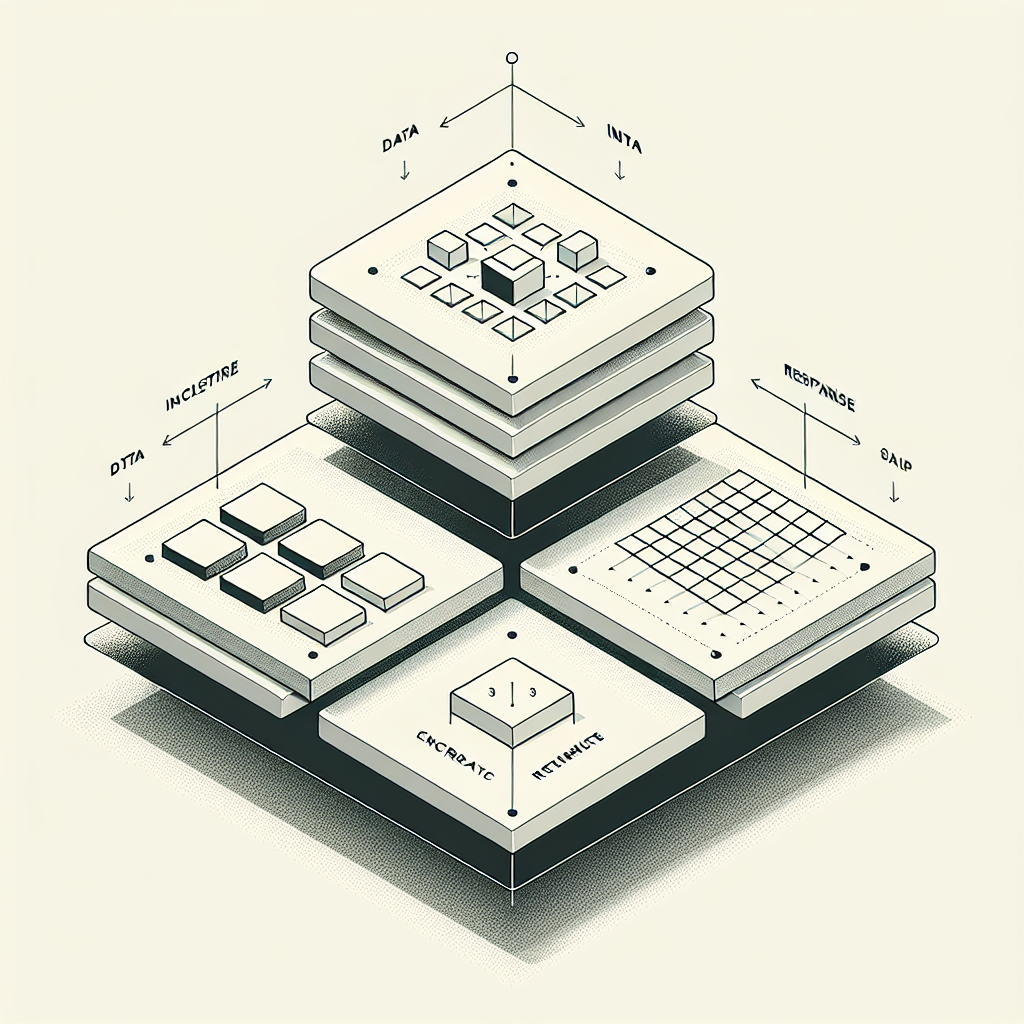

In production, RAG isn’t a single model plus a vector store. It’s a set of services that have to survive schedule conflicts, partial failures, and policy checks. There’s ingestion that chunks and tags content, enrichment that calculates embeddings and rules, an index that supports concurrent writes and cache-aware reads, a retriever that negotiates recall vs cost, and a response layer that assembles evidence under token and compliance limits.

Constraints show up fast. Index freshness competes with query speed. Long documents split unpredictably; chunk boundaries decide answer quality. Metadata becomes your safety net—without strong typing and lineage, your filters either under-include or leak. Observability can’t be model-only; you need to track what was retrieved, why, and whether the answer would have changed with newer source data.

Boundaries are practical: retrieval must operate within tenancy, classification, and consent rules. You can’t rely on downstream masking if upstream allowed sensitive chunks to land in an index shared across departments. Failure modes look familiar—stale indexes, silent ingestion drops, embedding skew after a model refresh, tail latency spikes under burst traffic, and policy mismatches when documents carry conflicting tags across systems.

Operators eventually introduce circuit breakers: skip enrichment when queues back up, fall back to a smaller index under load, cap retrieval depth per question type, and degrade answers to citations-only when confidence drops. None of that is elegant, but it keeps the pipeline from turning one bad ingestion event into widespread answer contamination.

Sequencing and handoffs dictate reliability more than any single component

The friction lives in the order of operations. Source data arrives at odd intervals; some feeds are batch, others event-driven. Ingestion normalizes structure, applies classifications, and queues for enrichment. Enrichment has dependencies—embedding model version, language detection, entity extraction. Indexing writes collide with read-heavy periods. Retrieval layers carry query routing rules that choose between sparse and dense search based on question shape. The response assembly applies templates, confidence checks, and policy redactions before it hits the model gateway.

Teams tend to slow down in two places: enrichment versioning and retrieval filters. Embedding changes carry subtle drift; answers look fine until a niche topic surfaces and recall collapses. Filters seem harmless until governance requirements shift and the allowed metadata fields don’t align with what the index supports. Those revisits aren’t optional; they’re where reliability is earned.

When environments disagree, the pipeline breaks at the seams

Development uses small datasets that behave well under low load. Staging gets more realistic but often lacks the ugliest sources. Production introduces noisy PDFs, multilingual content, and nightly batch jobs that compete with daytime traffic. Without environment-specific safeguards, you end up testing a fast path and shipping a slow path. The fix is awkward: isolate ingestion windows, introduce canary indexes for new embeddings, and enforce query budget checks that stick even when leadership asks for instant acceleration.

Hand-offs between owners define your failure recovery speed

Legal owns classifications, data engineering owns lineage, platform owns the index, and application teams own retrieval templates. Incidents cross these lines. A broken classification taxonomy won’t be found by platform alerts; it looks like retrieval getting worse “for no reason.” Running a RAG pipeline means treating taxonomies and policies as versioned artifacts with rollback plans, not as static configurations buried in a doc.

Tools and technologies only matter where they set hard edges

The tool choices are secondary until they create hard constraints. A document parser that can’t preserve layout will force chunking strategies that hurt recall. An enrichment service that doesn’t track embedding versions makes drift invisible and postmortems weak. An index that handles concurrent writes poorly dictates nighttime rebuilds and daytime staleness. A model gateway with uneven rate-limits pushes you toward more local summarization to avoid bursting in the wrong window.

Observability needs to sit at the retrieval boundary. Capture which chunks were selected, their timestamps, classifications, and how they influenced the final tokens. Log reasoning signals only where they help decisions: confidence bins, retrieval depth, cache hits. Anything else adds noise during incidents. A workflow orchestrator helps when queues back up, but it’s only useful if the pipeline can skip non-essential enrichments without corrupting the index state.

For data management, contracts outlive tools. If downstream systems disagree on document IDs or classification semantics, the pipeline becomes a polite liar. This is where enterprise AI solutions either stabilize or stall. Aligning on identifiers and lineage makes retrieval predictable; the specific storage or embedding tech becomes a replaceable part rather than the thing you fear touching.

Examples and applications under real pressure

A procurement assistant pulls clauses from thousands of vendor agreements. It works until an acquisition drops a new batch with different section markers. Chunking based on old assumptions shatters the clauses and retrieval starts returning half-statements. The team toggles a fallback: use structural cues when present, otherwise rely on regex heuristics. It fixes recall but increases latency. Legal asks for stronger citations; the response layer adds stricter evidence thresholds and returns fewer answers during spikes. Users complain about “unhelpful” outputs; leadership prefers safe over fast.

A support bot uses internal runbooks and past tickets. Fresh tickets carry sensitive details that indexing should avoid. A temporary mask misconfiguration lets a handful slip through. Observability picks it up only because retrieval logs include classifications. The fix is not a new model; it’s moving masking upstream, adding a quarantine index, and forcing delayed visibility for volatile sources. Accuracy improves; costs rise due to dual indexing. Finance challenges the spend. The compromise: reduce retrieval depth for high-frequency queries that already sit in a cache, and reserve deeper retrieval for rare, complex issues.

A compliance Q&A references policy documents that change monthly. The ingestion pipeline rebuilds nightly, but users need answers during updates. The team adds a transitional state: mark documents as pending and let retrieval avoid mixed versions. During the switch, a rare query returns empty because all relevant documents sit in pending. Operators override with a time-bounded fallback index. It’s messy, but it avoids contradictory citations while keeping service available.

Tables and comparisons that help decisions under uncertainty

DecisionNewcomers’ LeaningExperienced AdjustmentOperational ImpactChunk sizeUniform small chunks for recallHybrid sizing tied to structure and metadataBetter precision, lower token waste, more complex ingestionIndex freshnessNightly rebuildsIncremental writes plus canary rebuildsLower staleness, higher write complexity, safer rollbacksRetrieval depthMax depth for safetyDepth caps based on query type and confidenceStable latency, lower cost, occasional conservative answersEmbedding updatesUpgrade universallyShadow indexes and sampled A/B retrievalControlled drift, extra storage, slower rolloutPolicy filtersApply late in responseApply early at indexing and retrievalFewer leaks, stricter outputs, higher upfront governance work

FAQ: questions that surface during adoption and scale

How do we balance speed with correctness when users are impatient? Set query budgets and depth caps by intent. Cache the obvious, and let complex queries buy latency with stricter evidence requirements. It’s not fair; it is predictable.

What breaks first when we scale? Ingestion and enrichment queues. Watch classification lag and embedding version skew. Retrieval issues are often symptoms of upstream drift.

Do we need human review? Not for every answer, but for policy changes and tricky domains. Use sample audits tied to document freshness and high-risk classifications, not blanket approvals.

Can we rely on one index? Not for long. Split by tenancy, sensitivity, and sometimes by embedding version. A single index invites conflicts between correctness and availability.

How do we measure success without gaming metrics? Track incident rate and mean time to correctness after a data change. Token counts and accuracy scores help, but recovery behavior tells you if the pipeline is operationally sound.

Operational responsibility shifts from model cleverness to data contracts

Given how things behave today, this is what quietly changes next: teams stop treating retrieval as a model accessory and start treating it as a governed data interface. The steady work is not new tricks; it’s tightening identifiers, policies, and lineage so the model can safely assemble answers under pressure.

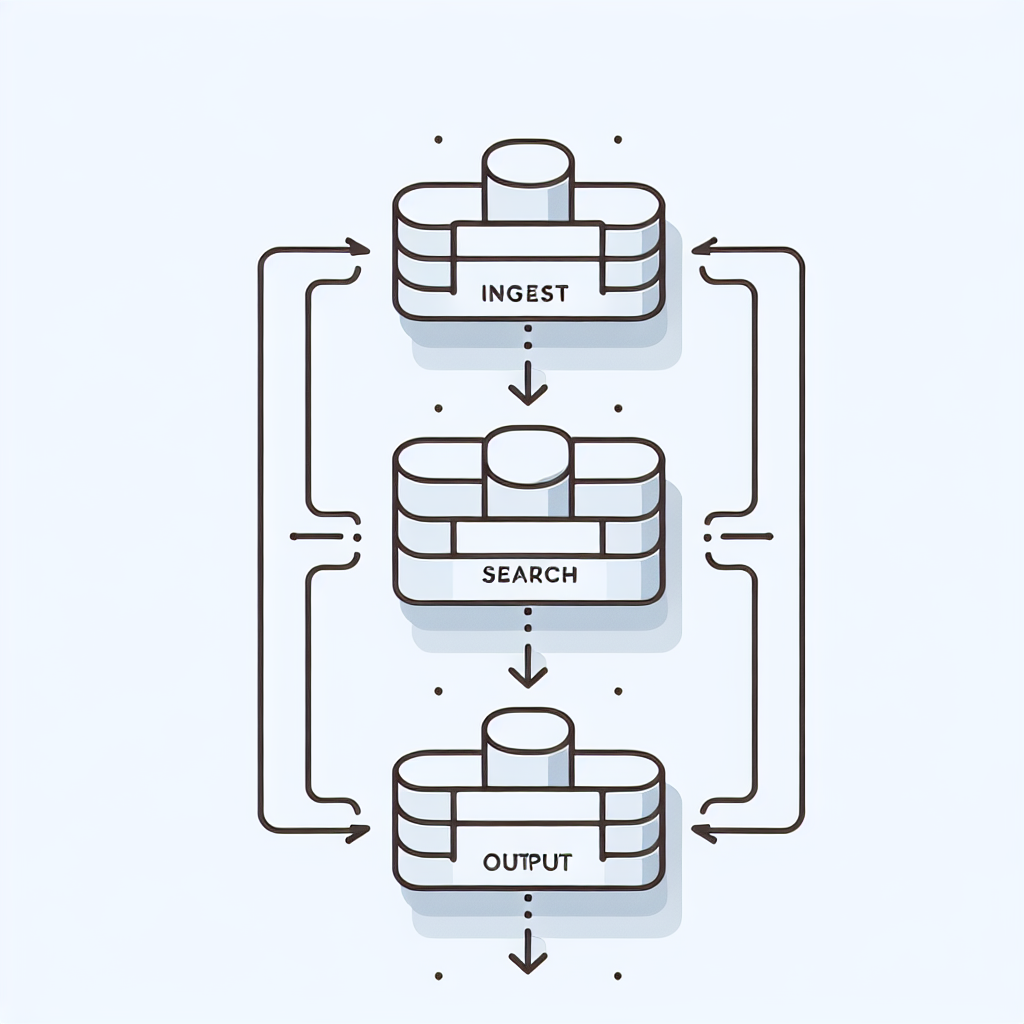

Raw docs → CATALOG → VECTORS → RETRIEVAL → ANSWERS