Agentic AI stops being theoretical as soon as you need a system to navigate messy inputs, make decisions, and move work forward without waiting for a human every few steps. The future of Agentic AI is shaped by budgets, permissions, latencies, and the unglamorous parts of production. That future is not clean, but it's coming into focus.

Executive pressure: autonomy becomes a cost and risk lever

Agentic AI is not a silver bullet; it’s the lever we reach for when complexity and handoffs turn small tasks into slow, expensive projects. It becomes unavoidable the moment coordination overtakes computation as the dominant cost driver. That’s when autonomy earns its keep.

In practice, autonomy collides with governance. You don’t get agents unless you accept new kinds of failures: unbounded loops, permission misfires, and action cascades that wake up teams at 2 a.m. The attraction is not speed; it’s reducing the number of times work stalls waiting for context or approval.

The future of Agentic AI matters because ownership shifts. Once an agent touches production systems, your policies and observability become runtime contracts, not dashboards. The conversation moves from model accuracy to blast radius, rollbacks, and cost envelopes.

We build agents because real systems held together by manual glue are brittle. If you’re seeing queues grow, audits pile up, and backlogs stall on missing context, Agentic AI becomes a requirement, not an experiment. That’s where the trade-offs start to surface.

Introduction: autonomy shows up when human handoffs stall delivery

The friction shows up in routine operations: a workflow splits across services, each requiring a ticket, a check, and a manual nudge. Something small, such as updating a customer record, reconciling a shipment, or triggering a fraud review, drags for days. This is not because the logic is complex but because the glue fails: people are busy, states drift, and policies aren’t encoded in code. We added more dashboards and got more red lights.

We tried to script the work. The scripts broke on the third edge case. Then the edge cases became the norm. That’s when Agentic AI enters the room. Its future is not about smarter models; it’s about systems that can decide, ask for help, replan, and keep moving without creating new queues. The pressure is operational, not ideological.

Production reality: autonomy meets governance and failure budgets

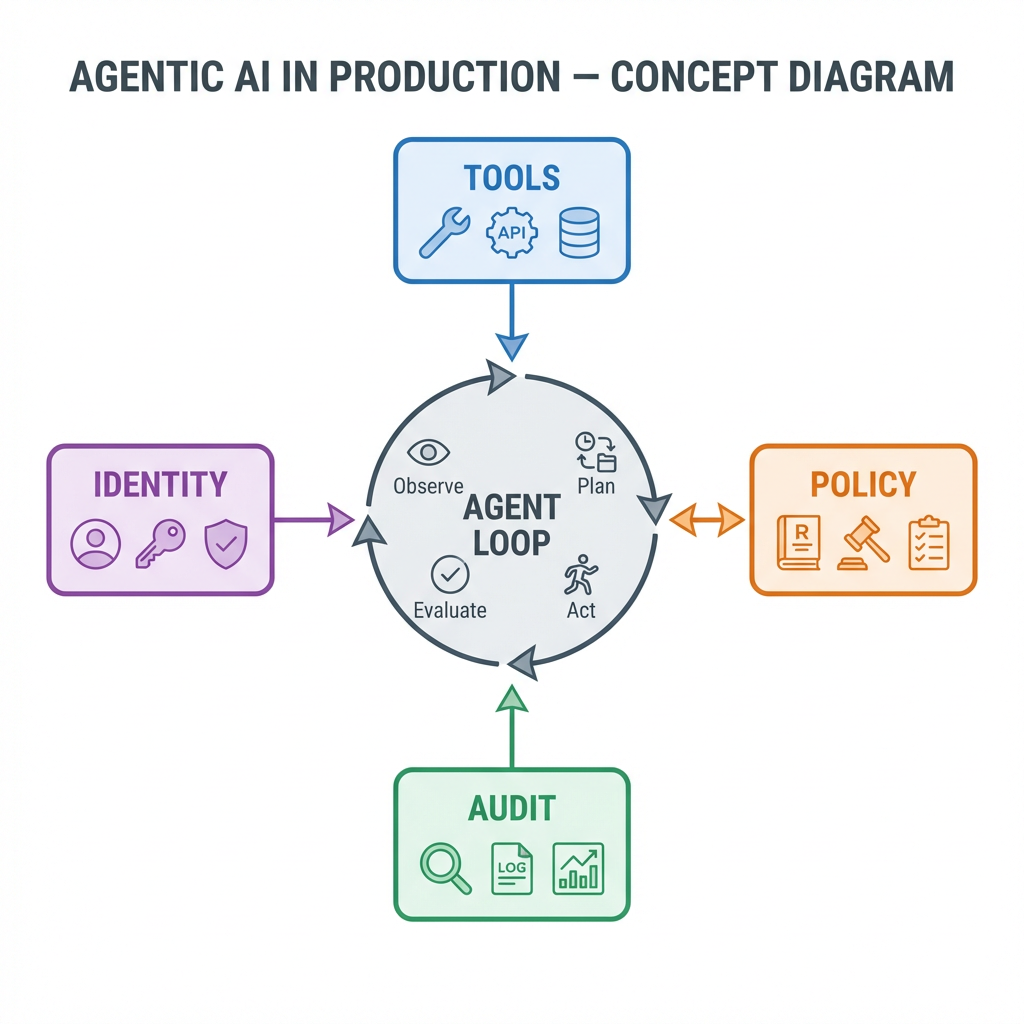

In production, an agent is a decision loop wrapped in guardrails. It has access to a constrained set of tools, operates under identity and policy, tracks state in a log, and must justify each action. It’s more like a cautious intern with a strict checklist than a genius solving everything. That’s the only way this works in real environments.
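The loop described above can be sketched in a few lines. This is a minimal illustration, not a reference design. It assumes a planner callable that proposes one step at a time and returns `None` when the task is done. All names are hypothetical: `Step`, `run_agent`, `plan_next`, and `max_steps`. The guardrails are the ones named in the text: a constrained tool set, a mandatory rationale per action, a log of every step, and escalation instead of pushing forward.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Set

@dataclass
class Step:
    tool: str
    args: dict
    rationale: str  # every action must carry a justification

@dataclass
class LoopResult:
    status: str  # "done" or "escalated"
    log: List[dict] = field(default_factory=list)

def run_agent(plan_next: Callable[[List[dict]], Optional[Step]],
              tools: Dict[str, Callable[..., object]],
              allowed: Set[str],
              max_steps: int = 5) -> LoopResult:
    """Guarded decision loop: plan, check, act, log; escalate on any violation."""
    result = LoopResult(status="done")
    for _ in range(max_steps):
        step = plan_next(result.log)
        if step is None:  # planner reports the task is complete
            return result
        if not step.rationale:  # unjustified actions are never executed
            result.status = "escalated"
            result.log.append({"event": "missing_rationale", "tool": step.tool})
            return result
        if step.tool not in allowed or step.tool not in tools:
            result.status = "escalated"  # outside the constrained tool set
            result.log.append({"event": "tool_denied", "tool": step.tool})
            return result
        output = tools[step.tool](**step.args)
        result.log.append({"event": "action", "tool": step.tool,
                           "rationale": step.rationale, "output": output})
    result.status = "escalated"  # step budget exhausted: ask, don't push on
    result.log.append({"event": "step_budget_exhausted"})
    return result
```

A planner that keeps proposing the same step ends in `"escalated"` after `max_steps` actions rather than looping forever. That bounded exit is the property the intern-with-a-checklist framing relies on.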

The boundaries are non-negotiable: explicit scopes for credentials, rate limits that enforce a fixed failure budget, timeouts to prevent infinite replanning, and kill switches for human override. Agents get a quota of actions per unit of time and a ceiling on downstream cost. If they hit a wall, they must ask—through a structured escalation channel—rather than brute-force their way forward.
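Several of these boundaries fit in one small guard object. The sketch below combines three of them: a sliding-window action quota, a cumulative downstream cost ceiling, and a kill switch. It returns a reason string when the agent must escalate rather than act. The class name, parameters, and reason strings are hypothetical. The injectable `clock` exists only so the window can be tested deterministically.

```python
import time
from collections import deque
from typing import Callable, Deque, Optional

class Guardrails:
    """Per-agent action quota, cost ceiling, and kill switch (hypothetical API)."""

    def __init__(self, max_actions: int, window_s: float, cost_ceiling: float,
                 clock: Callable[[], float] = time.monotonic):
        self.max_actions = max_actions
        self.window_s = window_s
        self.cost_ceiling = cost_ceiling
        self.clock = clock
        self.recent: Deque[float] = deque()  # timestamps of permitted actions
        self.spent = 0.0                     # cumulative downstream cost
        self.killed = False

    def kill(self) -> None:
        """Human override: block every further action."""
        self.killed = True

    def check(self, est_cost: float) -> Optional[str]:
        """Return None if the action may proceed, else a reason to escalate."""
        if self.killed:
            return "kill_switch"
        now = self.clock()
        while self.recent and now - self.recent[0] >= self.window_s:
            self.recent.popleft()  # forget actions that left the window
        if len(self.recent) >= self.max_actions:
            return "quota_exceeded"
        if self.spent + est_cost > self.cost_ceiling:
            return "cost_ceiling"
        self.recent.append(now)
        self.spent += est_cost
        return None
```

A non-`None` return is the cue to open a structured escalation instead of retrying.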

Failure modes are predictable: tool misbinding when a schema changed without updating the adapter; prompt injection or data poisoning seeping through a new integration; agents chasing stale state because the event bus lagged; cost spikes from repeated retries under jitter; and “escalation storms” where multiple agents ask for help simultaneously, overwhelming the same human queue they were meant to relieve.
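Escalation storms are easier to contain when escalations are keyed by cause rather than by agent. This is a minimal sketch under that assumption; the key format and the `max_open` cap are hypothetical. Duplicate reports from several agents coalesce into one open item. Once the queue is saturated, new causes are shed, and the agent is expected to pause rather than page again.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class Escalation:
    key: str  # e.g. "schema_mismatch:transactions" (hypothetical format)
    agents: List[str] = field(default_factory=list)

class EscalationQueue:
    """Coalesces duplicate escalations and caps open items for the human queue."""

    def __init__(self, max_open: int):
        self.max_open = max_open
        self.open: Dict[str, Escalation] = {}
        self.shed = 0  # escalations refused because the queue was saturated

    def submit(self, key: str, agent_id: str) -> str:
        if key in self.open:
            esc = self.open[key]
            if agent_id not in esc.agents:
                esc.agents.append(agent_id)  # same cause: join, don't re-page
            return "coalesced"
        if len(self.open) >= self.max_open:
            self.shed += 1
            return "shed"  # caller should pause and wait, not retry the page
        self.open[key] = Escalation(key, [agent_id])
        return "opened"

    def resolve(self, key: str) -> List[str]:
        """Close an item and return the agents waiting on it."""
        esc = self.open.pop(key, None)
        return esc.agents if esc else []
```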

State management becomes a first-class concern. Durable, append-only logs are preferable to mutable stores, because you will replay what happened. Memory is more than embeddings; it’s action history, decision rationales, and permission checks, all audit-ready. Shadow mode—where the agent simulates actions and writes proposed changes—is not a nice-to-have; it’s the only safe way to introduce autonomy without burning weekends.
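An append-only log with replay and a shadow-mode flag can be sketched in plain Python. This assumes an in-memory list as a stand-in for a durable store; `ActionLog`, `apply_action`, and `replay` are hypothetical names. Shadow-mode actions are recorded as proposals and never touch state. Replay rebuilds state from applied entries only, so the log itself becomes the audit trail.

```python
import json
from typing import Dict, List

class ActionLog:
    """Append-only log; entries are serialized so they can't be edited in place."""

    def __init__(self) -> None:
        self._entries: List[str] = []  # stand-in for a durable, append-only store

    def append(self, entry: dict) -> int:
        self._entries.append(json.dumps(entry, sort_keys=True))
        return len(self._entries) - 1

    def entries(self) -> List[dict]:
        return [json.loads(e) for e in self._entries]

def apply_action(state: Dict[str, str], log: ActionLog, key: str, value: str,
                 rationale: str, shadow: bool) -> None:
    """Log every action with its rationale; in shadow mode, only propose it."""
    log.append({"key": key, "value": value, "rationale": rationale,
                "mode": "proposed" if shadow else "applied"})
    if not shadow:
        state[key] = value

def replay(log: ActionLog) -> Dict[str, str]:
    """Reconstruct state from applied entries, ignoring shadow proposals."""
    state: Dict[str, str] = {}
    for e in log.entries():
        if e["mode"] == "applied":
            state[e["key"]] = e["value"]
    return state
```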

Where autonomy actually moves through the stack: orchestration, handoffs, and rollback pressure

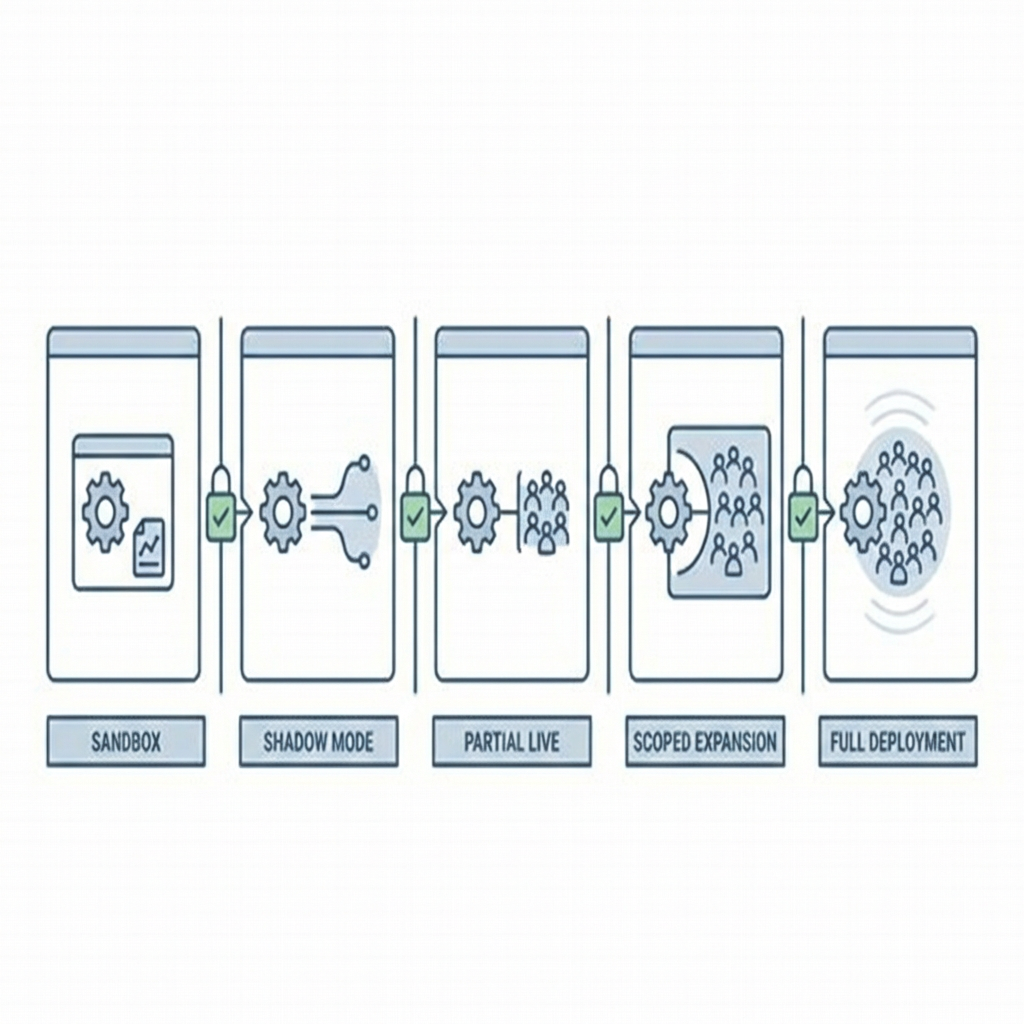

Deployment is a sequence, not a switch. Agents begin with a narrow tool set in a sandbox, then progress to shadow mode in staging, then partial live in production with tight limits, and only later generalize beyond the starting slice. Each step forces a new decision: what actions are allowed, what evidence is logged, where rollbacks land, and who signs off on widening the scope.
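The staged sequence can be encoded so that widening scope is an explicit, gated transition while narrowing it is free. The stage names follow the text. The per-stage tool sets and the `promote`/`demote` helpers are hypothetical illustrations, not a prescribed policy.

```python
from enum import IntEnum
from typing import Dict, Set

class Stage(IntEnum):
    SANDBOX = 0
    SHADOW = 1
    PARTIAL_LIVE = 2
    GENERAL = 3

# Hypothetical per-stage action spaces; real sets depend on your risk budget.
ALLOWED_TOOLS: Dict[Stage, Set[str]] = {
    Stage.SANDBOX: {"read_record"},
    Stage.SHADOW: {"read_record", "propose_update"},
    Stage.PARTIAL_LIVE: {"read_record", "propose_update", "update_record"},
    Stage.GENERAL: {"read_record", "propose_update", "update_record", "notify_customer"},
}

def promote(current: Stage, signed_off_by: str, audit_clean: bool) -> Stage:
    """Widen scope one stage at a time, only with a named approver and clean audit."""
    if current == Stage.GENERAL:
        raise ValueError("already at the widest scope")
    if not signed_off_by:
        raise PermissionError("widening scope requires a named approver")
    if not audit_clean:
        raise RuntimeError("audit trail not stable; stay at the current stage")
    return Stage(current + 1)

def demote(current: Stage) -> Stage:
    """Cheap exit: step back one stage with no approval required."""
    return Stage(max(current - 1, Stage.SANDBOX))
```

The asymmetry is deliberate. Promotion demands evidence and a name, while demotion is always available, which keeps every stage a cheap exit.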

Identity friction forces redesign of the action space

Identity and permissions dictate the action space. If a tool requires human SSO and the agent can’t hold that identity, you don’t give it the tool; you introduce an API proxy with scoped tokens and audit fields. Teams often revisit the first design once they realize that implicit permissions in a CLI don’t translate to policy engines. The work shifts to building adapters that enforce least privilege in code.
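The proxy pattern above can be sketched as a thin wrapper that checks a scoped, expiring token before forwarding a call and audits every attempt, allowed or not. Scope strings like `crm:read`, and the `ScopedToken` and `ToolProxy` names, are hypothetical. A real deployment would verify signed tokens issued by your identity provider rather than trust an in-process object.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List

@dataclass(frozen=True)
class ScopedToken:
    agent_id: str
    scopes: FrozenSet[str]  # e.g. {"crm:read"} (hypothetical scope names)
    expires_at: float

class ToolProxy:
    """Stands in front of a human-SSO tool and enforces least privilege in code."""

    def __init__(self, backend: Dict[str, Callable[..., object]],
                 required_scope: Dict[str, str],
                 clock: Callable[[], float] = time.time):
        self.backend = backend
        self.required_scope = required_scope  # operation -> scope it needs
        self.clock = clock
        self.audit: List[dict] = []

    def call(self, token: ScopedToken, op: str, **kwargs) -> object:
        scope = self.required_scope.get(op)  # unknown operations have no scope
        allowed = (scope is not None and scope in token.scopes
                   and self.clock() < token.expires_at)
        self.audit.append({"agent": token.agent_id, "op": op,
                           "scope": scope, "allowed": allowed})
        if not allowed:
            raise PermissionError(f"{token.agent_id} may not call {op}")
        return self.backend[op](**kwargs)
```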

Data contracts drive the pace of adoption

Agents fail if schemas drift or fields contain surprises. Onboarding a single tool takes longer than expected because you need contracts with explicit defaults, null behavior, and versioning. The future trend is clear: agents will force cleaner contracts, not because they prefer structure, but because you can’t debug autonomy without reproducible inputs.
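A data contract with explicit defaults, null behavior, and a version pin can be checked before the agent ever sees a record. This is a minimal sketch for a hypothetical shipment event. The field names, version number, and `validate` helper are assumptions. Any mismatch is reported as an error rather than silently coerced, which keeps inputs reproducible.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Tuple

@dataclass(frozen=True)
class FieldSpec:
    type_: type
    nullable: bool = False
    default: Any = None  # applied only when the field is absent

# Hypothetical contract for a shipment event, pinned to version 2.
CONTRACT_VERSION = 2
SHIPMENT_V2: Dict[str, FieldSpec] = {
    "shipment_id": FieldSpec(str),
    "quantity": FieldSpec(int, default=0),
    "carrier": FieldSpec(str, nullable=True),
}

def validate(record: Dict[str, Any]) -> Tuple[Dict[str, Any], List[str]]:
    """Return (normalized record, errors); report problems instead of guessing."""
    errors: List[str] = []
    if record.get("_version") != CONTRACT_VERSION:
        errors.append(f"version {record.get('_version')!r} != {CONTRACT_VERSION}")
    out: Dict[str, Any] = {}
    for name, spec in SHIPMENT_V2.items():
        if name not in record:
            if spec.default is None and not spec.nullable:
                errors.append(f"missing required field {name}")
            out[name] = spec.default
            continue
        value = record[name]
        if value is None:
            if not spec.nullable:
                errors.append(f"{name} is null but not nullable")
            out[name] = None
        elif not isinstance(value, spec.type_):
            errors.append(f"{name}: expected {spec.type_.__name__}")
        else:
            out[name] = value
    unknown = set(record) - set(SHIPMENT_V2) - {"_version"}
    errors.extend(f"unexpected field {u}" for u in sorted(unknown))
    return out, errors
```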

Observability becomes narrative, not metrics alone

Latencies and error rates matter, but you need the narrative: which plan the agent took, why it pivoted, which tools were considered, and what confidence it had at each step. Handoffs to humans include the agent’s reasoning and the state snapshot. If your system only surfaces a status code, you’ll be blind during incident response. The debugging target is the decision loop, not just the endpoint.
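A decision trace can carry the narrative directly. Each step records the plan, the alternatives considered, the choice, the confidence, and the reason. The trace then renders as text an on-call engineer can read. The structure and field names below are a hypothetical sketch, and the "pivot" marker simply flags where the plan changed from one step to the next.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class DecisionStep:
    plan: str
    considered: List[str]
    chosen: str
    confidence: float
    why: str

@dataclass
class DecisionTrace:
    correlation_id: str  # links the trace to tickets and action logs
    steps: List[DecisionStep] = field(default_factory=list)

    def record(self, **kwargs) -> None:
        self.steps.append(DecisionStep(**kwargs))

    def narrative(self) -> str:
        """Render a human-readable account for handoffs and incident response."""
        lines = [f"trace {self.correlation_id}"]
        for i, s in enumerate(self.steps, 1):
            pivot = " (pivot)" if i > 1 and s.plan != self.steps[i - 2].plan else ""
            rejected = [c for c in s.considered if c != s.chosen]
            lines.append(f"{i}. plan={s.plan}{pivot}: chose {s.chosen} over {rejected} "
                         f"at confidence {s.confidence:.2f} because {s.why}")
        return "\n".join(lines)
```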

Rollback paths must be cheaper than pushing forward

When an agent proposes a multi-step change—say, updating a record, notifying a customer, and triggering a downstream adjustment—you need atomics or compensating actions. If your rollback costs more than the forward path, incidents become political. Teams end up revisiting database transaction boundaries, event deduplication, and idempotency not for elegance, but because agents amplify the expense of confusion.
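Compensating actions are the saga pattern in miniature. Each forward step is paired with an undo, and a failure unwinds the completed steps in reverse. The sketch below is a minimal in-process illustration with a hypothetical helper name. A production version would persist progress so a crashed rollback can resume. Some steps, such as a customer notification, can only be compensated, for example by sending a correction, not truly undone.

```python
from typing import Callable, List, Tuple

Action = Tuple[str, Callable[[], None], Callable[[], None]]  # (name, do, undo)

def run_with_compensation(steps: List[Action]) -> List[str]:
    """Run steps in order; on failure, undo completed steps in reverse order.

    Undo callables should be idempotent, since a rollback may itself be retried.
    """
    done: List[Action] = []
    log: List[str] = []
    for name, do, undo in steps:
        try:
            do()
            done.append((name, do, undo))
            log.append(f"did {name}")
        except Exception as exc:  # any failure triggers the rollback path
            log.append(f"failed {name}: {exc}")
            for prev_name, _, prev_undo in reversed(done):
                prev_undo()
                log.append(f"undid {prev_name}")
            break
    return log
```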

Tools and technologies under pressure, not preference

Tool choices aren’t fashion; they’re answers to constraints. Policy engines appear because you need declarative approval rules that ops can read and change without redeploying. Vector memory stores show up only when the agent benefits from flexible recall; many tasks require crisp, structured state instead. Event-sourced logs beat ad hoc auditing because you want to replay decisions and reconstruct exact sequences.
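To show why declarative rules help, here is a toy evaluator where the rules are plain data and the engine is a few lines of code. It is not a real policy engine such as Open Policy Agent. The rule fields (`action`, `max_amount`, `decision`) are hypothetical. The point is only that ops can change the rules, for instance in a reviewed config file, without redeploying the agent. The first matching rule wins, and anything unmatched is denied.

```python
from typing import Any, Dict, List

# Rules as data; in practice these might load from a reviewed config file.
RULES: List[Dict[str, Any]] = [
    {"action": "refund", "max_amount": 100, "decision": "allow"},
    {"action": "refund", "max_amount": 1000, "decision": "require_approval"},
    {"action": "read_account", "decision": "allow"},
]

def evaluate(rules: List[Dict[str, Any]], request: Dict[str, Any]) -> str:
    """First matching rule wins; default deny."""
    for rule in rules:
        if rule["action"] != request["action"]:
            continue
        limit = rule.get("max_amount")
        if limit is not None and request.get("amount", 0) > limit:
            continue  # over this rule's limit; try the next one
        return rule["decision"]
    return "deny"
```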

Runtime sandboxes are favored when the action set includes anything risky—file manipulation, external calls, or schema migrations. Adapters outnumber native integrations because most tools weren’t designed with agent identities in mind. People get hung up on model choice, but the decisive gains often come from a well-built tool layer and strict cost governance: per-agent budgets, per-action ceilings, and backpressure on retries.
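Cost governance and retry backpressure combine naturally. The sketch below uses capped exponential backoff with full jitter and charges every attempt against a per-agent envelope with a per-action ceiling. When the envelope runs dry, the caller gets an error telling it to escalate instead of retrying. `CostEnvelope`, `call_with_backpressure`, and the cost units are hypothetical. `sleep` and `rng` are injectable only to make the sketch testable.

```python
import random
import time
from typing import Callable, Optional

class CostEnvelope:
    """Per-agent budget with a per-action ceiling (hypothetical units, e.g. USD)."""

    def __init__(self, budget: float, per_action_ceiling: float):
        self.remaining = budget
        self.per_action_ceiling = per_action_ceiling

    def charge(self, cost: float) -> bool:
        if cost > self.per_action_ceiling or cost > self.remaining:
            return False
        self.remaining -= cost
        return True

def call_with_backpressure(op: Callable[[], object], envelope: CostEnvelope,
                           cost_per_try: float, max_tries: int = 4,
                           base_delay: float = 0.5, cap: float = 8.0,
                           sleep: Callable[[float], None] = time.sleep,
                           rng: Optional[random.Random] = None) -> object:
    """Retry with capped exponential backoff and full jitter; every try is charged."""
    if max_tries < 1:
        raise ValueError("max_tries must be at least 1")
    rng = rng or random.Random()
    for attempt in range(max_tries):
        if not envelope.charge(cost_per_try):
            raise RuntimeError("cost envelope exhausted; escalate instead of retrying")
        try:
            return op()
        except Exception:
            if attempt == max_tries - 1:
                raise  # out of tries: surface the last failure
            sleep(rng.uniform(0, min(cap, base_delay * 2 ** attempt)))
    raise RuntimeError("unreachable")
```

Full jitter spreads retries from many agents across the backoff window, which blunts the synchronized retry spikes the failure-mode list warns about.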

Monitoring stacks expand to include decision traces and policy evaluations. You’ll tag actions with correlation IDs, link them to tickets, and enrich logs with context so humans can intervene with minimal friction. Alerting shifts from endpoint failures to abnormal decision patterns—too many replan loops, excessive escalations, or a sudden spike in tool denials.
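Alerting on decision patterns rather than endpoints can start as sliding-window counters per agent and event kind. The thresholds and event names below mirror the examples in the text but are hypothetical values. Real thresholds would come from your own baseline.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, List

# Hypothetical limits: more than this many events per window triggers an alert.
THRESHOLDS = {"replan": 5, "escalation": 3, "tool_denied": 2}

class DecisionPatternMonitor:
    def __init__(self, window_s: float, thresholds: Dict[str, int]):
        self.window_s = window_s
        self.thresholds = thresholds
        self.events: Dict[str, Deque[float]] = defaultdict(deque)

    def observe(self, agent_id: str, kind: str, ts: float) -> List[str]:
        """Record one decision event and return any alert it triggers."""
        q = self.events[f"{agent_id}:{kind}"]
        q.append(ts)
        while q and ts - q[0] > self.window_s:
            q.popleft()  # drop events older than the window
        limit = self.thresholds.get(kind)
        if limit is not None and len(q) > limit:
            return [f"{agent_id}: {len(q)} {kind} events in {self.window_s:.0f}s"]
        return []
```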

Examples where autonomy helps, and where it bites

Fraud review in payments: an agent gathers evidence across accounts, explains why the pattern is suspicious, and proposes a limited hold. Trade-off: if tool access is broad, the agent can touch sensitive data; tighten scope and it may miss subtle links. Failure case: schema change in the transaction feed leads to false positives; the agent escalates too often, consuming analyst time. Resolution: add a preflight data contract check and a quota on repeated alerts.

Customer support triage: an agent looks up the customer’s recent interactions, checks known issues, and drafts a response with links and next steps. Trade-off: autonomy speeds first response, but policy exceptions pile up. Failure case: the agent confidently suggests steps that no longer apply after a backend upgrade. Resolution: tie the agent’s playbooks to service versioning and enforce a revalidation step when versions roll.
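Tying playbooks to service versions can be as simple as recording which versions a playbook was validated against. The agent then refuses to use it once any of them rolls. The `Playbook` shape, the `draft_reply` helper, and the service and version strings are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class Playbook:
    name: str
    validated_against: Dict[str, str]  # service -> version it was last checked on
    steps: List[str]

def usable(playbook: Playbook, deployed: Dict[str, str]) -> bool:
    """Usable only if every service the playbook depends on is unchanged."""
    return all(deployed.get(svc) == ver
               for svc, ver in playbook.validated_against.items())

def draft_reply(playbook: Playbook, deployed: Dict[str, str]) -> str:
    """Draft from the playbook, or hold for revalidation if versions rolled."""
    if not usable(playbook, deployed):
        stale = sorted(svc for svc, ver in playbook.validated_against.items()
                       if deployed.get(svc) != ver)
        return f"HOLD: revalidate {playbook.name} (changed: {', '.join(stale)})"
    return "\n".join(playbook.steps)
```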

Data pipeline remediation: the agent detects a failing job, analyzes logs, suggests a fix, and runs a safe test in isolation. Trade-off: remediation saves time, but a bad fix can propagate noise. Failure case: the agent resets a job repeatedly, causing downstream delays. Resolution: throttle remediation attempts, require a human approval after N retries, and log diffs to keep the blast radius small.
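The resolution above combines three controls. It throttles attempts per job, requires human approval after N automatic attempts, and keeps a diff of every change. This is a minimal sketch of those controls. `RemediationGate`, its defaults, and the job name are hypothetical. Diffs use the standard library's `difflib.unified_diff`.

```python
import difflib
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class RemediationGate:
    max_auto_attempts: int = 2     # hypothetical N before a human must approve
    min_interval_s: float = 300.0  # throttle between attempts on the same job
    attempts: Dict[str, int] = field(default_factory=dict)
    last_at: Dict[str, float] = field(default_factory=dict)
    diff_log: List[str] = field(default_factory=list)

    def decide(self, job: str, now: float) -> str:
        """Return 'run', 'wait', or 'needs_approval' for the next fix attempt."""
        if self.attempts.get(job, 0) >= self.max_auto_attempts:
            return "needs_approval"
        if now - self.last_at.get(job, float("-inf")) < self.min_interval_s:
            return "wait"
        self.attempts[job] = self.attempts.get(job, 0) + 1
        self.last_at[job] = now
        return "run"

    def record_fix(self, job: str, old_cfg: str, new_cfg: str) -> None:
        """Keep a unified diff of each change so the blast radius stays reviewable."""
        diff = difflib.unified_diff(old_cfg.splitlines(), new_cfg.splitlines(),
                                    fromfile=f"{job}:before", tofile=f"{job}:after",
                                    lineterm="")
        self.diff_log.append("\n".join(diff))
```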

Comparisons that influence real decisions, not slide decks

| Decision Surface | Newcomer Impact | Experienced Practitioner Impact |
|---|---|---|
| Tooling scope | Tends to over-permission for demos; risk grows fast. | Starts narrow; expands only after audit patterns stabilize. |
| Risk controls | Focuses on model filters; misses policy enforcement gaps. | Prioritizes identity, quotas, and compensating actions. |
| Observability | Graphs and error counters; poor decision traces. | Human-readable narratives tied to action logs. |
| Deployment strategy | Single leap to prod; surprises lead to rollback pain. | Shadow, partial, limited scope; cheap exits at each stage. |
| Cost governance | Budgets per environment; misses per-agent spikes. | Per-agent envelopes, per-action ceilings, backpressure. |
| Change management | Tool updates break prompts silently. | Contracts, version tags, and adapter tests gate releases. |

FAQ under operational heat

How do we prevent agents from spiraling into costly loops? Set hard ceilings on actions, retries, and downstream cost. Log decision branches and declare a fallback path that escalates with context instead of retrying blind.

What’s the first boundary to enforce? Identity. Agents must operate with scoped credentials and explicit policies. Most incidents trace back to fuzzy permissions, not clever exploits.

Do we need specialized memory stores? Only if the task benefits from flexible recall. Many production use cases prefer structured state plus event-sourced logs. Pick based on the decision loop’s needs, not hype.

How do we introduce agents without risk spikes? Shadow mode with proposed actions, then partial live with tight quotas. Expand only after the audit trail shows stable behavior and cheap rollbacks.

What changes in the on-call rotation? Pages shift from broken endpoints to ambiguous agent behavior. Provide traces, rationale, and quick disables. On-call needs authority to shrink scope immediately.

Ownership shifts from models to policies and budgets

Given how things behave today, this is what quietly changes next: the center of gravity moves from model quality to action design, policy governance, and cost envelopes. Teams that ship agents own contracts, not just code, and they optimize for clean exits over perfect automation.

The likely progression: playbooks, then machine-readable policies, then constrained agents, then multi-agent ecosystems.