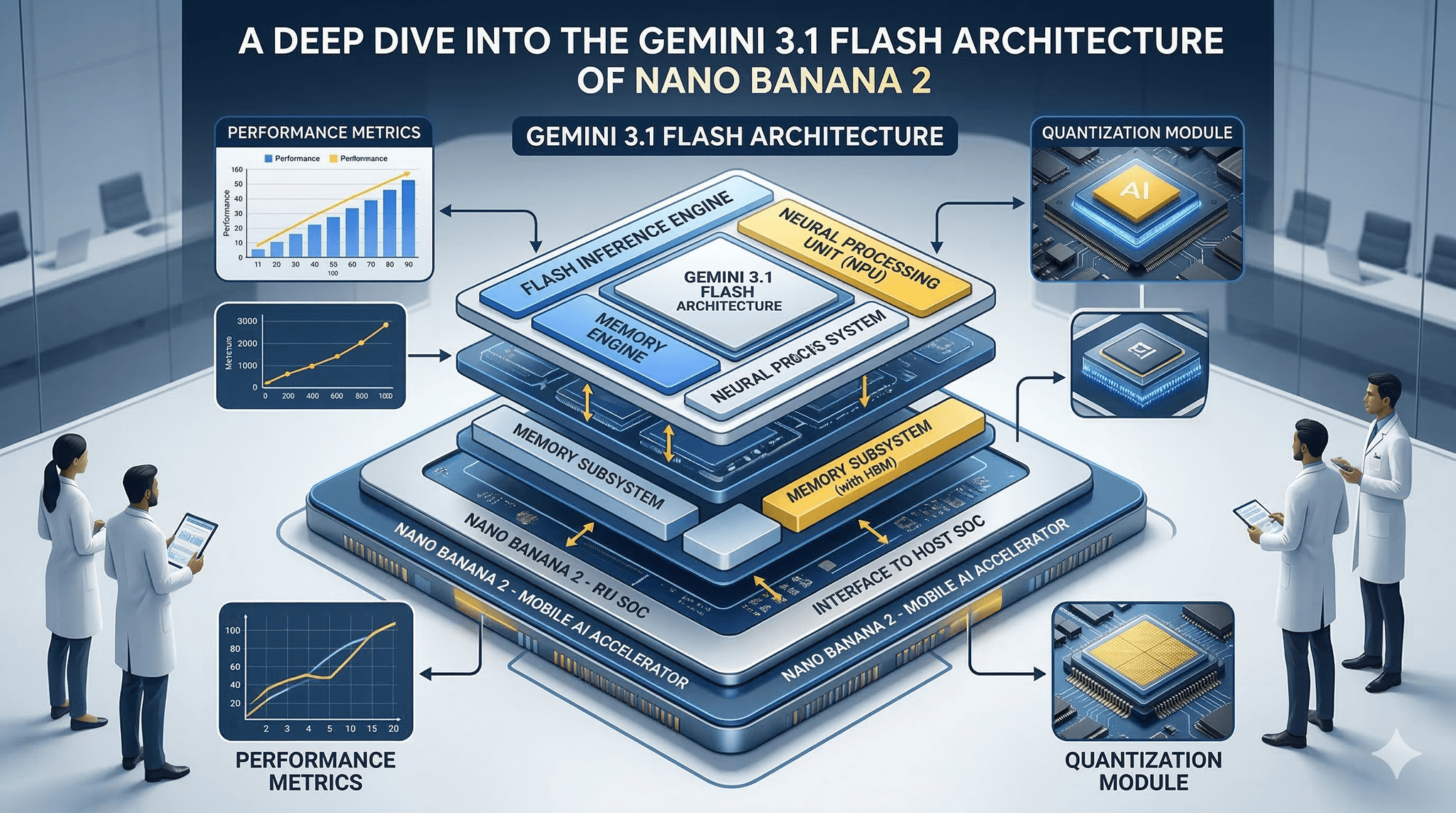

If you have to choose between the Gemini 3.1 Flash Image architecture on Nano Banana 2 and the older layout on Nano Banana Pro, this is where the differences stop sounding theoretical.

Executive Summary

Field devices miss deadlines, lose power mid-write, and still need to come back clean. Gemini 3.1 on Nano Banana 2 leans into that reality with layered images and tighter commit paths. Nano Banana Pro keeps a simpler footprint but shows stress under rolling updates.

This piece unpacks how the Gemini 3.1 Flash Image architecture behaves when things go wrong, and how its ergonomics differ from those of Nano Banana Pro.

You will see where performance is won or lost, how rollback actually triggers, and why the image layout impacts cold boot and serviceability.

- Understand image layering, boot slot strategy, and commit windows
- Know the failure patterns that appear first in the field
- Map the rollout flow from build to atomic swap
- Clarify Gemini 3.1 Flash Image architecture vs Nano Banana Pro trade-offs

Introduction

You have a patch that must ship today. Power in the field is noisy. Storage is already tired. You can’t afford a recovery storm tomorrow morning. That is the setting where flash image architecture stops being a diagram and becomes a risk budget.

This deep dive into the Gemini 3.1 Flash architecture of Nano Banana 2 is not a catalog of features. It is a look at how layered images, slotting, and verification change day-to-day operations. The comparison point is familiar: Gemini 3.1 Flash Image architecture vs Nano Banana Pro.

The comparison is getting attention because update frequency is rising while tolerance for downtime falls. It matters because rollbacks and partial writes are now routine, not exceptions.

Where Gemini 3.1 Holds Under Pressure, and Where It Cracks

On Nano Banana 2, Gemini 3.1 splits the image into components with a clear boot slot strategy. Verification happens earlier and with better isolation. In practice, devices that used to bounce between states now either boot cleanly or stay in a predictable recovery lane.

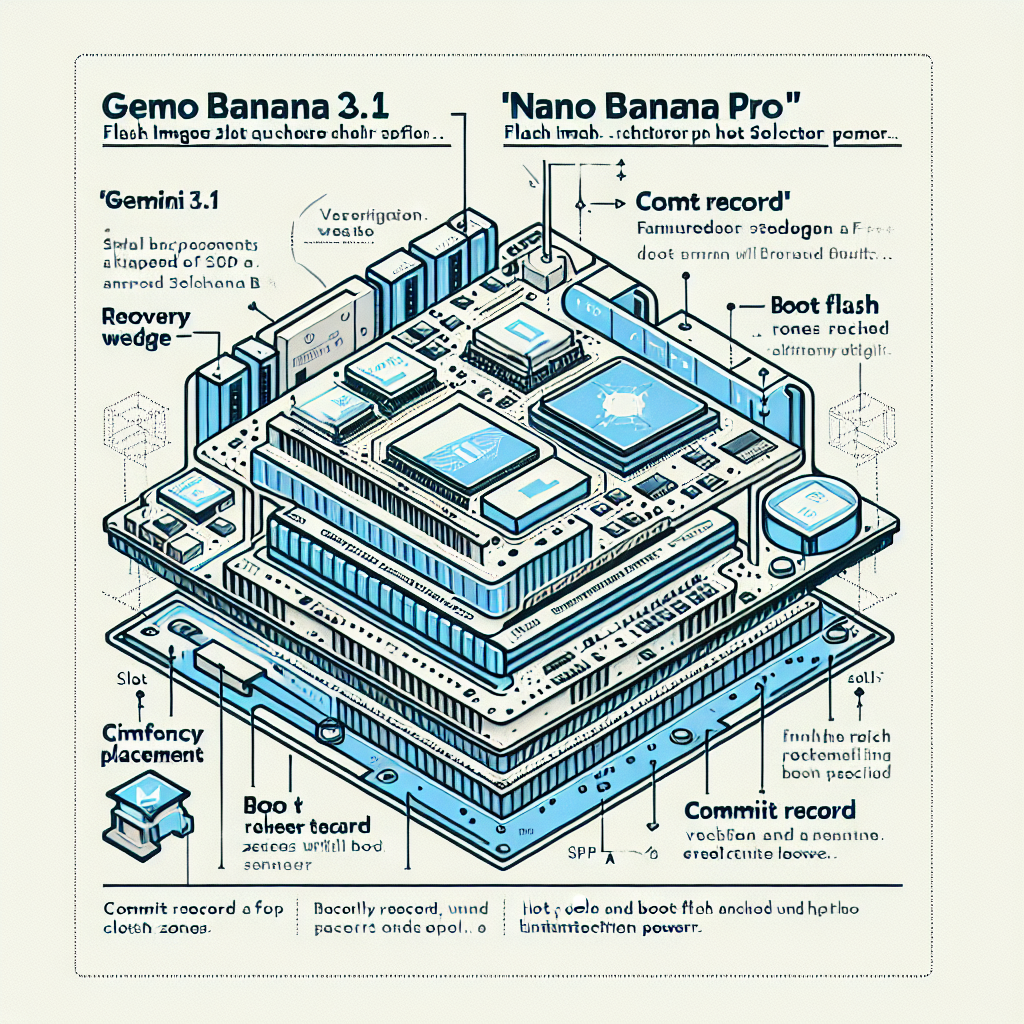

Layering and boot paths for Nano Banana 2 vs Pro

The boundary shows up at write amplification. Layering reduces risk during updates but can push extra writes into hot zones. If background maintenance competes with staged writes, you get jitter right where the boot counter is being evaluated.
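
Write amplification is usually expressed as the ratio of bytes physically written to flash to bytes the host asked to write. A minimal Python sketch of that ratio follows; the function name and the numbers in the usage note are illustrative assumptions, not measurements from either device.

```python
def write_amplification(flash_bytes_written: int, host_bytes_written: int) -> float:
    """Ratio of physical flash writes to logical host writes (1.0 means no amplification)."""
    if host_bytes_written <= 0:
        raise ValueError("host_bytes_written must be positive")
    return flash_bytes_written / host_bytes_written
```

If staging a 64 MiB layer caused 96 MiB of physical writes, the factor would be 1.5. Tracking it per update makes it easier to tell whether layering, and not background maintenance, is the source of the extra wear.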

Another edge is cold boot time. On Nano Banana 2, pre-commit checks can lengthen the first boot after an update. When the budget is tight, a second warm start may be required before services stabilize. On Nano Banana Pro, the image is flatter, so cold boot is often faster after an update, but the price is weaker rollback semantics.

Failures look different. With Gemini 3.1, most failures collapse into a small set of paths. A device fails verification and stays on the previous slot. Or it flips to recovery because the commit marker never lands. On the Pro layout, failure is more varied. A partial write can pass a shallow check, creating intermittent issues that surface under load instead of at boot.
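
The narrow set of Gemini 3.1 failure paths described above can be sketched as a small decision function. This is a hypothetical model written for illustration: `SlotState`, `BootTarget`, and the field names are assumptions, not part of any documented Gemini 3.1 or Nano Banana interface.

```python
from dataclasses import dataclass
from enum import Enum


class BootTarget(Enum):
    NEW_SLOT = "new_slot"
    PREVIOUS_SLOT = "previous_slot"
    RECOVERY = "recovery"


@dataclass
class SlotState:
    verified: bool       # staged image passed verification (assumed flag)
    commit_marker: bool  # commit marker was found after the update (assumed flag)


def choose_boot_target(state: SlotState) -> BootTarget:
    """Map the update state to one of three predictable outcomes."""
    if not state.verified:
        # Failed verification: stay on the slot that was already running.
        return BootTarget.PREVIOUS_SLOT
    if not state.commit_marker:
        # Image verified but the commit marker never landed: go to recovery.
        return BootTarget.RECOVERY
    return BootTarget.NEW_SLOT
```

The point of the sketch is the shape: every combination of inputs lands in one of three named states, with no "looks valid, fails later" branch.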

Thermal and power sag magnify the gap. Nano Banana 2 tolerates short power loss during staging because the target slot is cold. The commit is small and atomic. On Nano Banana Pro, a similar event can leave a half-updated monolith that needs manual intervention or long recovery scans.

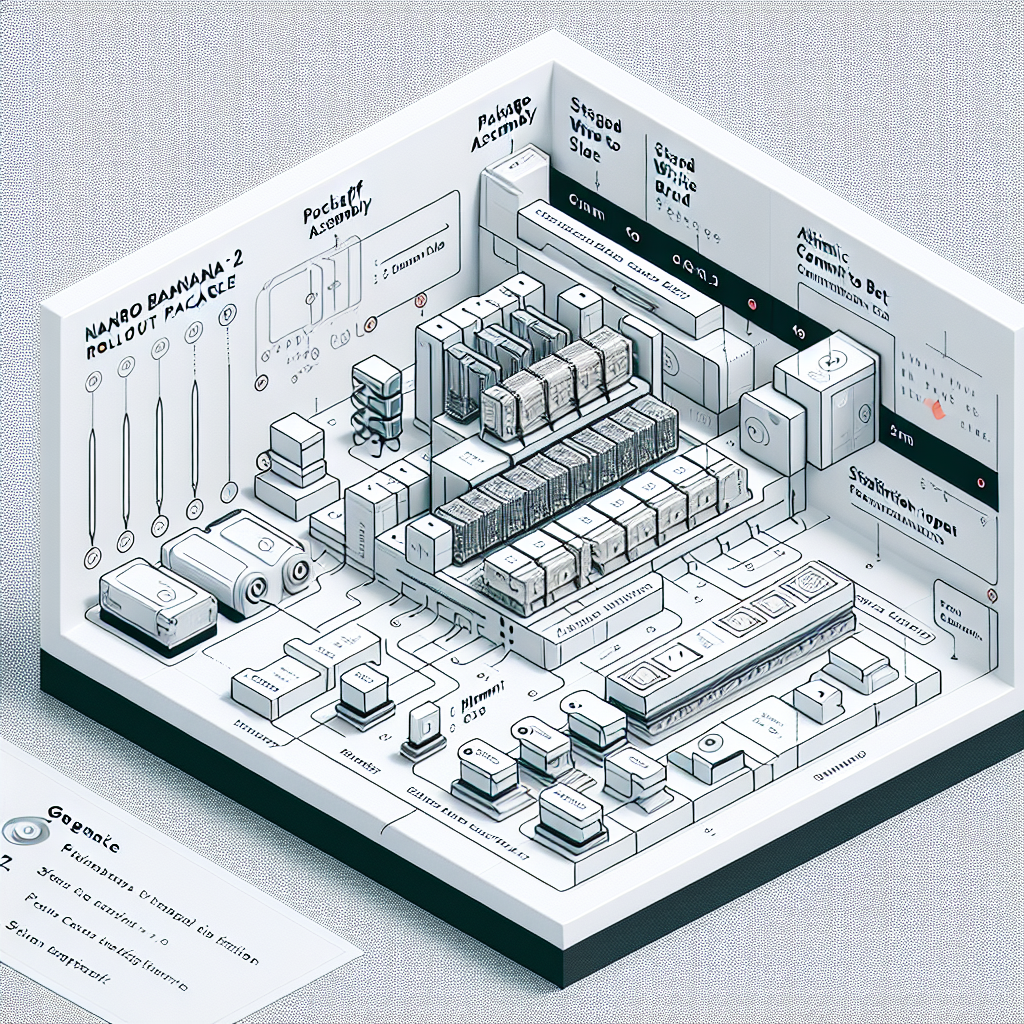

How Rollouts Actually Land on Devices

Update flow from build to atomic swap on Nano Banana 2

The implementation flow starts quietly. You build a layered package that matches the target slot on Nano Banana 2. The preflight validation reads current state and refuses mismatched dependencies. This saves a write on weak storage but can frustrate fast patches.
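
A preflight check of this kind might look like the following sketch. It assumes a hypothetical manifest in which each component pins exact versions of its dependencies; `Component`, `preflight`, and the version strings are invented for illustration and do not reflect a real package format.

```python
from dataclasses import dataclass, field


@dataclass
class Component:
    name: str
    version: str
    requires: dict = field(default_factory=dict)  # dependency name -> exact pinned version


def preflight(installed: dict, package: list) -> list:
    """Return reasons to refuse the write; an empty list means staging may proceed."""
    # Compute the component set the device would have after the update.
    resulting = dict(installed)
    for comp in package:
        resulting[comp.name] = comp.version

    problems = []
    for comp in package:
        for dep, pinned in comp.requires.items():
            found = resulting.get(dep)
            if found != pinned:
                problems.append(
                    f"{comp.name} {comp.version} needs {dep}=={pinned}, found {found}"
                )
    return problems
```

Nothing is written to storage until the list comes back empty, which is the trade described above: one refused patch now instead of one bad write on tired flash.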

Staging writes are serialized around hot zones. If your pipeline pushes too many concurrent updates into the same zone, latency spikes. Devices begin stretching their boot windows after a forced restart, not because the image is heavy, but because housekeeping and verification collide.

The commit pattern matters. On Nano Banana 2, Gemini 3.1 prefers a small commit record over a large switch. If that record lands, rollback is clean. If it does not, the device surfaces on the previous slot with a clear reason code. On Nano Banana Pro, the switch is less isolated. Failures sometimes pass silently and appear later as degraded performance.
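
One common way to make a small commit record safe against torn writes is to append a checksum, so a partial record is detected as "not landed" instead of being trusted. The sketch below shows that general technique in Python; the record layout, the `CMT1` magic value, and the function names are assumptions for illustration, not the actual Gemini 3.1 on-flash format.

```python
import struct
import zlib

MAGIC = b"CMT1"          # hypothetical record tag
_BODY_FORMAT = "<4sBI"   # magic, slot index, image generation (little-endian, no padding)
_BODY_SIZE = struct.calcsize(_BODY_FORMAT)


def encode_commit(slot: int, generation: int) -> bytes:
    """Build a commit record: fixed-size body followed by its CRC32."""
    body = struct.pack(_BODY_FORMAT, MAGIC, slot, generation)
    return body + struct.pack("<I", zlib.crc32(body))


def decode_commit(raw: bytes):
    """Return (slot, generation) if the record is intact, otherwise None."""
    if len(raw) != _BODY_SIZE + 4:
        return None  # truncated or oversized record
    body = raw[:_BODY_SIZE]
    (stored_crc,) = struct.unpack("<I", raw[_BODY_SIZE:])
    if zlib.crc32(body) != stored_crc:
        return None  # torn or corrupted write
    magic, slot, generation = struct.unpack(_BODY_FORMAT, body)
    if magic != MAGIC:
        return None
    return slot, generation
```

A boot path built on this would treat `None` as "commit did not land" and stay on the previous slot, reporting a reason code instead of guessing.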

Scale changes the tone. At small batches, everything looks good. At larger waves, you see version skew. Some devices carry older components that were never updated because earlier checks were too permissive. Gemini 3.1 helps by pinning component compatibility. The friction is real when you must roll back a single component but the Pro layout only supports full image reflash.

Observability becomes part of the architecture. If you do not capture metrics for the first boot after an update, you will blame the wrong stage. We have seen teams chase network timeouts while the real issue was a longer cold boot after validation.
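
To avoid blaming the wrong stage, it helps to record a duration for each stage per device and look at which one dominates. The following is a minimal sketch under that assumption; `RolloutSample` and its field names are hypothetical and would map onto whatever telemetry your fleet already emits.

```python
from dataclasses import dataclass

STAGES = ("preflight_s", "staging_s", "commit_s", "first_boot_s")


@dataclass
class RolloutSample:
    device_id: str
    preflight_s: float
    staging_s: float
    commit_s: float
    first_boot_s: float


def slowest_stage(sample: RolloutSample) -> str:
    """Name of the stage that took longest on this device."""
    return max(STAGES, key=lambda stage: getattr(sample, stage))


def stage_counts(samples) -> dict:
    """Count how many devices were dominated by each stage."""
    counts = {stage: 0 for stage in STAGES}
    for sample in samples:
        counts[slowest_stage(sample)] += 1
    return counts
```

If most devices in a slow wave are dominated by `first_boot_s`, the network is probably not the place to start looking.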

Examples and Applications That Expose the Edges

Patch shipped during unstable power hours

Staging went out in the late afternoon. Power conditions were poor. On Nano Banana 2, most devices staged the cold slot, failed the commit once, then retried and succeeded. On Nano Banana Pro, a subset entered a limbo where the image looked valid but services crashed under load. The Gemini 3.1 path cost an extra start, but recovery was deterministic.

Hotfix with component mismatch

A small library change was pushed without aligning a shared component. Gemini 3.1 blocked the write up front. Teams were annoyed at first. The next day, the same patch landed cleanly in a single wave. On Nano Banana Pro, the hotfix would have flashed, then produced a hard-to-debug inconsistency days later.

Stalled rollout from maintenance pressure

A background cleanup ran during peak rollout. Boot windows stretched. Devices tripped into recovery because verification could not finish in time. After rescheduling maintenance, the same image committed quickly. The architecture was sound; the schedule was not.
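
A simple guard against this pattern is to refuse to start an update while maintenance is running, or when the expected verification time leaves too little headroom in the boot window. The sketch below is one way to express that rule; the parameter names and the default safety margin of 1.5 are assumptions chosen for illustration, not values from either platform.

```python
def should_start_update(
    maintenance_active: bool,
    verify_estimate_s: float,
    boot_window_s: float,
    margin: float = 1.5,
) -> bool:
    """Allow an update only if verification should finish well inside the boot window."""
    if maintenance_active:
        # Background cleanup competes with verification for storage bandwidth.
        return False
    return verify_estimate_s * margin <= boot_window_s
```

With a 40-second boot window, a 20-second verification estimate passes, while a 30-second estimate is deferred because 30 × 1.5 exceeds the window.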

Comparisons That Clarify Decisions

Here is how the approach differs when you are new versus when you have scars from previous cycles.

| Decision Area | Students/Beginners | Experienced Practitioners |
| --- | --- | --- |
| Image layout | Favor a single, simple image for ease of build | Prefer layered images with clear slotting for safer commits |
| Validation | Rely on post-flash checks | Invest in strict preflight to avoid bad writes on weak storage |
| Rollback | Assume rollback rarely triggers | Treat rollback as routine and design fast, visible pathways |
| Scheduling | Push updates when ready | Schedule around maintenance and power volatility |
| Observability | Log after reboot only | Capture preflight, staging, and first-boot signals |

In practical terms, Gemini 3.1 Flash Image architecture vs Nano Banana Pro comes down to how you value predictability. Nano Banana 2 adds complexity up front to keep failure modes narrow. Nano Banana Pro stays simpler until the day a partial write behaves like a success. If your fleet sees frequent small patches, the tighter commit and rollback paths of Gemini 3.1 pay off. For infrequent full refresh cycles with controlled power, the Pro layout may be enough.

FAQ

When does Gemini 3.1 on Nano Banana 2 show a clear advantage?

When you push frequent updates under mixed power conditions and need predictable rollback without full reflashes.

Does layered imaging slow boot every time?

Only on the first boot after an update when verification runs. Warm starts behave normally.

Can I mix component versions safely?

Yes, if the manifest pins compatibility. Unpinned mixes tend to fail preflight rather than degrade at runtime.

What should I monitor during rollout?

Preflight refusal rates, staging duration, commit confirmation, and first-boot stabilization time.

How do I reduce recovery storms?

Throttle background maintenance during updates, and align restarts so verification has a clean window.

Ownership Shifts As Flash Layouts Grow More Capable

With Gemini 3.1 on Nano Banana 2, the line between image design and operations blurs. Layout choices set the tone for rollout risk, not just storage use.

Teams that treat the image as a living system reduce randomness. Those that treat it as a file keep fighting the same ghosts on every release. The architecture will not save a bad schedule, but it gives you levers that Nano Banana Pro never had.