Introduction

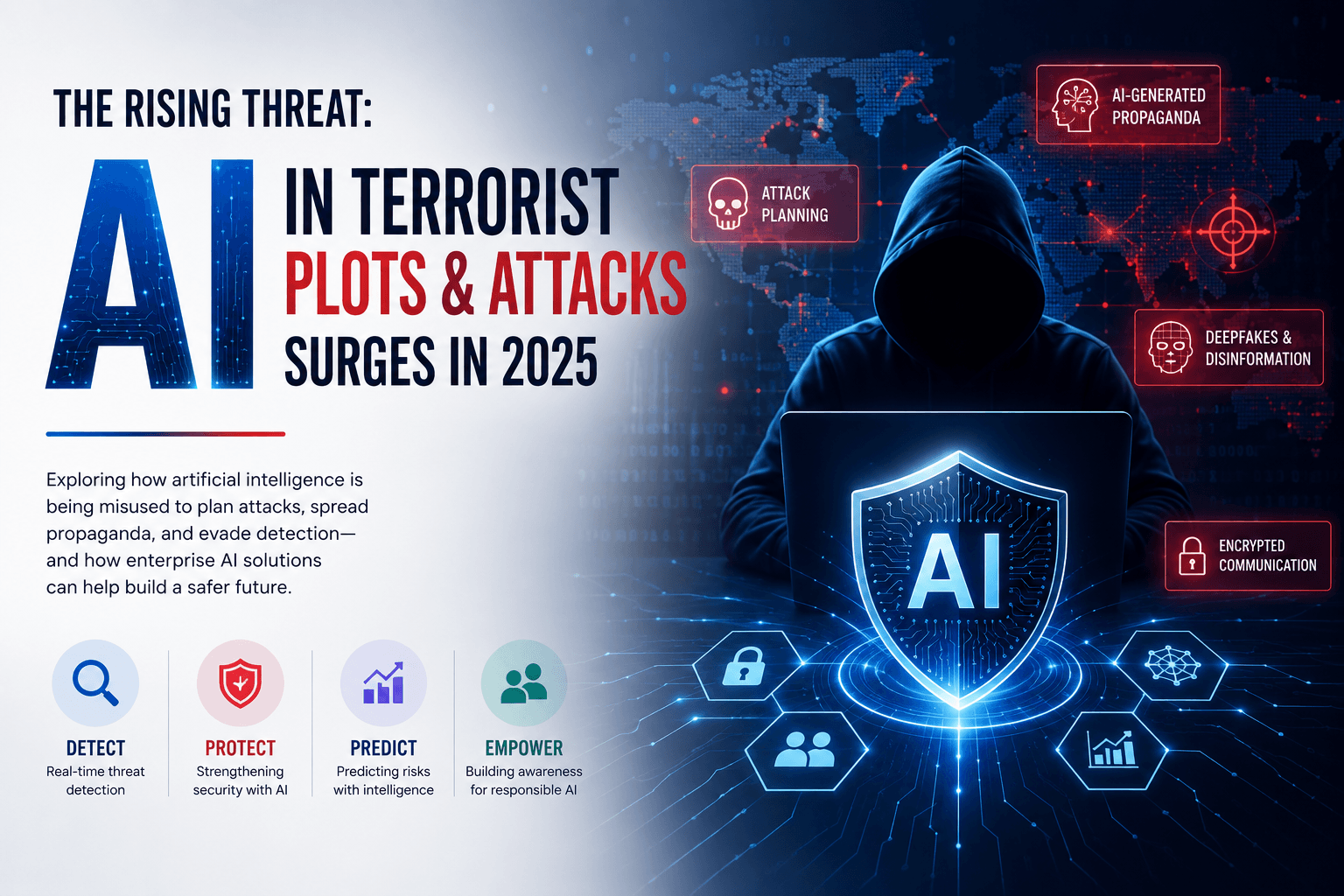

The evolution of artificial intelligence (AI) in recent years has sparked numerous discussions about its implications, not just in business and technology but also in security. Reports in 2025 indicate a troubling surge in the use of AI technologies in terrorist plots and attacks. This trend compels us to consider not only the risks of misuse but also the proactive measures that companies like Devot.ai can implement to counter such dangers while advancing enterprise AI solutions.

Understanding the Landscape

The integration of AI into various sectors has been transformative, changing how businesses operate and enhancing customer experiences. However, with great power comes great responsibility. Generative AI and large language models (LLMs) can be exploited for malicious purposes. Terrorist organizations are reportedly using these technologies both to plan sophisticated attacks and to disseminate propaganda more effectively. This evolving landscape poses significant challenges for national security as well as for businesses and tech developers.

The Role of Generative AI in Security Threats

Generative AI, which includes technologies that can create text, images, and even video, can be misused to produce convincing misinformation or sophisticated deepfakes. The ease of generating realistic content makes it a powerful tool for manipulation, well suited to radicalization efforts and to coordinating attacks. As 2025 progresses, AI-assisted, user-driven content generation is an area that requires vigilant monitoring and robust security frameworks.

Enterprise AI Solutions at Devot.ai

Amid these growing threats, forward-thinking enterprises must adopt AI solutions that prioritize both innovation and security. Devot.ai is at the forefront of developing enterprise AI applications that not only enhance productivity but are also fortified with safety features that limit vulnerabilities. Our advanced AI models can analyze vast datasets to predict potential security threats, enabling organizations to take preemptive measures.

Real-time Monitoring: Our AI systems are designed for real-time analysis, allowing businesses to detect anomalies that may indicate malicious activities.

Predictive Analytics: Using LLMs, Devot.ai can help organizations anticipate risks stemming from AI misuse and provide actionable insights.

Training and Awareness: We focus on educating teams about the ethical use of AI, ensuring that staff are knowledgeable about both the opportunities and threats associated with these technologies.
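
To make the real-time monitoring idea above more concrete, here is a minimal sketch of one common approach: flagging values that deviate sharply from a rolling baseline. It is a generic illustration, not a description of Devot.ai's actual systems; the class name, parameters, threshold, and sample data are all hypothetical.

```python
from collections import deque
from statistics import mean, stdev


class RollingAnomalyDetector:
    """Flags values that deviate strongly from a sliding window of recent values.

    Hypothetical illustration only; names and defaults are assumptions.
    """

    def __init__(self, window_size=20, threshold=3.0, min_samples=5):
        self.window = deque(maxlen=window_size)  # recent "normal" values
        self.threshold = threshold               # z-score cutoff
        self.min_samples = min_samples           # baseline size before scoring starts

    def observe(self, value):
        """Return True if `value` looks anomalous relative to the recent window."""
        is_anomaly = False
        if len(self.window) >= self.min_samples:
            mu = mean(self.window)
            sigma = stdev(self.window)
            if sigma > 0:
                is_anomaly = abs(value - mu) / sigma > self.threshold
            else:
                # A perfectly flat baseline: any change counts as a deviation.
                is_anomaly = value != mu
        # Keep flagged values out of the baseline so one spike does not mask the next.
        if not is_anomaly:
            self.window.append(value)
        return is_anomaly


# Example: made-up event counts per minute with one obvious spike.
detector = RollingAnomalyDetector(window_size=10, threshold=3.0)
events_per_minute = [12, 11, 13, 12, 14, 12, 11, 13, 95, 12]
flags = [detector.observe(v) for v in events_per_minute]
print(flags)
```

In this made-up series only the spike of 95 is flagged. A production system would likely need richer signals than a single z-score, but the structure (maintain a baseline, score each new event, alert on large deviations) is the same.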

Proactive Measures: Collaborating for Safety

In light of the challenges posed by AI misuse, collaboration across sectors becomes essential. Tech companies, governmental agencies, and intelligence organizations must engage in open dialogues to create standardized practices aimed at regulating the use of AI. By working together, stakeholders can establish frameworks that foster both innovation and security, ensuring that advancements in AI contribute positively to society.

Investing in Ethical AI Development

As AI technologies advance, ethical considerations must remain at the forefront of development initiatives. At Devot.ai, we champion ethical AI by embedding fairness, accountability, and transparency into our systems. By making ethical AI development a priority, we can reduce the likelihood of misuse while fostering trust among users and the general public.

Conclusion

The surge in AI use in terrorist plots is a stark reminder of the double-edged nature of technological advancement. As we move through 2025 and beyond, companies like Devot.ai are poised to lead the charge in crafting AI solutions that not only drive business success but also uphold safety standards. By prioritizing responsible AI development and incorporating robust security measures, we can collectively mitigate risks and harness the full potential of AI for good.