Open Source AI promises access. Not just demos, but the ability to read, run, and reshape what you use.

Access is not equality. The gap shows up in data, compute, and day-two operations.

Executive Summary

This is a practical read on how Open Source AI behaves when you have constraints. It stays focused on the equality angle: who actually benefits, who stalls, and why.

You will see where open helps, where it doesn’t, and what to do when reality bites. No tool parade. No lab fantasies.

Why openness isn’t a magic equalizer, but still moves the floor up

Common failure patterns you can expect before production

A stepwise path that avoids widening gaps as you scale

Trade-offs between speed, control, and responsibility

Introduction

You’re asked to make something useful with tight resources. The request changes twice before lunch. Access to models and code is not the issue. The pressure is whether you can deliver a reliable loop that improves over time.

That’s where this piece sits: the equality angle, not just another "AI is cool" post. The topic is Open Source AI, not as decoration, but as a way to shift control back toward teams that need it.

It’s trending because costs and lock-in are starting to pinch. It’s becoming necessary because many teams are realizing that trust, adaptability, and governance can’t be outsourced forever.

Where openness helps, and where equality still breaks

Open Source AI lowers the doorframe. You can inspect weights, adapt behaviors, and ship without a permission slip. Yet the same projects stall when data quality is uneven, compute ceilings are hard, or operational drag piles up.

Community model paths and bottlenecks

In early tests, openness speeds iteration. You can audit prompts, change tokenization quirks, adjust small adapters, and keep a straight face when someone asks how it works.

But day two arrives. Boundary cases show up. Language variants. Edge domain terms. Requests for explanations. Suddenly the hard parts are not model files. They are quiet costs:

Data cleaning that never ends

Evaluation whose targets keep moving

Monitoring that detects drift before a customer does
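The monitoring item can be sketched in a few lines. This is a minimal illustration, not a production monitor: the `DriftMonitor` class, its `tolerance` and `window` values, and the idea of a per-request quality score between 0 and 1 are all assumptions made for the example. It compares a rolling average against a baseline you measured on a trusted evaluation set.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Flags drift when a rolling quality score falls below a baseline by a margin."""

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 50):
        self.baseline = baseline            # score measured on a trusted evaluation set
        self.tolerance = tolerance          # accepted drop below baseline (assumed value)
        self.scores = deque(maxlen=window)  # most recent per-request scores only

    def record(self, score: float) -> None:
        self.scores.append(score)

    def drifted(self) -> bool:
        # Wait for a full window so a couple of bad requests do not raise an alert.
        if len(self.scores) < self.scores.maxlen:
            return False
        return mean(self.scores) < self.baseline - self.tolerance


# Hypothetical scores: 1.0 means the output was judged correct, 0.0 means it was not.
monitor = DriftMonitor(baseline=0.90, tolerance=0.05, window=4)
for score in [1.0, 1.0, 1.0, 1.0]:
    monitor.record(score)
healthy = monitor.drifted()

for score in [0.0, 1.0, 0.0, 1.0]:
    monitor.record(score)
degraded = monitor.drifted()
```

The point is less the arithmetic than the habit: a check this small can run from day one, so drift gets noticed by the team before it gets noticed by a customer.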

Failure patterns repeat:

Equality by download: “We all have the same model” turns into different outcomes because input data varies by team

Hidden inequality: The group that can run a quick adaptation loop pulls ahead while everyone else gets stuck in prompt gymnastics

Ops gravity: Good prototypes crumble under latency windows, memory limits, or governance reviews

The boundary is clear. Open Source AI gets you control and transparency. It does not grant operational maturity for free. Equality requires that the slowest step becomes easy enough for the average team, not just the most experienced one.

From download to daily use without widening gaps

Start narrow. Pick a task where a small win is obvious. Make the feedback loop visible. Ship a rough cut, then tighten where it matters.

Exploration that doesn’t paint you into a corner

Keep your first pass simple. Use small, cheap iterations that reveal where the real problem sits. When results wobble, ask whether the issue is data coverage, prompt shape, or an evaluation blind spot. Don’t escalate compute before you fix the basics.

Friction shows up as unplanned handoffs

The moment your prototype needs a review, latency budget, or sign-off, you’ll feel the drag. That’s normal. Reduce handoffs by capturing decisions inline. What changed. Why it changed. How it was tested. Short write-ups beat long decks here.
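One way to capture decisions inline is a tiny record that lives next to the code or prompt it describes. The sketch below is an assumption about shape, not a standard: `DecisionNote` and its field names are hypothetical, chosen to mirror the three questions above.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class DecisionNote:
    """A short write-up: what changed, why it changed, how it was tested."""

    what_changed: str
    why: str
    how_tested: str
    when: date = field(default_factory=date.today)

    def render(self) -> str:
        # Plain text on purpose, so it fits in a commit message or a review comment.
        return "\n".join([
            f"[{self.when.isoformat()}]",
            f"What changed: {self.what_changed}",
            f"Why: {self.why}",
            f"How tested: {self.how_tested}",
        ])


# Illustrative content only; the date and wording are made up for the example.
note = DecisionNote(
    what_changed="Tightened the labeling guideline for ambiguous inputs",
    why="Accuracy dropped when ambiguous requests surged",
    how_tested="Re-ran the fixed evaluation set before and after",
    when=date(2024, 1, 15),
)
text = note.render()
```

Four lines of text per change is usually enough for a reviewer to skip a meeting.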

Scaling changes who owns what

As usage grows, responsibilities shift from a single builder to a set of owners. You need shared ground rules for updates, rollbacks, and tracing. Equality improves when the rules reduce surprises, not when they promise perfection.

Watch for three cliffs:

Volume cliff: a pattern that looked cheap becomes costly when requests spike

Complexity cliff: corner cases multiply after success, not before

Governance cliff: a review that was occasional becomes continuous

Cross those cliffs by tightening the slowest link, not by widening the stack. Shorten evaluation loops. Keep adaptation methods modest. Reserve heavy changes for high-signal wins, not curiosity.

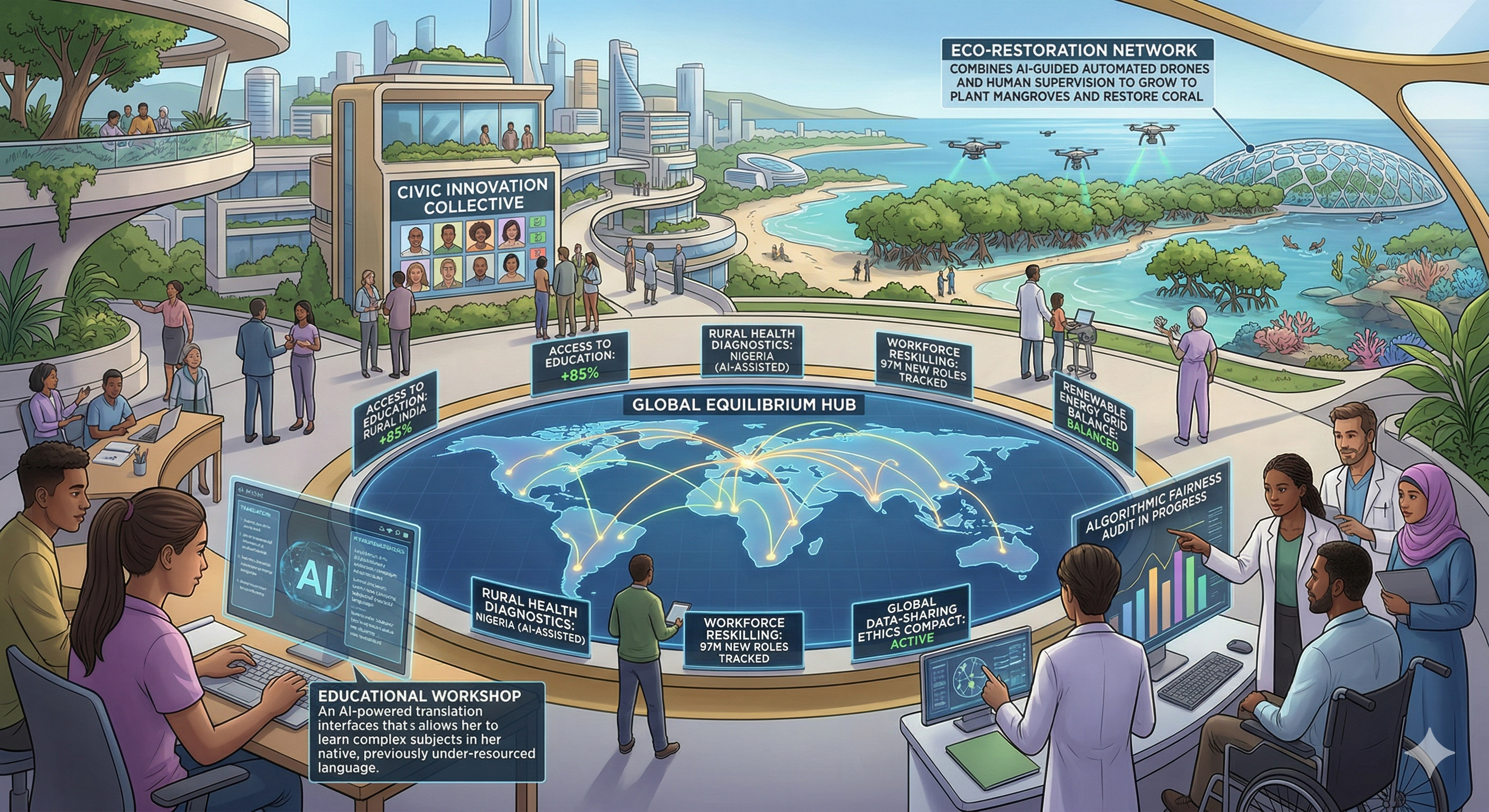

Examples and applications with real friction

Scenario 1. A team builds a classification helper using Open Source AI. Early tests shine. Then a surge of ambiguous inputs reduces accuracy. They try more prompts. Gains stall. The fix comes from a tiny data cleanup step plus a narrow re-labeling guideline. Equality improves because the solution didn’t require bigger models, just shared rules that others can follow.

Scenario 2. A group adds a lightweight adaptation to handle niche terms. It works in the lab. In use, memory limits force smaller batches. Performance drops at peak times. They back off to a simpler approach with caching and a stricter evaluation gate. Throughput recovers. Not perfect, but stable.
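A rough sketch of what "caching and a stricter evaluation gate" could look like follows. Everything here is assumed for illustration: the toy predictors, the `min_accuracy` threshold, and the rule that a candidate must not score below what is already running. The cache uses the standard library's `functools.lru_cache`, which only helps when identical inputs repeat.

```python
from functools import lru_cache
from typing import Callable, Sequence, Tuple

Example = Tuple[str, str]  # (input text, expected label)


def accuracy(predict: Callable[[str], str], examples: Sequence[Example]) -> float:
    if not examples:
        raise ValueError("evaluation set is empty")
    correct = sum(1 for text, label in examples if predict(text) == label)
    return correct / len(examples)


def passes_gate(candidate, current, examples, min_accuracy: float = 0.8) -> bool:
    # Stricter gate: clear an absolute bar AND do not regress against what runs today.
    candidate_score = accuracy(candidate, examples)
    return candidate_score >= min_accuracy and candidate_score >= accuracy(current, examples)


def with_cache(predict: Callable[[str], str], maxsize: int = 1024):
    # Repeated inputs skip the model call entirely, which eases peak-time pressure.
    return lru_cache(maxsize=maxsize)(predict)


# Toy stand-ins for real models (hypothetical).
def current_model(text: str) -> str:
    return "billing" if "invoice" in text else "other"


def candidate_model(text: str) -> str:
    return "billing" if ("invoice" in text or "refund" in text) else "other"


eval_set = [
    ("invoice overdue", "billing"),
    ("refund please", "billing"),
    ("reset password", "other"),
    ("invoice copy", "billing"),
]

promote = passes_gate(candidate_model, current_model, eval_set)
cached_predict = with_cache(candidate_model)
cached_predict("refund please")
cached_predict("refund please")  # second call is served from the cache
```

Keeping the evaluation set fixed between runs is what makes the gate meaningful; if the set changes with every release, the comparison against the current model tells you little.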

Scenario 3. An internal chatbot ships on an open base. Feedback is positive until users start asking for explanations. The team adds traceable reasoning snippets. Response time goes up. Pressure mounts. They split paths: fast answers for common questions, slower answers when explanation is requested. Satisfaction rises even though average latency increases. Equality improves because users can choose the path they need.
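The split in Scenario 3 amounts to a small router. The sketch below assumes two callables supplied by the team, a fast one that returns only text and a slower one that also returns an explanation; `make_router`, `Answer`, and the `want_explanation` flag are hypothetical names for this example.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class Answer:
    text: str
    path: str                          # which path produced the answer
    explanation: Optional[str] = None  # filled only on the slower path


def make_router(
    fast: Callable[[str], str],
    explained: Callable[[str], Tuple[str, str]],
) -> Callable[..., Answer]:
    def answer(question: str, want_explanation: bool = False) -> Answer:
        if want_explanation:
            text, why = explained(question)  # slower: also returns a trace
            return Answer(text=text, path="explained", explanation=why)
        return Answer(text=fast(question), path="fast")

    return answer


# Toy stand-ins for the two model paths (hypothetical).
def fast_path(question: str) -> str:
    return "Resets happen under Settings."


def explained_path(question: str) -> Tuple[str, str]:
    return ("Resets happen under Settings.", "Matched the 'password reset' help article.")


answer = make_router(fast_path, explained_path)
quick = answer("How do I reset my password?")
detailed = answer("How do I reset my password?", want_explanation=True)
```

Recording the `path` on every answer also lets you report latency per path rather than as one blended average.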

None of these outcomes are glamorous. They are small, directed moves. The equality angle shows up in how easy it is for other teams to repeat them without special favors.

Tables and comparisons that clarify differences

Open Source AI lowers barriers, but practice separates outcomes. Here’s how approaches differ as experience grows.

| Aspect | Students/Beginners | Experienced Practitioners |
| --- | --- | --- |
| Pattern spotting | Focus on model swaps and prompt tweaks | Focus on data shape, evaluation gaps, and rollout risks |
| Data handling | Assume data is “good enough” | Treat data as the main lever for stability |
| Guardrails | Add after the demo | Design for safe failure from the start |
| Iteration speed | Fast early, slows sharply under review | Steady pace with fewer reversals |
| Equality mindset | Assumes access equals parity | Designs for the slowest step to be easy |

What this looks like in real environments

Open Source AI shines when constraints are explicit. If your compute budget is tight, you pick smaller models and smarter evaluation. If your data is messy, you invest in labels and guardrails before you scale.

Boundaries matter. If you cannot trace outputs to inputs, you can’t improve fairness. If you can’t reproduce a result, you can’t claim a stable process. If your adaptation logic is a tangle of one-off fixes, you’ve traded equality for heroics.
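Tracing outputs to inputs can start with a record written at the moment of each call. The following is a minimal sketch under stated assumptions: `trace_record` and its fields are hypothetical, and the identifier is simply a SHA-256 hash of the prompt, model version, and parameters, so the same inputs always map to the same id.

```python
import hashlib
import json
from typing import Any, Dict


def trace_record(
    prompt: str, output: str, model_version: str, params: Dict[str, Any]
) -> Dict[str, Any]:
    # Everything that influenced the output goes into the hashed payload.
    run_inputs = {"prompt": prompt, "model_version": model_version, "params": params}
    canonical = json.dumps(run_inputs, sort_keys=True)  # stable key order
    input_id = hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]
    return {"input_id": input_id, **run_inputs, "output": output}


# Illustrative values only; "base-v1" and "base-v2" are made-up version labels.
first = trace_record("Classify: invoice overdue", "billing", "base-v1", {"temperature": 0.0})
again = trace_record("Classify: invoice overdue", "billing", "base-v1", {"temperature": 0.0})
changed = trace_record("Classify: invoice overdue", "billing", "base-v2", {"temperature": 0.0})
```

If two records share an `input_id` but carry different outputs, you have found a reproducibility problem worth looking at before anything else.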

Failure is not final. It’s only expensive if you learn slowly. Make it cheap to learn. Keep changes reviewable. Capture context next to code and prompts. When someone new joins, they should climb the same staircase, not a different mountain.

FAQ

Does Open Source AI guarantee lower cost?

Not by itself. Openness cuts license risk, but data work, evaluation, and operations still drive spend.

Is openness enough for trust?

Transparency helps, but trust comes from repeatability and clear guardrails more than license terms.

How do I start without overcommitting?

Pick one task with clear feedback. Ship small, measure, then only scale what proves stable.

What if my team lacks specialized skills?

Design for simple loops. Favor approaches that others can maintain. Equality grows when the process is teachable.

When is closed a better choice?

When speed to a specific capability beats the need for control. Revisit later if dependency risk grows.

Responsibility is shifting from “which model” to “who owns the loop”

The pressure is moving. Less on picking models, more on designing loops that tolerate change and still behave. Open Source AI helps because you can shape those loops on your terms.

Equality rises when the process is boring in the right places. Reproducible. Auditable. Easy to roll back. That’s not flashy, but it’s how teams with fewer resources stay in the game and keep improving.