Quietly, GPT-5.3 Codex is crossing a line. It’s no longer only about completing code. It’s coordinating tasks, reading context, and making decisions that land outside the editor.

Executive Summary

Teams want leverage without adding headcount. The path from code autocomplete to an agent that handles real work is feasible, but only if you respect constraints.

This piece maps how that shift happens, what breaks, and how to scale without gambling on outcomes.

See how GPT-5.3 Codex behaves when work spans code, tickets, and approvals.

Recognize boundaries: permissions, ambiguity, latency, and policy drift.

Adopt a stepwise flow that contains risk while compounding autonomy.

Learn where friction appears first, and how it changes at scale.

Introduction

Picture a sprint week. A broken integration, a half-written feature, a stakeholder waiting on a brief, and a compliance note that can’t slip. The usual fix is to throw bodies at the fire. Instead, someone routes the mess to an agent built on GPT-5.3 Codex.

It picks up a failing test, checks an API change log, drafts a rollback plan, and pings the right person for an approval. Not science fiction—just a careful evolution: from coding assistant to general work agent.

Why this is trending: models are better at understanding intent, navigating structure, and maintaining short chains of reasoning. Why it’s becoming necessary: the work surface has widened. Delivering value means crossing tools, roles, and policies. A code-only helper stalls at handoffs. An agent that understands the job can move it forward.

When a coding model starts touching real work, the rules change

In controlled prompts, GPT-5.3 Codex feels crisp. In production, ambiguity climbs. Inputs contain partial context. Deadlines are soft until they aren’t. Policies exist but are unevenly enforced. The agent must decide what’s safe to infer and when to stop.

Boundaries show up fast. Permissions constrain action, not thought. The model can advise on a database migration but shouldn’t run one without a change window. If scopes are too tight, the agent thrashes—asking humans for every small step. Too loose, and you risk silent side effects.

Failure patterns repeat: objective drift when tickets are under-specified; overconfident plans that ignore rate limits; cautious loops that time out; hallucinated file paths; missing rollback steps; status updates that calm stakeholders but conceal unresolved dependencies.

Another edge: non-determinism under pressure. Two similar inputs can produce different plans. That can be fine, since creative routing sometimes finds shortcuts, but it's painful in audit trails, where a reviewer needs to see why one path was chosen over another. You need intent pinning: lock the goal, the constraints, and the policy gates before exploration begins.
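One way to picture intent pinning is an immutable record whose hash is attached to every plan the agent produces. The sketch below is a minimal illustration, not a prescribed design: `PinnedIntent`, its field names, and the sample values are all assumptions made for the example.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class PinnedIntent:
    """Goal, constraints, and policy gates fixed before planning starts (hypothetical)."""
    goal: str
    constraints: tuple[str, ...]
    policy_gates: tuple[str, ...]

    def fingerprint(self) -> str:
        # Stable hash of the pinned fields, suitable for an audit log entry.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


intent = PinnedIntent(
    goal="stabilize webhook retries",
    constraints=("no schema changes",),
    policy_gates=("approval before production deploy",),
)

# Two different plans can still be traced back to the same pinned intent.
plan_a = {"intent": intent.fingerprint(), "steps": ["add backoff", "add logging"]}
plan_b = {"intent": intent.fingerprint(), "steps": ["add logging", "add backoff"]}
```

Because the dataclass is frozen, the goal cannot be edited mid-run; a changed goal means a new record and a new fingerprint, which is what makes divergent plans comparable in an audit.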

Finally, context cost. The more sources you feed, the more expensive and fragile the decision process becomes. High recall of irrelevant detail dilutes signal. Curate aggressively. The agent’s strength is synthesis, not hoarding.

From helper to agent you can trust: the stepwise path

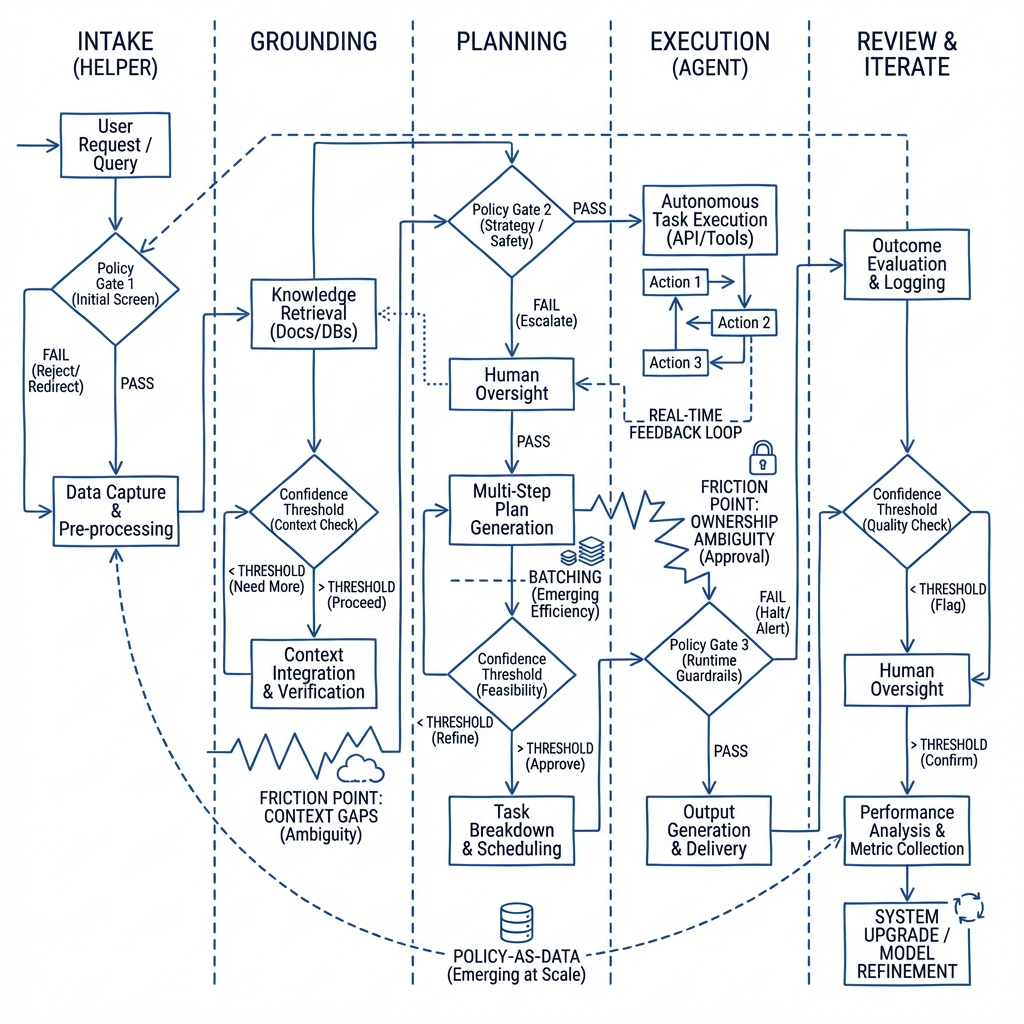

Start with intake. Force tasks into a minimal schema: goal, constraints, deadline, data locations, and who approves what. The model is good at extracting structure from messy inputs; make it do that before planning.
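The minimal schema can be written down directly. This is a sketch under assumptions: the class name `TaskIntake`, the field types, and the `missing_fields` helper are illustrative, and only the five fields themselves come from the text above.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class TaskIntake:
    """Minimal intake schema: goal, constraints, deadline, data locations, approvers."""
    goal: str
    constraints: list[str]
    deadline: Optional[date]
    data_locations: list[str]
    approvers: dict[str, str]  # kind of action -> who approves it


def missing_fields(task: TaskIntake) -> list[str]:
    """Return the names of fields that are still empty, in schema order."""
    missing = []
    if not task.goal.strip():
        missing.append("goal")
    if not task.constraints:
        missing.append("constraints")
    if task.deadline is None:
        missing.append("deadline")
    if not task.data_locations:
        missing.append("data_locations")
    if not task.approvers:
        missing.append("approvers")
    return missing


draft = TaskIntake(
    goal="fix the flaky webhook",
    constraints=["no schema changes"],
    deadline=None,
    data_locations=["tickets", "service logs"],
    approvers={},
)
gaps = missing_fields(draft)  # the agent asks for these before planning
```

Planning starts only when `missing_fields` returns an empty list; anything else goes back to the requester as a question.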

Ground intent. Translate user text into a contract the system understands. Example: turn “fix the flaky webhook” into “stabilize retries to within X, with logging at Y, and no schema changes.” Without grounding, plans balloon.
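A grounded contract becomes useful once plans can be checked against it. The sketch below assumes each plan step declares which areas it touches; `Contract`, `Step`, and `out_of_contract` are hypothetical names, and the sample steps are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Contract:
    objective: str
    forbidden_areas: frozenset[str]  # e.g. "schema" for "no schema changes"


@dataclass(frozen=True)
class Step:
    description: str
    touches: frozenset[str]  # areas this step would modify


def out_of_contract(steps: list[Step], contract: Contract) -> list[Step]:
    """Return the steps that touch any area the contract forbids."""
    return [s for s in steps if s.touches & contract.forbidden_areas]


contract = Contract(
    objective="stabilize webhook retries",
    forbidden_areas=frozenset({"schema"}),
)
plan = [
    Step("add retry backoff", frozenset({"webhook_handler"})),
    Step("add index to events table", frozenset({"schema"})),
]
violations = out_of_contract(plan, contract)
```

Here the second step is rejected before execution, which is the point of grounding: the plan cannot quietly grow past what was asked.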

Plan with checkpoints. The agent proposes a path, highlights unknowns, and marks policy intersections. Humans review only the deltas: risky calls, external changes, irreversible steps.
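Reviewing only the deltas can be as simple as partitioning the plan. In this sketch the three flags mirror the categories named above (risky calls, external changes, irreversible steps); the `PlanStep` type and `split_plan` function are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlanStep:
    name: str
    risky: bool = False
    external: bool = False
    irreversible: bool = False


def needs_review(step: PlanStep) -> bool:
    """A step goes to a human if any of the three flags is set."""
    return step.risky or step.external or step.irreversible


def split_plan(steps: list[PlanStep]) -> tuple[list[PlanStep], list[PlanStep]]:
    """Partition a plan into (automatic steps, steps needing human review)."""
    auto = [s for s in steps if not needs_review(s)]
    review = [s for s in steps if needs_review(s)]
    return auto, review


steps = [
    PlanStep("reproduce failing test"),
    PlanStep("draft rollback PR"),
    PlanStep("notify partner team", external=True),
    PlanStep("drop deprecated column", irreversible=True),
]
auto, review = split_plan(steps)
```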

Execute with reversible moves first. Prefer dry runs, drafts, sandboxes. When confidence is high and scope is narrow, let the agent commit small changes end to end.
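A rough sketch of "reversible moves first": every action is rehearsed with a dry run, and irreversible actions are held unless explicitly allowed. The `Action` shape and the `execute` function are hypothetical; a real executor would also need error handling and rollback hooks.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    name: str
    dry_run: Callable[[], bool]  # returns True if the rehearsal succeeded
    apply: Callable[[], None]    # performs the real change
    reversible: bool


def execute(actions: list[Action], allow_irreversible: bool = False) -> list[tuple[str, str]]:
    """Rehearse each action, then apply it; stop at the first failure or hold."""
    log = []
    for action in actions:
        if not action.dry_run():
            log.append((action.name, "dry run failed"))
            break
        if not action.reversible and not allow_irreversible:
            log.append((action.name, "held for approval"))
            break
        action.apply()
        log.append((action.name, "applied"))
    return log
```

Stopping at the first hold is a deliberate choice in this sketch: later steps may depend on the held one, so running them anyway would create exactly the silent side effects the flow is meant to prevent.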

Close the loop. Log inputs, decisions, artifacts, and outcomes. Score quality on the signals that matter: cycle time, rework rate, and variance under load. Don’t chase vanity metrics.
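The three signals can be computed from a plain task log. This sketch assumes each record carries a cycle time in hours and a rework flag, and it interprets "variance" as the population variance of cycle time over the records passed in (for example, those from a high-load period); the names and that interpretation are assumptions.

```python
from dataclasses import dataclass
from statistics import mean, pvariance


@dataclass(frozen=True)
class TaskRecord:
    cycle_hours: float  # time from intake to close
    reworked: bool      # True if the result had to be redone


def summarize(records: list[TaskRecord]) -> dict[str, float]:
    """Compute mean cycle time, rework rate, and cycle-time variance."""
    if not records:
        raise ValueError("need at least one record")
    cycles = [r.cycle_hours for r in records]
    return {
        "mean_cycle_hours": mean(cycles),
        "rework_rate": sum(r.reworked for r in records) / len(records),
        "cycle_variance": pvariance(cycles),
    }


records = [TaskRecord(2.0, False), TaskRecord(4.0, True), TaskRecord(6.0, False)]
summary = summarize(records)
```

Running `summarize` separately on quiet and busy periods gives a rough view of how variance moves under load.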

Where friction appears: unclear ownership (who approves), fragmented context (docs, tickets, chat), and hidden policies. At small scale, humans paper over gaps. At larger scale, those gaps amplify. Codify them or the agent invents rules.

What changes when scaled: batching beats one-off actions; policy becomes data, not tribal knowledge; memory shifts from chat history to structured state; and cost control requires routing easy tasks down shorter paths.
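"Policy becomes data" can be read literally: a lookup table the agent consults instead of rules it infers. The table below is hypothetical, as are the action names and the `gate_for` helper; the deny-by-default branch is a design choice of this sketch so that an uncodified action is never treated as permitted.

```python
from typing import Optional

# Hypothetical policy table: action name -> required gate (None means no gate).
POLICY: dict[str, Optional[str]] = {
    "open_draft_pull_request": None,
    "post_status_update": None,
    "run_database_migration": "change_window",
}


def gate_for(action: str, policy: dict[str, Optional[str]] = POLICY) -> str:
    """Look up what an action requires; unknown actions are denied."""
    if action not in policy:
        return "deny"
    gate = policy[action]
    return "allow" if gate is None else f"needs:{gate}"
```

The same table doubles as a router: actions that resolve to `"allow"` can take the short path, while gated ones go through the full review flow.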

Guardrails that keep autonomy productive, not risky

Scope control beats post-hoc review

Limit the agent to bounded domains with clear rollback steps. Expansion comes after stable wins. Review is a backstop, not a strategy.

Human checkpoints where they reduce variance

Insert approval only at high-impact junctures. Too many gates increase lead time and erode the momentum that makes the agent worth delegating to.

Telemetry that earns its keep

Collect fewer, better signals. Track how often plans change mid-execution, not just how fast tasks close. Volatility predicts hidden risk.
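Plan volatility is cheap to measure if the agent emits events. The sketch below assumes an ordered stream of `(task_id, event)` pairs with the hypothetical event names `"execution_started"` and `"plan_revised"`; it counts only revisions that happen after execution began.

```python
from collections import Counter


def mid_execution_revisions(events: list[tuple[str, str]]) -> dict[str, int]:
    """Count plan revisions per task that occur after execution has started."""
    started = set()
    revisions = Counter()
    for task_id, kind in events:
        if kind == "execution_started":
            started.add(task_id)
        elif kind == "plan_revised" and task_id in started:
            revisions[task_id] += 1
    return dict(revisions)


events = [
    ("T1", "plan_revised"),        # before execution: not counted
    ("T1", "execution_started"),
    ("T1", "plan_revised"),
    ("T2", "execution_started"),
    ("T1", "plan_revised"),
]
volatility = mid_execution_revisions(events)
```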

Examples and applications

Scenario: cross-system bug. The agent sees failing tests, correlates them with a recent dependency bump, and drafts a rollback PR. It also prepares a short note explaining trade-offs. Imperfection: it proposes a rollback that conflicts with a minor feature. A human spots it at the checkpoint. Outcome: small delay, but no outage.

Scenario: recurring manual report. The agent pulls data, composes a summary, and schedules delivery. Imperfection: it misreads a filter, overcounting a segment. Because the report has an automated reasonableness check, the check flags the anomaly and halts delivery. The fix becomes a new guardrail.
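A reasonableness check of this kind can be very small. The sketch below compares a new value against the average of past values and treats a large relative deviation as an anomaly; the function name, the 50% default tolerance, and the sample numbers are assumptions chosen for illustration.

```python
def is_reasonable(value: float, history: list[float], tolerance: float = 0.5) -> bool:
    """Return True if value is within `tolerance` (relative) of the historical mean."""
    if not history:
        return False  # no baseline yet: hold for a human rather than guess
    baseline = sum(history) / len(history)
    if baseline == 0:
        return value == 0
    return abs(value - baseline) / abs(baseline) <= tolerance


past_segment_counts = [100.0, 110.0, 90.0]
ok_to_send = is_reasonable(105.0, past_segment_counts)
overcount_blocked = not is_reasonable(250.0, past_segment_counts)
```

An overcounted segment, as in the scenario, lands far outside the band and stops delivery until someone looks at the filter.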

Scenario: policy-heavy request. The agent drafts steps, cites relevant rules, and routes approvals. Imperfection: it over-applies an outdated rule because a newer version lives in a forgotten doc. The postmortem is simple: keep policy sources canonical, or accept slowdown.

None of these require exotic infrastructure. They require crisp task intake, permission boundaries, and a culture that treats the agent as a colleague with strengths and limits.

Tables and comparisons

| Aspect | Beginners | Experienced Practitioners |
| --- | --- | --- |
| Task framing | Describe tasks loosely; rely on the model to infer | Pin intent, constraints, and success criteria upfront |
| Context strategy | Attach all related docs | Curate minimal, authoritative sources only |
| Checkpoints | Review everything | Gate only high-impact or irreversible steps |
| Error handling | Fix issues after they surface | Design reversible first moves and anomaly checks |
| Scaling approach | Increase agent scope rapidly | Scale by batch patterns and policy-as-data |

FAQ

How does GPT-5.3 Codex differ when acting as an agent?

It plans, executes, and revises across tools. You must define scope, access, and checkpoints.

What’s the first failure to expect?

Objective drift from vague tasks. Force structured intake before any action.

How do I measure progress without gaming it?

Track rework rate and variance under load, not just speed.

Where should autonomy start?

Reversible tasks with clear policy. Expand after stable wins.

Do I need complex orchestration?

Not at first. Start with intent grounding, simple gates, and tight scopes.

When the agent carries the responsibility you used to own

As GPT-5.3 Codex steps beyond the editor, responsibility shifts from individuals to systems. That shift only works when intent, policy, and checkpoints are explicit and small failures are cheap.

The progression is simple but unforgiving: constrain, learn, then expand. The agent gains autonomy by earning it—one contained domain at a time.