AI cold email outreach is no longer about blasting templates. It’s about building a system that learns, protects sender reputation, and earns replies without collapsing under volume pressure.

Executive Summary

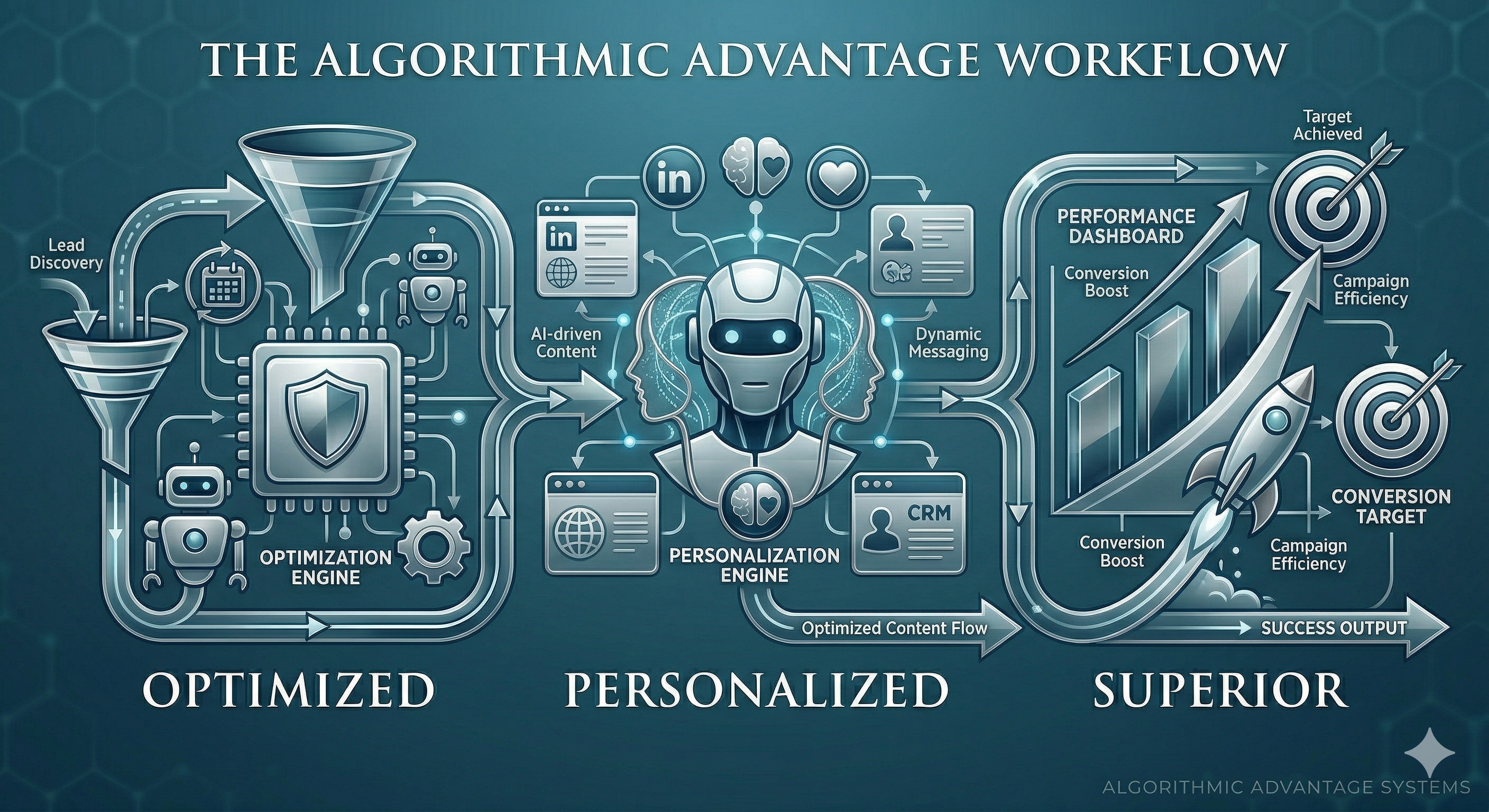

AI now shapes prospecting from data intake to reply handling. The upside is faster learning and tighter personalization. The downside is fragility when signals are weak or feedback loops are noisy.

This piece shows how these systems behave under real constraints, the boundaries that matter, and the pathway from prototype to dependable results.

Understand where automation helps and when it harms deliverability.

Design guardrails that prevent over-personalization and fabricated context.

Scale with measured cadence, selective enrichment, and feedback you can trust.

Introduction

You have a list, a quota, and a domain already warmed just enough to be dangerous. Leadership wants pipeline. Legal wants control. Prospects are numb to sameness. The old playbook can’t carry the weight.

AI's transformation of cold email outreach in 2026, across automation, personalization, and results, is not a future headline. It’s the daily environment in which AI orchestrates who to contact, what to say, and when to back off. AI cold email outreach is trending because it compresses experimentation cycles. It’s becoming necessary because inbox filters, buyer expectations, and team capacity have shifted faster than manual tactics can keep up with.

Where AI outreach actually bends and breaks

In production, AI behaves like a powerful optimizer that goes off the rails when inputs drift. It can write an on-target line and, the next minute, hallucinate context from a stale profile. The system swings between high-reply streaks and sudden deliverability slides if you chase short-term wins.

Boundaries form around data quality and cadence discipline. If enrichment lags a quarter behind reality, personalization gets creepy or wrong. If send windows compress to hit a goal, thin content and repetitive syntax trip filters. And if you score success on opens rather than starts-of-conversation, the model learns to bait rather than earn.
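One way to hold the data-quality boundary is a freshness check on enrichment fields. The minimal sketch below assumes each field records when it was last verified. Anything older than roughly a quarter is withheld from personalization, so the message falls back to neutral language. The `EnrichedField` type, the field names, and the 90-day cutoff are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical cutoff: roughly one quarter. Tune to how fast your data decays.
MAX_FIELD_AGE = timedelta(days=90)


@dataclass
class EnrichedField:
    name: str
    value: str
    verified_on: date  # when this value was last confirmed


def usable_fields(fields: list[EnrichedField], today: date) -> dict[str, str]:
    """Return only the fields fresh enough to personalize on.

    Stale fields are dropped rather than guessed at, so the message
    generator falls back to neutral language for them.
    """
    return {
        f.name: f.value
        for f in fields
        if today - f.verified_on <= MAX_FIELD_AGE
    }


today = date(2026, 3, 1)
fields = [
    EnrichedField("title", "VP Operations", date(2026, 2, 10)),
    EnrichedField("tech_stack", "LegacyCRM", date(2025, 9, 1)),
]
print(usable_fields(fields, today))  # {'title': 'VP Operations'}
```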

Failure patterns repeat. Over-personalization that anchors on a minor detail and misses the job-to-be-done. Sequences that adapt too slowly and burn through a segment before a course correction. Models fine-tuned on biased win data that overfit to a single persona and ignore new signals. Human review that only samples happy-path outputs and misses edge cases. Each shows up in the metrics: rising spam complaints, a flat reply rate, more unsubscribes, more bounces.

There is also the quiet failure. Messages that look fine but arrive at the wrong time, to the wrong role, with an ask too large for a first touch. The system considers it a send. The recipient considers it noise. That gap is where most teams lose the plot.

From prototype to a loop you can trust

Implementation starts simple. You seed a small segment with reliable data. You define a few message patterns tied to clear intents. You put lightweight gates in front of sends. Early wins come from structure, not cleverness.
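A "lightweight gate" can be as simple as a list of check functions that run before every send. Any check can block the send and give a reason. The sketch below uses a hypothetical `Draft` record and two illustrative checks. One of them encodes the idea that a first touch shouldn't carry too large an ask. Real gates would hold whatever rules your team agrees on.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Draft:
    recipient: str
    body: str
    is_first_touch: bool
    asks_for_meeting: bool


# Each check returns None to pass, or a short reason string to block.
Check = Callable[[Draft], Optional[str]]


def has_body(d: Draft) -> Optional[str]:
    return None if d.body.strip() else "empty body"


def ask_fits_first_touch(d: Draft) -> Optional[str]:
    # Hypothetical rule: no meeting request on the very first email.
    if d.is_first_touch and d.asks_for_meeting:
        return "ask too large for first touch"
    return None


def run_gate(draft: Draft, checks: list[Check]) -> list[str]:
    """Run every check and collect all blocking reasons (empty list = send)."""
    reasons = []
    for check in checks:
        reason = check(draft)
        if reason is not None:
            reasons.append(reason)
    return reasons


draft = Draft("a@example.com", "Quick question on onboarding.", True, True)
print(run_gate(draft, [has_body, ask_fits_first_touch]))
# ['ask too large for first touch']
```

Collecting every reason, instead of stopping at the first, makes it easier to see which rules block most often.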

Friction appears when volume climbs. Generation latency stretches the send window and pushes messages off their intended timing. Minor template churn multiplies into dozens of variants with no lineage. Reviewers grow numb to flaws and miss drift. Monitoring lags behind sends, so by the time you see a negative trend, your domains have already taken a hit.
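One way to keep template churn traceable is to register every variant with a pointer to its parent and a note on what changed. Below is a hypothetical in-memory registry that shows the idea. A real system would likely persist this next to send logs so results can be traced back to specific edits.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Variant:
    variant_id: str
    parent_id: Optional[str]  # None for an original template
    change_note: str          # what differs from the parent


class VariantRegistry:
    def __init__(self) -> None:
        self._variants: dict[str, Variant] = {}

    def register(self, variant: Variant) -> None:
        # Refuse orphans: every derived variant must point at a known parent.
        if variant.parent_id is not None and variant.parent_id not in self._variants:
            raise ValueError(f"unknown parent {variant.parent_id!r}")
        self._variants[variant.variant_id] = variant

    def lineage(self, variant_id: str) -> list[str]:
        """Return the chain of ids from the original template to this variant."""
        chain = []
        current: Optional[str] = variant_id
        while current is not None:
            chain.append(current)
            current = self._variants[current].parent_id
        return list(reversed(chain))


registry = VariantRegistry()
registry.register(Variant("v1", None, "original opener"))
registry.register(Variant("v1a", "v1", "shorter subject line"))
registry.register(Variant("v1a-i", "v1a", "softer call to action"))
print(registry.lineage("v1a-i"))  # ['v1', 'v1a', 'v1a-i']
```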

The loop that holds up under pressure has bones. Intake that rejects uncertain records rather than stuffing gaps with guesses. Segmentation that fits the ask to the likely authority and maturity. Message generation constrained by claims you can verify and personalization that ties to a public fact or a first-party signal. Gates that sample outputs continuously, not just at launch. Cadence that keeps sending density stable. Feedback that scores threads by conversational progress rather than raw replies. Updates that change one variable at a time.
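Scoring threads "by conversational progress rather than raw replies" could look like the sketch below. Each thread scores by the furthest stage it reached, and an explicit negative signal such as a complaint or unsubscribe overrides everything. The stage ladder and weights are hypothetical. The design point is that an open earns nothing and a soft decline is not counted as a win.

```python
from enum import IntEnum


class Stage(IntEnum):
    # Ordered by how far the conversation progressed (hypothetical ladder).
    NO_REPLY = 0
    SOFT_DECLINE = 1
    ENGAGED_REPLY = 2
    NEXT_STEP_AGREED = 3
    MEETING_HELD = 4


# Hypothetical weights: a soft decline earns nothing, same as silence.
STAGE_SCORE = {
    Stage.NO_REPLY: 0.0,
    Stage.SOFT_DECLINE: 0.0,
    Stage.ENGAGED_REPLY: 1.0,
    Stage.NEXT_STEP_AGREED: 3.0,
    Stage.MEETING_HELD: 5.0,
}


def score_thread(stages_seen: list[Stage], negative_signal: bool) -> float:
    """Score a thread by its furthest stage; a complaint or unsubscribe is negative."""
    if negative_signal:
        return -5.0
    furthest = max(stages_seen, default=Stage.NO_REPLY)
    return STAGE_SCORE[furthest]


print(score_thread([Stage.ENGAGED_REPLY, Stage.NEXT_STEP_AGREED], negative_signal=False))  # 3.0
print(score_thread([Stage.ENGAGED_REPLY], negative_signal=True))  # -5.0
```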

At scale, tiny choices matter. A single aggressive call-to-action across a large batch can tilt spam reports. Reusing a high-performing subject line too broadly can trigger pattern detection. Reply handling that auto-sends follow-ups after a soft decline inflates annoyance. The system needs memory, but it also needs brakes. Add guardrails that cap novelty per send batch and pause when negative signals spike.
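One such brake is a per-batch cap on how many messages share one subject line. The sketch assumes a hypothetical 20% cap. It defers overflow messages to a later batch instead of dropping them. The cap and the deferral policy are both illustrative. The same counting pattern could cap the share of untested variants in a batch.

```python
from collections import Counter
from math import floor


def apply_reuse_cap(subjects: list[str], max_share: float = 0.2) -> tuple[list[int], list[int]]:
    """Split message indices into (send_now, defer).

    No subject line is used more than max_share * batch size times in this
    batch; at least one use per subject is always allowed.
    """
    limit = max(1, floor(len(subjects) * max_share))
    used = Counter()
    send_now, defer = [], []
    for i, subject in enumerate(subjects):
        if used[subject] < limit:
            used[subject] += 1
            send_now.append(i)
        else:
            defer.append(i)
    return send_now, defer


subjects = ["Quick question"] * 6 + ["Idea for your ops team"] * 4
send_now, defer = apply_reuse_cap(subjects)
print(send_now)  # [0, 1, 6, 7]
print(defer)     # [2, 3, 4, 5, 8, 9]
```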

As the loop matures, you will hear a quiet click. Fewer last-minute edits. Fewer 11pm domain resets. More stable reply-to-meeting ratios. That stability comes from refusing to let the model chase metrics that do not correlate with outcomes you actually want.

Examples and applications under real constraints

Entering a new segment with thin signals

You have titles but little context. The system proposes high personalization. You set a boundary: only reference facts verified in two sources, otherwise keep the opener neutral and focus on the job-to-be-done. Replies are lower in week one but quality improves. The lesson is dull but durable. When signals are thin, reduce specificity, not standards.
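The "verified in two sources" rule can be enforced by counting distinct sources per candidate fact. Only facts that clear the bar are promoted, and otherwise the opener stays neutral. The `Claim` shape, the source labels, and the neutral wording below are hypothetical placeholders.

```python
from collections import defaultdict
from dataclasses import dataclass

# Placeholder neutral opener: focuses on the job-to-be-done, names no specifics.
NEUTRAL_OPENER = "Reaching out because teams in your role often juggle this problem."


@dataclass(frozen=True)
class Claim:
    fact: str    # normalized statement, e.g. "hiring data engineers"
    source: str  # where it was seen, e.g. "careers_page"


def verified_facts(claims: list[Claim], min_sources: int = 2) -> set[str]:
    """Return facts observed in at least `min_sources` distinct sources."""
    sources_by_fact = defaultdict(set)
    for claim in claims:
        sources_by_fact[claim.fact].add(claim.source)
    return {fact for fact, srcs in sources_by_fact.items() if len(srcs) >= min_sources}


def choose_opener(facts: set[str]) -> str:
    if facts:
        # Anchor on one verified fact; sorted() only makes the pick deterministic.
        return f"Saw that your team is {sorted(facts)[0]}."
    return NEUTRAL_OPENER


claims = [
    Claim("hiring data engineers", "careers_page"),
    Claim("hiring data engineers", "news_article"),
    Claim("hiring data engineers", "careers_page"),  # same source twice counts once
    Claim("opening a Berlin office", "social_post"),
]
print(choose_opener(verified_facts(claims)))
# Saw that your team is hiring data engineers.
```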

Event-triggered outreach with uneven data

Alerts spike when companies change leadership or tech stacks. The outreach loop tries to move fast. A misfire references an outdated change. You trim the trigger to require a recency check and add a fallback opener for stale cases. Volume drops. Complaints drop faster. The trade-off favors trust over speed.
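The recency check and fallback opener might look like this minimal sketch. A trigger is mentioned only if it was observed within a hypothetical 30-day window. Future-dated timestamps are treated as bad data rather than as fresh events. `Trigger`, the window length, and the opener wording are all assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical freshness window for event triggers.
TRIGGER_MAX_AGE = timedelta(days=30)

# Placeholder fallback used when the trigger is too old to mention.
FALLBACK_OPENER = "Reaching out with a quick question about how your team handles this today."


@dataclass
class Trigger:
    kind: str              # e.g. "leadership_change" or "stack_change"
    detail: str            # human-readable description of the event
    observed_at: datetime  # timezone-aware time the event was detected


def opener_for(trigger: Trigger, now: datetime) -> str:
    """Mention the trigger only if it passes the recency check."""
    age = now - trigger.observed_at
    # Negative ages (timestamps in the future) are treated as bad data.
    if timedelta(0) <= age <= TRIGGER_MAX_AGE:
        return f"Noticed the recent {trigger.detail}."
    return FALLBACK_OPENER


now = datetime(2026, 3, 1, tzinfo=timezone.utc)
fresh = Trigger("leadership_change", "appointment of a new COO",
                datetime(2026, 2, 20, tzinfo=timezone.utc))
stale = Trigger("stack_change", "CRM migration",
                datetime(2025, 11, 1, tzinfo=timezone.utc))
print(opener_for(fresh, now))  # Noticed the recent appointment of a new COO.
print(opener_for(stale, now) == FALLBACK_OPENER)  # True
```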

Mid-campaign course correction after bounce creep

Bounces rise on day three. The instinct is to push harder. You pause two sending lanes, re-verify a slice, and rotate to a different segment while the root cause is fixed. Meetings dip that week. The domain avoids long-term damage. Small retreat, larger save.
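Deciding which sending lanes to pause can be automated as a per-lane check that compares each lane's bounce rate with a threshold. The 3% threshold and the 200-send minimum below are hypothetical placeholders, not mailbox-provider guidance. Set them from your own baseline.

```python
from dataclasses import dataclass


@dataclass
class LaneStats:
    lane: str
    sent: int
    bounced: int


def lanes_to_pause(stats: list[LaneStats], max_bounce_rate: float = 0.03,
                   min_sent: int = 200) -> list[str]:
    """Return lanes whose bounce rate exceeds the threshold.

    Lanes with fewer than `min_sent` sends are skipped so that a handful of
    early bounces does not trigger a pause on noise alone.
    """
    paused = []
    for s in stats:
        if s.sent >= min_sent and s.bounced / s.sent > max_bounce_rate:
            paused.append(s.lane)
    return paused


stats = [
    LaneStats("lane-a", 500, 30),  # 6% bounce rate
    LaneStats("lane-b", 500, 5),   # 1%
    LaneStats("lane-c", 400, 20),  # 5%
    LaneStats("lane-d", 50, 10),   # too few sends to judge
]
print(lanes_to_pause(stats))  # ['lane-a', 'lane-c']
```

The minimum-sample rule trades a slightly later reaction for fewer false alarms. That matches the "small retreat" spirit better than pausing on the first few bounces.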

Personalization that overshoots

An email names a minor blog post and draws the wrong conclusion. The recipient calls it out. You rewrite the rule: only anchor on material that aligns with the recipient’s scope of authority. The error rate drops because the model stops grabbing trivia to seem clever.
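The rewritten rule, anchor only on material within the recipient's scope of authority, can be expressed as a topic filter keyed by role. The role-to-topic mapping here is entirely hypothetical. In practice it would come from your own segmentation.

```python
# Hypothetical mapping from role to topics that role plausibly owns.
SCOPE_BY_ROLE: dict[str, set[str]] = {
    "vp_engineering": {"hiring", "infrastructure", "developer_tooling"},
    "head_of_finance": {"budgeting", "procurement", "reporting"},
}


def in_scope_anchors(role: str, candidates: list[tuple[str, str]]) -> list[str]:
    """Keep only anchor texts whose topic falls within the role's scope.

    `candidates` are (topic, anchor_text) pairs. An unknown role gets no
    anchors, which pushes the message back to a neutral opener.
    """
    scope = SCOPE_BY_ROLE.get(role, set())
    return [text for topic, text in candidates if topic in scope]


candidates = [
    ("company_culture", "your post about the office book club"),
    ("infrastructure", "your migration to a new data platform"),
]
print(in_scope_anchors("vp_engineering", candidates))
# ['your migration to a new data platform']
```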

Tables and comparisons

| Practice | Students/Beginners | Experienced Practitioners |
|---|---|---|
| Success metric | Opens and positive replies | Starts-of-conversation leading to next step |
| Personalization | Deep references per contact | Selective, verifiable, tied to the ask |
| Data gaps | Filled with guesswork | Rejected or routed to neutral messaging |
| Cadence | Front-load volume to hit targets | Stable send density with pause thresholds |
| Testing | Many changes at once | One variable at a time with lineage |
| Review | Launch-only spot checks | Continuous sampling and drift alarms |
| Model updates | Chasing recent winners | Guardrailed updates tied to verified lift |

FAQ

How much personalization is enough?

Enough to prove relevance without inventing facts. One grounded tie-in beats three shaky specifics.

What should I track beyond opens and replies?

Thread depth, soft declines, meeting acceptance, and negative signals like complaints and unsubscribes.

Can I run AI cold email outreach without human review?

Not responsibly. Keep at least a sampling gate, especially when segments or prompts change.
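A sampling gate can route a fixed fraction of outgoing drafts to a human reviewer, with a higher rate right after a segment or prompt changes. The 5% and 25% rates below are hypothetical. Hashing the message id is one option among several; it makes sampling deterministic, so the same draft is always routed the same way. This sketch uses Python's standard `hashlib`.

```python
import hashlib


def needs_review(message_id: str, recently_changed: bool,
                 base_rate: float = 0.05, change_rate: float = 0.25) -> bool:
    """Deterministically decide whether a draft goes to human review.

    The id is hashed and mapped to a stable number in [0, 1); drafts that
    land below the active rate are sampled. The rate is raised while a
    segment or prompt change is still fresh.
    """
    rate = change_rate if recently_changed else base_rate
    digest = hashlib.sha256(message_id.encode("utf-8")).digest()
    # Keep the top 53 bits so the division is exact and stays below 1.0.
    bucket = (int.from_bytes(digest[:8], "big") >> 11) / 2**53
    return bucket < rate


sampled = sum(needs_review(f"msg-{i}", recently_changed=False) for i in range(10_000))
print(sampled)  # roughly 500 of 10,000 at the 5% base rate
```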

How do I prevent hallucinated context?

Restrict claims to verified fields and public facts. Add a rule that any unverified slot defaults to neutral language.
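The "unverified slot defaults to neutral language" rule maps naturally onto template rendering where each slot carries a neutral fallback. This sketch uses Python's standard `string.Template`. The slot names, template text, and fallback phrases are hypothetical.

```python
from string import Template

TEMPLATE = Template("Hi $first_name, $context_line Would a short note on $topic be useful?")

# Neutral fallbacks used whenever a slot is missing or unverified (hypothetical wording).
NEUTRAL = {
    "first_name": "there",
    "context_line": "I work with teams in similar roles.",
    "topic": "how peers approach this",
}


def render(values: dict[str, str], verified: set[str]) -> str:
    """Fill each slot only from verified values; everything else goes neutral."""
    slots = {
        name: values[name] if name in verified and name in values else fallback
        for name, fallback in NEUTRAL.items()
    }
    return TEMPLATE.substitute(slots)


print(render(
    {"first_name": "Dana", "context_line": "Congrats on the Series B.", "topic": "onboarding time"},
    verified={"first_name", "topic"},
))
# Hi Dana, I work with teams in similar roles. Would a short note on onboarding time be useful?
```

The unverified funding claim never reaches the message, even though a value was available for that slot.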

What’s the fastest way to recover from a deliverability dip?

Pause the riskiest lanes, verify a subset, lower send density, and resume with safer segments while you fix root causes.
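Resuming at lower send density usually means ramping back up gradually rather than snapping back to the old volume. This sketch computes a hypothetical daily cap. It starts at a percentage of the pre-incident volume and grows by a fixed percentage after each clean day. It drops back to the floor if negative signals return. The starting percentage, growth rate, and reset rule are all illustrative assumptions. Integer arithmetic keeps the caps exact.

```python
def next_daily_cap(current_cap: int, target_cap: int, clean_day: bool,
                   floor_cap: int, growth_pct: int = 20) -> int:
    """Compute tomorrow's send cap during recovery.

    After a clean day (no spike in bounces or complaints), grow by
    `growth_pct` percent up to the pre-incident target. After a bad day,
    drop back to the floor.
    """
    if not clean_day:
        return floor_cap
    grown = current_cap * (100 + growth_pct) // 100
    # Always move up by at least one so very small caps still recover.
    return min(target_cap, max(grown, current_cap + 1))


def ramp(target_cap: int, start_pct: int, days: list[bool]) -> list[int]:
    """Return the cap for day 0 followed by the cap after each observed day."""
    floor_cap = max(1, target_cap * start_pct // 100)
    cap = floor_cap
    caps = [cap]
    for clean in days:
        cap = next_daily_cap(cap, target_cap, clean, floor_cap)
        caps.append(cap)
    return caps


print(ramp(1000, 25, [True, True, False, True]))  # [250, 300, 360, 250, 300]
```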

Accountability is shifting from copy to systems thinking

The pressure is moving away from clever subject lines and toward how your loop handles uncertainty, novelty, and risk. Teams are judged by the steadiness of outcomes more than the peaks.

If there is a progression here, it’s from writing emails to managing a learning system. The work looks quieter. The results last longer.