Prompt Engineering is still on job boards in 2026. The titles are louder than the work. The work is broader than the titles.

Executive Summary

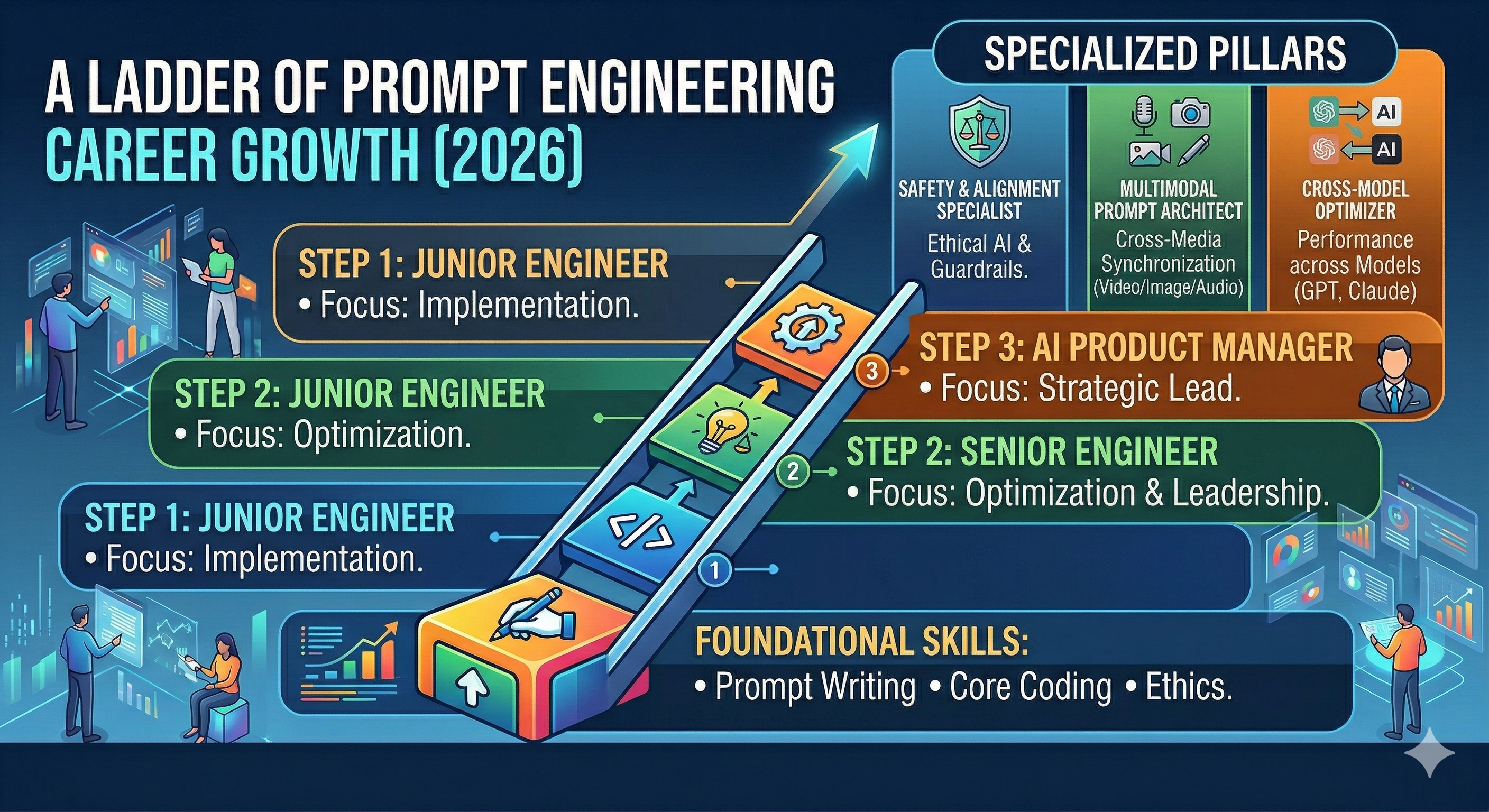

Job postings mentioning prompt engineering are real, but they split into three patterns: standalone titles, hybrid roles, and embedded responsibilities. The last two dominate.

The core signal across postings: companies want reliability and speed with GenAI features, not just clever prompts. Evaluation and cross-team handoffs decide outcomes.

Standalone “Prompt Engineer” titles appear, though limited and often research-leaning.

Most postings blend prompt work with product, data, or platform responsibilities.

Career durability hinges on systems thinking, not trick prompts.

Job ads over-index on creativity; day-one work over-indexes on measurement and change control.

Readers will leave with a clear sense of how postings map to real work, where failure shows up, and how to evaluate fit.

Introduction

Picture a team under a quarterly deadline. A feature needs a natural-language interface. A manager scans resumes for someone who can “make the model behave.” A candidate reads a posting that promises impact, fast learning, and ownership of prompts. Both are hoping the other will solve uncertainty.

This is where prompt engineering sits now. It’s the craft of shaping model behavior with instructions, examples, and constraints. It’s trending because GenAI launched fast, then ran into reliability, safety, and cost. It is becoming necessary because teams don’t get second chances on user trust, and models don’t always cooperate.

So, is prompt engineering a real career in 2026? Job boards show a mixed picture. The phrase travels well in headlines and requisitions. In practice, the role flexes with product scope, data maturity, and whether a company treats evaluation as a discipline. The keyword gets you an interview. The system you build keeps the job.

Where the title fits and where it breaks inside real teams

Across current postings, three shapes repeat: a pure “Prompt Engineer” focusing on instruction craft and experiments; a hybrid that owns prompts plus adjacent duties; and an embedded responsibility inside product or data roles. The boundaries are fuzzy; the outcomes aren’t.

How the role fragments across teams

Boundaries that matter:

Product vs research: Some postings want fast prototypes; others expect production-grade guardrails. The same title can mean very different expectations.

Individual contribution vs platform stewardship: A job may start with one feature and drift into maintaining libraries and conventions for multiple teams.

Discovery vs maintenance: Early wins come from clever prompt structures. Long-term wins come from tracking changes and measuring impact.

Failure patterns that show up regardless of title:

Brittle prompts that degrade when data shifts or when models update.

Untracked prompt edits that quietly change user behavior without oversight.

Evaluation debt: teams ship without tests, then spend cycles debugging behavior they can’t reproduce.

Practical boundaries for the role:

Ownership of behavior must include an evaluation loop, not just authoring text.

“Creativity” must coexist with a change process. If no change process exists, you will build one.

If the posting separates prompt work from data and product decisions, expect friction. If it acknowledges collaboration, you have a path.

So, yes, prompt engineering appears as a career in 2026, but the durable version is a function that pairs instruction design with measurement, governance, and an understanding of how the broader product works. Titles vary. The work converges.

From job ad to day one: how the work really unfolds

Most postings read as aspirational. In practice, the work unfolds like this:

The first weeks are about alignment, not magic prompts

You translate a vague requirement into testable behaviors. You define success criteria small enough to measure. You collect messy examples, surface edge cases, and set a baseline before changing anything.
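One way to picture "testable behaviors" and a baseline is a handful of small checks scored against outputs you have already collected. The sketch below is illustrative only: the check names, the word limit, and the `baseline` helper are assumptions made for the example, not a standard from any posting or library.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class BehaviorCheck:
    """One narrow, measurable behavior (hypothetical structure)."""
    name: str
    passes: Callable[[str], bool]


# Hypothetical checks for a short support-reply feature.
CHECKS = [
    BehaviorCheck("stays_under_80_words", lambda out: len(out.split()) <= 80),
    BehaviorCheck("no_unfilled_placeholders",
                  lambda out: "{" not in out and "}" not in out),
    BehaviorCheck("ends_with_punctuation",
                  lambda out: out.rstrip().endswith((".", "?", "!"))),
]


def baseline(outputs: list[str], checks: list[BehaviorCheck]) -> dict[str, float]:
    """Return the pass rate per check over recorded outputs."""
    if not outputs:
        raise ValueError("collect at least one output before measuring")
    return {
        check.name: sum(check.passes(out) for out in outputs) / len(outputs)
        for check in checks
    }


if __name__ == "__main__":
    recorded = ["Please restart the app.", "Hello {name}"]
    for name, rate in baseline(recorded, CHECKS).items():
        print(f"{name}: {rate:.0%}")
```

Recording these rates before touching the prompt gives later changes something to be compared against.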

Prototyping is cheap, but evaluation is the bottleneck

Drafts come quickly. The wall appears when you need to prove consistency. You’ll assemble a lightweight evaluation set and run iterations until variance calms down. Without this loop, you’re guessing.
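The loop described here can be sketched as repeated runs over a small evaluation set, tracking both the average pass rate and how much it moves between runs. This is a minimal sketch under stated assumptions: `generate` stands in for whatever model call a team actually uses, the stub model is invented so the example runs offline, and the thresholds are placeholders rather than recommended values.

```python
import random
import statistics
from typing import Callable

Case = tuple[str, Callable[[str], bool]]  # (input text, check on the output)


def run_eval(generate: Callable[[str], str], cases: list[Case],
             runs: int = 5) -> list[float]:
    """Run the whole evaluation set several times; return one pass rate per run."""
    rates = []
    for _ in range(runs):
        passed = sum(1 for text, check in cases if check(generate(text)))
        rates.append(passed / len(cases))
    return rates


def is_stable(rates: list[float], min_mean: float = 0.9,
              max_spread: float = 0.05) -> bool:
    """Hypothetical gate: high average pass rate and low run-to-run spread."""
    return (statistics.mean(rates) >= min_mean
            and statistics.pstdev(rates) <= max_spread)


def make_stub_model(seed: int, flake_rate: float) -> Callable[[str], str]:
    """Stand-in for a real model: upper-cases input, sometimes returns nothing."""
    rng = random.Random(seed)

    def generate(text: str) -> str:
        return "" if rng.random() < flake_rate else text.upper()

    return generate


if __name__ == "__main__":
    cases = [("hello", lambda out: out == "HELLO"),
             ("refund", lambda out: out == "REFUND")]
    rates = run_eval(make_stub_model(seed=1, flake_rate=0.2), cases)
    print(rates, "stable" if is_stable(rates) else "keep iterating")
```

The point of the spread check is that a single good run proves little; a prompt is only ready when repeated runs agree with each other.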

Friction shows up at handoffs

Legal and safety reviews ask for traceability. Product asks for latency and cost guardrails. Support wants failure modes that users understand. If your prompt changes, who approves it? How is roll-forward or roll-back tracked?
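The approval and rollback questions above can be made concrete with a small change log for a single prompt. The sketch below is one possible shape, not an established tool: `PromptHistory`, its fields, and the rule that an author cannot approve their own change are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PromptVersion:
    version: int
    text: str
    author: str
    approved_by: str
    note: str


class PromptHistory:
    """Append-only history for one prompt, with an explicit active version."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._versions: list = []
        self._active: Optional[int] = None

    def publish(self, text: str, author: str, approved_by: str,
                note: str) -> PromptVersion:
        # Assumed policy: every change needs a second person to sign off.
        if not approved_by or approved_by == author:
            raise ValueError("a change needs an approver other than its author")
        version = PromptVersion(len(self._versions) + 1, text, author,
                                approved_by, note)
        self._versions.append(version)
        self._active = version.version
        return version

    def rollback(self, to_version: int) -> PromptVersion:
        """Point production back at an earlier version without deleting history."""
        if not 1 <= to_version <= len(self._versions):
            raise KeyError(f"unknown version {to_version}")
        self._active = to_version
        return self.active()

    def active(self) -> PromptVersion:
        if self._active is None:
            raise LookupError("nothing published yet")
        return self._versions[self._active - 1]


if __name__ == "__main__":
    history = PromptHistory("ticket_summary")
    history.publish("Summarize the ticket.", "ana", "ben", "initial version")
    history.publish("Summarize the ticket in two sentences.", "ana", "ben",
                    "tighter length")
    history.rollback(1)
    print(history.active().text)
```

Keeping old versions in place and moving only the active pointer means a rollback is itself traceable, which is what reviewers tend to ask for.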

Scale changes the job

When one feature becomes many, you move from bespoke prompts to shared patterns. You enforce naming, versioning, and reuse. You add a light governance layer so experiments don’t break production behavior.

At scale, your value is not a bag of clever techniques. It’s a reliable path from idea to behavior to shipped change with minimal surprise.

Examples and applications with the good, the messy, and the rework

Search augmentation in a content-heavy product

A posting promises ownership of “prompt pipelines.” You join and discover three teams each running their own versions. You standardize examples, add a basic evaluation set, and align on a modest success metric. Results improve, though a minor update derails a subset of queries. The fix isn’t a new prompt; it’s guardrails for ambiguous cases.

Automated drafting for user-facing outputs

The ad emphasizes creativity. Day one, support escalations flag tone mismatches. You introduce a constrained style pattern and a small feedback channel to collect failures. Output is less flashy but more dependable. Leadership asks for more coverage. You push for quality thresholds before expanding scope.

Internal assistant for team workflows

The role reads like greenfield. In reality, the assistant must work with existing systems and permissions. Early demos land well. Adoption stalls when a niche case fails. You route niche scenarios to fallbacks and improve guidance to users. The assistant grows gradually, not by trying to be universal on day one.
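Routing niche scenarios to a fallback can be as simple as checking whether a request falls inside the scope the assistant is known to handle. The following is a rough sketch, assuming keyword matching is good enough for a first pass; the topic list, the `Reply` type, and the fallback message are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional

SUPPORTED_TOPICS = {"expenses", "vacation"}  # hypothetical supported scope
FALLBACK_MESSAGE = "I can't help with that yet. Please contact the owning team."


@dataclass(frozen=True)
class Reply:
    source: str  # "assistant" or "fallback"
    text: str


def detect_topic(request: str) -> Optional[str]:
    """Naive keyword match; a real system would likely need something better."""
    words = {word.strip(".,?!") for word in request.lower().split()}
    for topic in sorted(SUPPORTED_TOPICS):
        if topic in words:
            return topic
    return None


def answer(request: str, assistant: Callable[[str, str], str]) -> Reply:
    """Send in-scope requests to the assistant, everything else to a fallback."""
    topic = detect_topic(request)
    if topic is None:
        return Reply("fallback", FALLBACK_MESSAGE)
    return Reply("assistant", assistant(topic, request))


if __name__ == "__main__":
    def stub_assistant(topic: str, request: str) -> str:
        return f"[{topic}] guidance for: {request}"

    print(answer("How do I file expenses?", stub_assistant))
    print(answer("Reset my VPN token", stub_assistant))
```

Growing the assistant then means adding a topic to the supported scope once it passes evaluation, rather than letting every request reach the model.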

Tables and comparisons

How beginners and experienced practitioners approach the same responsibilities:

| Area | Students/Beginners | Experienced Practitioners |
| --- | --- | --- |
| Scoping | Start building quickly with broad goals. | Pin down narrow behaviors first, define measurable outcomes. |
| Prompt Design | Rely on clever phrasing and examples. | Design for drift, modularize patterns, plan for change. |
| Evaluation | Manual spot checks, subjective judgments. | Small but consistent tests, tracked over time. |
| Failure Handling | Keep iterating prompts when results wobble. | Add fallbacks, clarify inputs, adjust scope before rewriting. |
| Collaboration | Work in isolation until a demo is ready. | Align early with product, data, and review processes. |

FAQ

Are standalone “Prompt Engineer” jobs still posted?

Yes, though the majority of roles blend prompt work with adjacent responsibilities.

What’s the quickest signal a posting is realistic?

It mentions evaluation, change control, and collaboration expectations, not just creativity.

Do I need deep model expertise?

Not always. What’s essential is a reliable process for shaping behavior and measuring it.

Where do these roles usually sit?

Often with product or data-aligned teams, sometimes as a small platform function.

How do I stand out when applying?

Show a repeatable path from problem framing to stable outcomes, with trade-offs explained.

Rising pressure: from prompt craft to behavior accountability

The pressure is shifting from inventing prompts to owning behavior. Teams want fewer surprises and clearer rollback paths. Job boards hint at this in the fine print.

As responsibilities expand, the career becomes less about singular expertise and more about stewarding a small system: scoped behaviors, shared patterns, measurable changes. That’s where prompt engineering stops being a novelty and starts looking like durable work.