Let’s be real: building a demo that uses an LLM is easy. You grab an API key, write twenty lines of Python, and suddenly you have a chatbot that writes poems about your SaaS product.

But taking that "cool demo" and turning it into a production-grade LLM architecture? That’s where the wheels usually fall off.

At DEVOT AI, we’ve seen plenty of founders and CTOs get stuck in what we call the "RAG Valley of Death." Their local prototype works great, but the moment it hits 1,000 concurrent users or complex enterprise data, it becomes slow, expensive, and hallucination-prone.

If you want to build AI that actually moves the needle, you need more than a prompt. You need an engineering-first architecture. This guide breaks down how to design an LLM system that scales, stays secure, and doesn't bankrupt you in token costs.

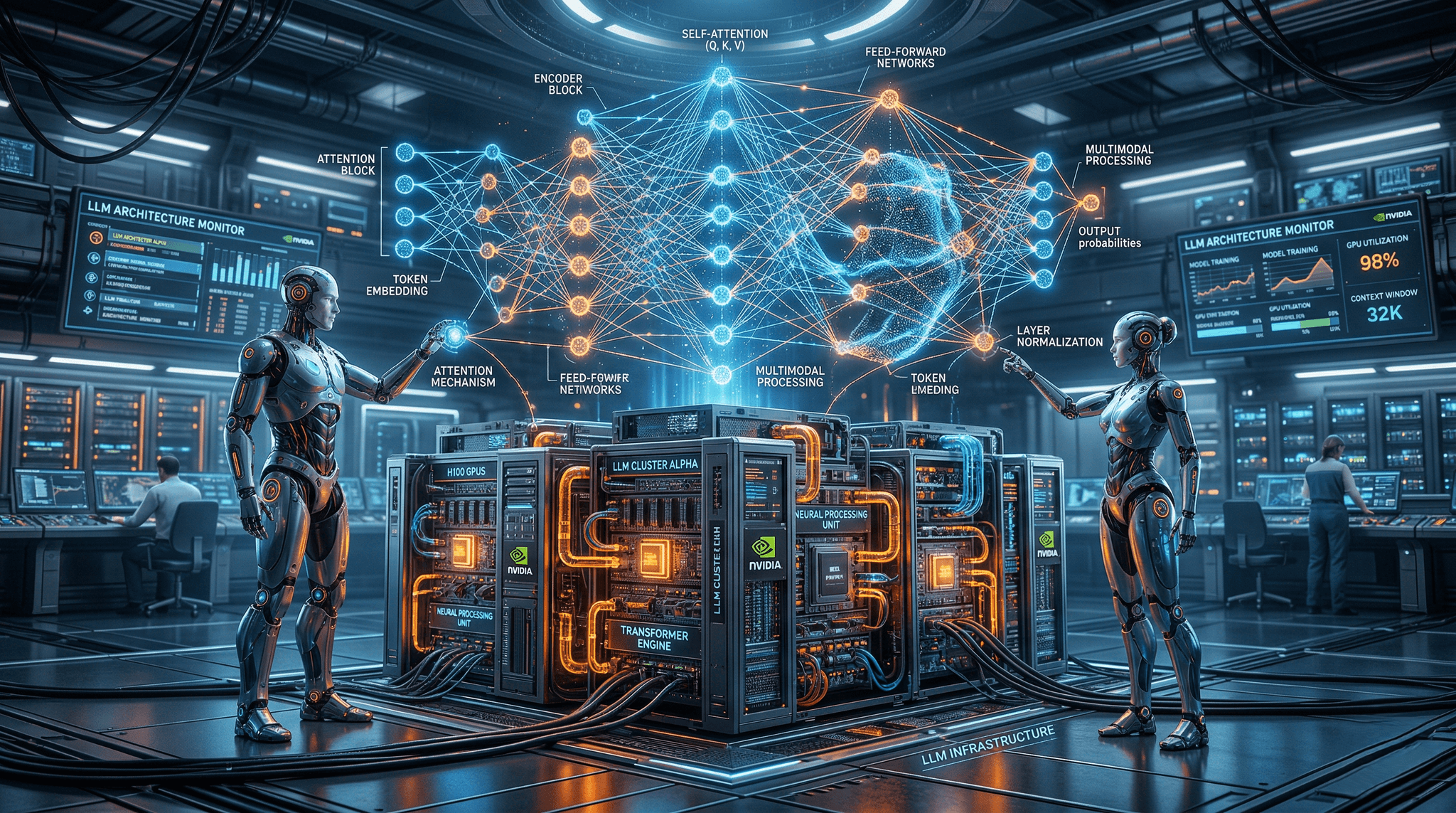

1. The Anatomy of a Production LLM Architecture

Before we talk about scaling, we need to understand the structural foundation. Most people think of an LLM as a "black box," but for an engineer, it’s a series of modular components.

The Foundation: Transformer Blocks

The backbone of modern LLM architecture is the Transformer. Unlike older neural networks such as RNNs, which process text one token at a time, Transformers use a self-attention mechanism. This allows the model to process an entire sequence of text in parallel (during training and prompt processing) and relate any two words directly, regardless of how far apart they are in a sentence.
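To make "self-attention" concrete, here is a minimal, dependency-free sketch of scaled dot-product attention, softmax(Q K^T / sqrt(d_k)) V. It is a simplification: real Transformers derive Q, K and V from learned projection matrices, use multiple attention heads, and compute everything as batched matrix multiplications on a GPU. Here we assume the raw token vectors are passed in directly and loop over queries for readability; `softmax` and `self_attention` are our own illustrative names.

```python
import math

def softmax(xs: list[float]) -> list[float]:
    # Subtract the max before exponentiating for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(q: list[list[float]], k: list[list[float]], v: list[list[float]]) -> list[list[float]]:
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V.

    q, k and v hold one vector per token. Every query is scored against
    every key, so the distance between two tokens plays no role.
    """
    d_k = len(k[0])
    output = []
    for query in q:
        # Similarity of this token's query to every token's key.
        scores = [sum(a * b for a, b in zip(query, key)) / math.sqrt(d_k) for key in k]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        output.append([sum(w * val[j] for w, val in zip(weights, v)) for j in range(len(v[0]))])
    return output

# Three toy tokens with 2-dimensional vectors, used as Q, K and V at once.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
print(self_attention(x, x, x))
```

Because each query is compared with every key, the first token can draw on the last one just as easily as on its neighbor. In production the per-query loop becomes a single matrix multiply, which is what lets the whole sequence be processed in parallel.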

The Embedding Layer

This is where the magic starts. The embedding layer converts raw text, split into tokens, into high-dimensional vectors. For instance, the largest GPT-3 model uses 12,288 dimensions for each token. These vectors capture the semantic meaning of the input and, combined with the attention layers that follow, allow the model to understand that "bank" in a financial context is different from a "river bank."
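Under the hood, the embedding layer itself is just a lookup table: each token ID selects one row of a learned matrix. The sketch below assumes a toy four-word vocabulary and random weights in place of trained ones; `VOCAB`, `EMBEDDINGS`, `embed` and `cosine` are hypothetical names for illustration.

```python
import math
import random

# Toy vocabulary: token -> row index in the embedding table.
VOCAB = {"the": 0, "bank": 1, "river": 2, "loan": 3}
DIM = 8  # GPT-3 175B uses 12,288; 8 keeps the example readable

# ASSUMPTION: random weights stand in for values learned during training.
rng = random.Random(0)
EMBEDDINGS = [[rng.gauss(0.0, 1.0) for _ in range(DIM)] for _ in VOCAB]

def embed(tokens: list[str]) -> list[list[float]]:
    """Embedding layer: map each token to its row in the table."""
    return [EMBEDDINGS[VOCAB[t]] for t in tokens]

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity, the usual way to compare embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

financial = embed(["the", "bank"])
river = embed(["river", "bank"])
print(cosine(financial[1], river[1]))
```

Note that "bank" receives the identical vector in both phrases: the lookup alone cannot tell the two senses apart. The distinction emerges in the attention layers stacked on top, which mix in information from neighboring tokens.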

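The "Brain": Mixture-of-Experts (MoE)

If you’re looking at top-tier models like Mixtral (or GPT-4, which is widely reported, though not officially confirmed, to use MoE), you’re likely dealing with a Mixture-of-Experts architecture. Instead of one massive, dense network, each MoE layer contains multiple "specialist" sub-networks. For every token, a learned router sends the input to a small number of the most relevant experts; Mixtral, for example, activates 2 of its 8 experts per layer.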

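The Benefit: Because only a fraction of the parameters is active for each token, you get much of the quality of a massive model at an inference cost closer to that of a smaller one. The trade-off is that all experts typically still need to be held in memory.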
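Here is a sketch of top-k routing for a single token, assuming a linear router and toy experts. Taking the softmax over only the selected experts' logits follows the scheme published for Mixtral, but `moe_layer`, the expert functions and the weights are all illustrative.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]

def moe_layer(token, router_weights, experts, top_k=2):
    """Route one token vector to its top_k experts and mix their outputs.

    router_weights: one weight vector per expert (a linear router).
    experts: callables mapping a vector to a vector of the same size.
    """
    # Router logit per expert: dot product of the token with that expert's weights.
    logits = [sum(t * w for t, w in zip(token, wv)) for wv in router_weights]
    # Keep only the top_k experts; the others are never evaluated (the compute saving).
    chosen = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:top_k]
    gates = softmax([logits[i] for i in chosen])
    out = [0.0] * len(token)
    for gate, i in zip(gates, chosen):
        y = experts[i](token)
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out, chosen

# Four toy "experts" that each scale the input by a different factor.
experts = [lambda v, s=s: [s * x for x in v] for s in (1.0, 2.0, 3.0, 4.0)]
router_weights = [[0.0, 0.0], [1.0, 0.0], [2.0, 0.0], [3.0, 0.0]]
output, used = moe_layer([1.0, 1.0], router_weights, experts)
print(used, output)
```

Only two of the four experts run for this token; in a real model the router weights are learned jointly with the experts.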

2. Moving from Experimental RAG to Production-Grade Systems

Retrieval-Augmented Generation (RAG) is the industry standard for giving LLMs access to private data. However, a "naive" RAG setup—where you just dump PDFs into a vector database—will fail in production.

The "Spec-Driven" Approach to Data

At DEVOT AI, we advocate for Spec-Driven AI. Before we write a single line of code, we define the data specs: How is the data chunked? How much do chunks overlap? Are we using metadata filtering?
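Those spec questions map directly onto parameters. Below is a minimal sketch of a sentence-window chunker, assuming a naive regex sentence splitter; `chunk_text`, `max_sentences` and `overlap` are hypothetical names. It is a step up from fixed character counts but is not semantic chunking, which would additionally split where the embedding similarity between adjacent sentences drops.

```python
import re

def chunk_text(text, max_sentences=4, overlap=1, metadata=None):
    """Split text into overlapping windows of whole sentences.

    max_sentences and overlap are the values a data spec pins down up front.
    Each chunk carries metadata so the vector store can filter on it later.
    """
    if not 0 <= overlap < max_sentences:
        raise ValueError("overlap must be >= 0 and smaller than max_sentences")
    # ASSUMPTION: naive splitter that breaks after ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    step = max_sentences - overlap
    chunks = []
    for start in range(0, len(sentences), step):
        window = sentences[start:start + max_sentences]
        chunks.append({
            "text": " ".join(window),
            "metadata": dict(metadata or {}),
            "index": len(chunks),
        })
        # Stop once the window has reached the last sentence.
        if start + max_sentences >= len(sentences):
            break
    return chunks

for c in chunk_text("A. B. C. D. E.", max_sentences=3, overlap=1, metadata={"source": "wiki"}):
    print(c["index"], c["text"])
```

The one-sentence overlap means a fact that straddles a chunk boundary still appears whole in at least one chunk.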

In a production-grade LLM architecture, your RAG pipeline should include:

Pre-processing: Cleaning noisy enterprise data (Slack logs, messy Confluence pages).

Advanced Chunking: Using semantic chunking rather than just fixed character counts.

Hybrid Search: Combining vector search (for meaning) with keyword search (for specific terms like product IDs).

Re-ranking: Using a secondary model to verify the top results before feeding them to the LLM.
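A common way to implement the hybrid-search step is reciprocal rank fusion (RRF), which merges rankings without having to normalize incompatible scores. This sketch assumes you already have one ranked list of chunk IDs from the vector index and another from a keyword index such as BM25; `reciprocal_rank_fusion` is our own name, and RRF is one fusion option among several.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of document IDs into a single ranking.

    Each document scores sum(1 / (k + rank)) over every list it appears in,
    with 1-based ranks. k=60 is a commonly used default that damps the
    influence of the very top positions.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda d: scores[d], reverse=True)

vector_hits = ["a", "b", "c"]   # ranked by embedding similarity
keyword_hits = ["x", "a", "c"]  # ranked by keyword match, e.g. an exact product ID
print(reciprocal_rank_fusion([vector_hits, keyword_hits]))
```

Documents that both retrievers agree on ("a" and "c") rise to the top, while the keyword-only hit "x" still makes the cut. The fused list is what you would then hand to the re-ranker.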

3. Engineering Rigor: The DEVOT AI USP

Most AI agencies are "prompt engineers." We are AI Software Engineers.

When we talk about LLM architecture, we aren't just talking about the model. We’re talking about the infrastructure surrounding it. This is what we call "Production-Grade Engineering."

The Spec-Driven Development Cycle

We don't do "guess and check." We use a rigorous spec-driven process:

System Architecture Specs: Defining every API endpoint, database schema, and LLM fallback strategy.

Fixed-Price Certainty: Because we spend so much time on the specs, we can offer fixed prices and clear timelines. No "infinite loops" of development.

Performance Benchmarking: We test latency, throughput, and accuracy before shipping.
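An "LLM fallback strategy" in a spec usually boils down to an ordered list of providers plus retry rules. Here is a minimal sketch under that assumption: providers are plain callables so the example stays vendor-neutral, and `complete_with_fallback` and `AllProvidersFailed` are hypothetical names. It catches every exception for brevity; a real spec would list which errors (timeouts, rate limits) justify a retry.

```python
import time

class AllProvidersFailed(Exception):
    """Raised when every provider in the chain has been exhausted."""

def complete_with_fallback(prompt, providers, retries=1, backoff_s=0.5):
    """Try each provider in order, retrying transient failures before moving on.

    providers: list of (name, callable) pairs, where callable(prompt) -> str.
    Returns (provider_name, completion).
    """
    errors = []
    for name, call in providers:
        for attempt in range(retries + 1):
            try:
                return name, call(prompt)
            except Exception as exc:  # narrow this to provider-specific errors in production
                errors.append(f"{name} attempt {attempt + 1}: {exc}")
                if attempt < retries:
                    time.sleep(backoff_s * (2 ** attempt))  # exponential backoff
    raise AllProvidersFailed("; ".join(errors))

def flaky_primary(prompt):
    raise TimeoutError("timeout")

def backup_model(prompt):
    return "ok: " + prompt

print(complete_with_fallback("hi", [("primary", flaky_primary), ("backup", backup_model)], backoff_s=0))
```

Logging which provider actually answered also feeds directly into the latency and accuracy benchmarks.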

4. Scalability and Cost Optimization

Token costs can kill a startup faster than a lack of product-market fit. An unoptimized LLM architecture is basically a blank check to OpenAI or Anthropic.

Token Management Strategies

Semantic Caching: If a user asks the same question (or one that means the same thing) that was asked 5 minutes ago, why hit the LLM again? We implement caching layers (for example, on Redis) that match incoming queries against previous ones by embedding similarity and return the stored answer.

Model Routing: You don't need GPT-4o to summarize a 200-word email. A production-grade architecture uses "router logic" to send simple tasks to smaller, cheaper models (like Llama 3 or Claude Haiku) and reserves the "big guns" for complex reasoning. Model distillation, training a small model to imitate a large one, can make those cheaper models even more capable.

Quantization: For self-hosted models, quantization reduces the numerical precision of model weights (for example, from 16-bit to 8-bit or 4-bit), allowing you to run large models on much cheaper hardware, often with only a small loss in accuracy.
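Semantic caching and model routing fit together naturally in one request path: check the cache first, and only on a miss decide which model to pay for. The sketch below makes loudly labeled assumptions: a bag-of-words vector stands in for a real embedding model, the cache is an in-memory list rather than Redis, the routing rule is a crude keyword-and-length heuristic, and `SemanticCache`, `route`, `handle`, "small-model" and "large-model" are hypothetical names.

```python
import math
import re
from collections import Counter

def embed(text):
    # ASSUMPTION: bag-of-words counts stand in for a real embedding model,
    # so this only catches near-identical wording, not true paraphrases.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class SemanticCache:
    """In-memory cache that matches queries by vector similarity, not exact text."""

    def __init__(self, threshold=0.9):
        self.threshold = threshold  # set it too low and users get answers to other questions
        self.entries = []  # list of (vector, answer) pairs; a vector store would index these

    def get(self, query):
        q = embed(query)
        best = max(self.entries, key=lambda e: cosine(q, e[0]), default=None)
        if best is not None and cosine(q, best[0]) >= self.threshold:
            return best[1]
        return None

    def put(self, query, answer):
        self.entries.append((embed(query), answer))

# ASSUMPTION: a crude routing rule; real routers often use a small classifier.
COMPLEX_HINTS = {"analyze", "compare", "plan", "prove"}

def route(query):
    words = re.findall(r"[a-z0-9]+", query.lower())
    hard = len(words) > 50 or any(w in COMPLEX_HINTS for w in words)
    return "large-model" if hard else "small-model"

def handle(query, cache, call_model):
    """Serve from cache when possible; otherwise route, call and cache the result."""
    cached = cache.get(query)
    if cached is not None:
        return cached, "cache"
    model = route(query)
    result = call_model(model, query)
    cache.put(query, result)
    return result, model
```

With `call_model` wired to your provider clients, a repeated "How do I reset my password?" is answered from the cache on the second request, and only a query like "Analyze our churn data by region" reaches the expensive model.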

5. Security and Enterprise Readiness

CTOs lose sleep over data privacy. If your LLM architecture involves sending raw customer PII (Personally Identifiable Information) to a third-party API, you have a problem.

The Security Layer

A robust LLM architecture must include:

PII Masking: Automatically detecting and scrubbing names, emails, and credit card numbers before they leave your VPC.

Prompt Injection Defense: Implementing "guardrail" models that sit in front of your main LLM to catch malicious inputs.

SOC 2 & Privacy Compliance: Ensuring all data handling meets enterprise standards.
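The regex half of PII masking can be sketched in a few lines. These patterns are illustrative only and are our own assumption, not a complete detector: names and addresses generally need an NER model, and card detection usually adds a Luhn checksum to cut false positives from other long numbers. `PII_PATTERNS` and `mask_pii` are hypothetical names.

```python
import re

# ASSUMPTION: illustrative patterns only. Production systems typically combine
# regexes with an NER model (for names) and checksum validation (for cards).
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    # 13 to 16 digits, optionally separated by single spaces or dashes.
    "CARD": re.compile(r"\b(?:\d[ -]?){12,15}\d\b"),
}

def mask_pii(text):
    """Replace detected PII with typed placeholders before text leaves the VPC."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

print(mask_pii("Contact jane.doe@example.com, card 4111 1111 1111 1111."))
```

Typed placeholders such as `[EMAIL]` keep the sentence readable for the LLM, and if you need to restore values in the response, a mapping from placeholder to original can stay inside your own infrastructure.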

6. The Future: Agentic Workflows

We are moving away from "chatbots" and toward AI Agents. In an agentic LLM architecture, the model doesn't just talk: it does.

An agentic architecture includes:

Tool Use (Function Calling): The LLM can decide to call an API, query a SQL database, or browse the web.

Memory Layers: Giving the agent "long-term memory" so it remembers a user’s preferences across different sessions.

Iterative Loops: The agent can check its own work, realize it made a mistake, and correct it before the user ever sees the output.
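Tool use and iterative loops come together in a simple control loop: the model either requests a tool or returns a final answer, and every tool result is fed back so it can check its work. In this minimal sketch the message format (`{"tool": ..., "args": ...}` or `{"answer": ...}`) is an assumption for illustration, not any provider's actual function-calling schema, and `run_agent`, `TOOLS` and `get_order_status` are hypothetical. Memory layers are out of scope here.

```python
import json

def get_order_status(order_id: str) -> str:
    # Hypothetical tool: a real one would call an API or query a SQL database.
    return json.dumps({"order_id": order_id, "status": "shipped"})

TOOLS = {"get_order_status": get_order_status}

def run_agent(user_msg, model, max_steps=5):
    """Minimal tool-use loop.

    model(messages) must return {"tool": name, "args": {...}} to request a tool
    call, or {"answer": text} when it is done.
    """
    messages = [{"role": "user", "content": user_msg}]
    for _ in range(max_steps):  # hard cap so a confused agent cannot loop forever
        reply = model(messages)
        if "answer" in reply:
            return reply["answer"]
        tool = TOOLS.get(reply["tool"])
        result = tool(**reply["args"]) if tool else f"error: unknown tool {reply['tool']}"
        # Feed the result back so the model can verify it and choose the next step.
        messages.append({"role": "tool", "name": reply["tool"], "content": result})
    return "Stopped: step limit reached without a final answer."

def scripted_model(messages):
    # Stand-in for an LLM: call the tool once, then answer from its result.
    if messages[-1]["role"] == "user":
        return {"tool": "get_order_status", "args": {"order_id": "A17"}}
    data = json.loads(messages[-1]["content"])
    return {"answer": f"Order {data['order_id']} is {data['status']}."}

print(run_agent("Where is order A17?", scripted_model))
```

The step limit is the important design choice: it turns a potential infinite loop (and an unbounded token bill) into a graceful failure.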

7. Why Architecture Trumps "Smart" Models

Models get updated every few months. GPT-5 will eventually replace GPT-4. If your entire business logic is baked into a specific prompt for a specific model, your system is fragile.

A Production-Grade LLM Architecture is model-agnostic. It treats the LLM as a swappable component. By building a robust layer of middleware, vector databases, and evaluation frameworks, you future-proof your tech stack.
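In Python, one lightweight way to make the LLM a swappable component is a structural interface that business logic depends on, with vendor-specific adapters behind it. This sketch uses `typing.Protocol`; `ChatModel`, `EchoModel`, `BACKENDS`, `build_model` and the `llm_backend` config key are hypothetical names, and `EchoModel` stands in for a real adapter that would wrap a vendor SDK.

```python
from typing import Protocol

class ChatModel(Protocol):
    """The only LLM surface that business logic is allowed to depend on."""
    def complete(self, prompt: str) -> str: ...

class EchoModel:
    """Hypothetical stand-in backend; a real adapter would wrap a vendor SDK."""
    def __init__(self, name: str):
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"

# Registry: switching vendors becomes a config change, not a code change.
BACKENDS = {
    "primary": lambda: EchoModel("vendor-a"),
    "secondary": lambda: EchoModel("vendor-b"),
}

def build_model(config: dict) -> ChatModel:
    return BACKENDS[config["llm_backend"]]()

def summarize_ticket(ticket: str, model: ChatModel) -> str:
    # Business logic sees only the ChatModel protocol, never a vendor client.
    return model.complete(f"Summarize this support ticket in one sentence:\n{ticket}")

print(summarize_ticket("Printer is on fire", build_model({"llm_backend": "secondary"})))
```

Because `summarize_ticket` never imports a vendor SDK, moving to next year's model means writing one new adapter and re-running your evaluation suite against it.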

Ready to Build Your Production-Grade AI?

Stop playing with demos and start building software that scales. Whether you need a sophisticated RAG system, an autonomous agentic workflow, or a full-scale enterprise AI transformation, DEVOT AI has the engineering rigor to make it happen.

Contact Us today to discuss your project. 🤖