Prompt-first building is crawling out of prototypes and into day-to-day delivery. The pull is speed. The risk is everything else.

Executive Summary

Teams are pushing from prompt to production because it shortens discovery and demo loops. That works until ambiguity, data boundaries, and reliability show up.

This piece breaks down how vibe coding behaves under pressure, where it fails, and what it takes to ship responsibly without burying the speed advantage.

- Where vibe coding shines for exploration and internal tools
- Failure patterns that surface at integration, test, and scale
- A practical path from first prompt to a production gate
- Real examples with imperfect outcomes and trade-offs
- Simple comparisons of novice and seasoned approaches

Introduction

A product request lands late. Requirements are fuzzy, the deadline is not. Someone writes a prompt, hits generate, and a working stub appears. It passes the demo. The room nods. Then real data arrives, and the edges fray.

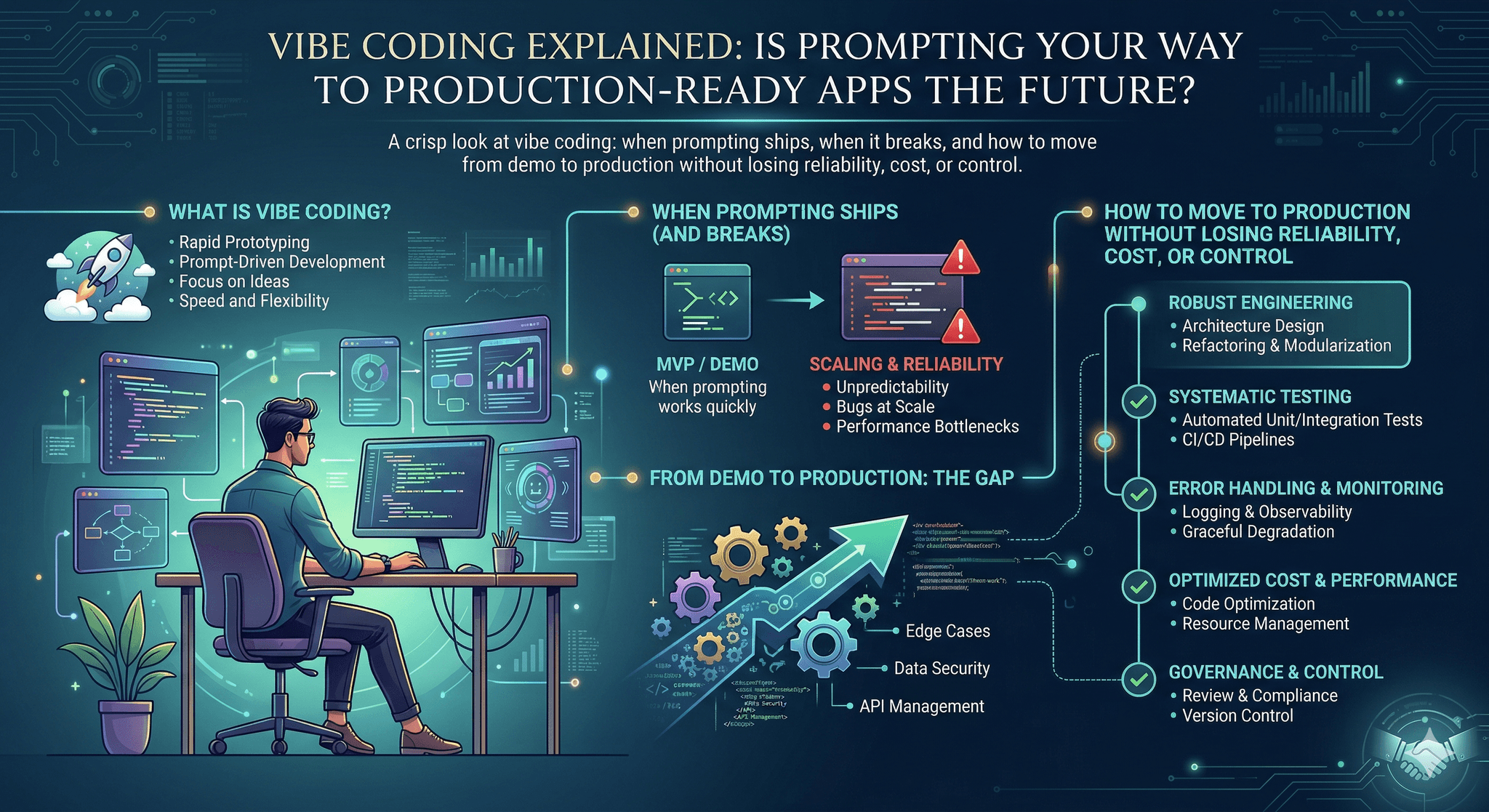

That rhythm is why vibe coding is trending. It lets teams turn intent into working flows quickly, even when inputs are incomplete or shifting. The question behind "Vibe Coding Explained: Is Prompting Your Way to Production-Ready Apps the Future?" is not about hype. It’s about whether a prompt-led path can survive contact with real traffic, governance, and on-call accountability.

Vibe coding is becoming necessary because backlogs outpace staffing, expectations keep rising, and the cost of waiting for perfect specs is higher than the cost of iterating on a rough, working thing. The trick is keeping speed without turning production into a coin flip.

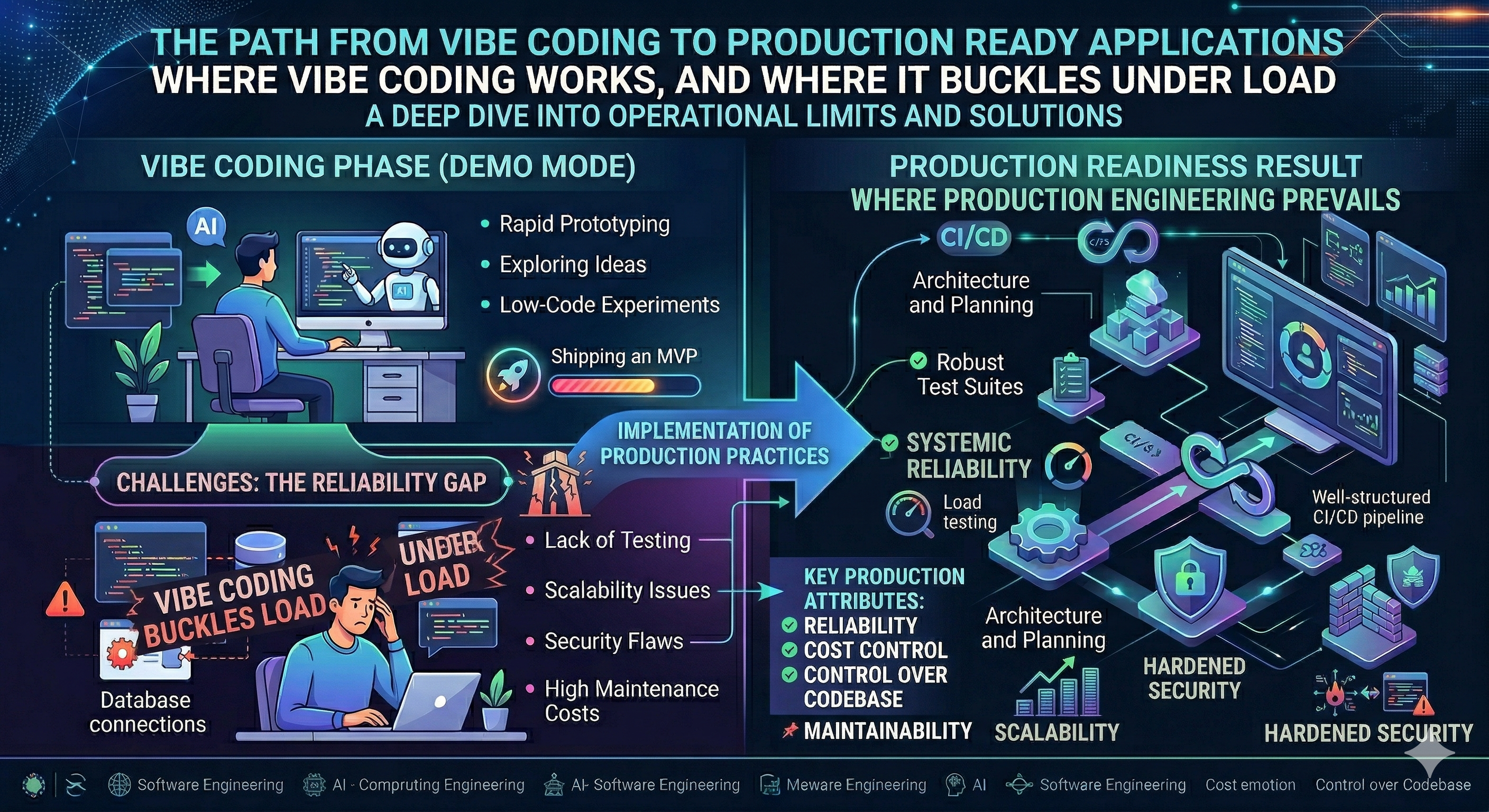

Where vibe coding works, and where it buckles under load

In live environments, vibe coding behaves like a fast solvent. It dissolves ambiguity and reveals shape quickly. It’s great for internal tools, orchestration glue, and exploratory interfaces. The failure mode appears when non-determinism meets strict interfaces, data variance, and performance budgets.

Operational map of vibe coding pressure points

Boundaries start with data. Prompts tuned on clean samples drift when real records contain odd formatting, mixed languages, or edge-case spellings. If the prompt assumes ideal inputs, you’ll see quiet failure: polite responses that are wrong but plausible.

Another boundary is determinism. Systems that must produce the same output for the same input do not like stochastic models. You can pin settings, craft stricter instructions, and apply post-processing, but you’re still negotiating with probability.

Failure patterns are predictable:

Eval mismatch: Teams validate on curated examples, then break on live traffic that doesn’t match the prompt’s implied assumptions.

Spec drift: Prompts accrete constraints over time, becoming brittle and contradictory. Small edits trigger large behavioral shifts.

Guardrail gaps: Lack of input validation, output schemas, and safe defaults causes silent data loss or malformed responses that look fine until downstream systems reject them.

Cost surprises: A prototype making ad hoc calls is cheap to run. Steady volume under production load changes both the bill and the latency curve.

Observability blind spots: Without signatures, traces, and example capture, debugging a weird output becomes guesswork.

Vibe coding thrives when humans remain in the loop and failure is low-cost. It struggles when the environment demands strict contracts, low variance, and fast recovery under stress.

From first prompt to production gate: what actually happens

Step 1: Shape the intent. Clarify success criteria, even if loosely. Decide what “good enough” looks like for the first pass and how to tell if it’s off course.

Step 2: Draft a prompt and scaffold a tiny interface. This is where the speed shows. Expect to flip between prompt, examples, and quick checks.

Friction: Hidden assumptions creep in. A phrase like “be concise” looks harmless until a downstream component expects longer explanations.

Step 3: Create an evaluation harness. Start with a small, representative set of inputs and expected behaviors. Capture failures as new tests. Keep it living, not static.

Friction: Evals that mirror the happy path give false confidence. Mix in real edge cases early, not later.
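A minimal sketch of what such a harness could look like. Everything named here is an assumption for illustration: `EvalCase`, `run_evals`, and the keyword-based `fake_classify` stand in for whatever model call and test format a team actually uses.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class EvalCase:
    name: str
    text: str
    expected: str


def run_evals(classify: Callable[[str], str], cases: List[EvalCase]) -> List[str]:
    """Run every case and return the names of the ones that fail."""
    failures = []
    for case in cases:
        try:
            got = classify(case.text)
        except Exception:
            got = None  # a crash counts as a failure, not a skipped case
        if got != case.expected:
            failures.append(case.name)
    return failures


def fake_classify(text: str) -> str:
    """Hypothetical stand-in for the prompt-backed call."""
    return "billing" if "invoice" in text.lower() else "other"


# Mix the happy path with messy inputs from day one.
CASES = [
    EvalCase("happy_path", "Where is my invoice?", "billing"),
    EvalCase("short_message", "refund??", "billing"),
    EvalCase("mixed_language", "Factura incorrecta, please fix", "billing"),
]

failures = run_evals(fake_classify, CASES)
print(failures)
```

Each surprising production output becomes one more `EvalCase`, which is what keeps the set living rather than static.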

Step 4: Wrap with shape checks. Define input validation, output schema checks, and fallback behaviors. Decide how to fail: block, retry, or escalate.

Friction: Overly strict checks lead to bounce loops. Too loose, and you ship nonsense downstream.
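One way to sketch the shape check and the fail decision together, assuming the model is asked to return JSON with a `category` and a `confidence`. The field names, the `ALLOWED_CATEGORIES` set, and the `generate` callable are all hypothetical.

```python
import json
from typing import Callable, Optional

ALLOWED_CATEGORIES = {"billing", "technical", "account"}  # hypothetical label set


def parse_model_output(raw: str) -> Optional[dict]:
    """Return a validated dict, or None if the output does not fit the contract."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    category = data.get("category")
    confidence = data.get("confidence")
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        return None
    # bool is a subclass of int in Python, so reject it explicitly
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        return None
    if not 0.0 <= confidence <= 1.0:
        return None
    return {"category": category, "confidence": float(confidence)}


def call_with_guardrails(generate: Callable[[str], str], prompt: str,
                         max_attempts: int = 2) -> dict:
    """Retry a bounded number of times, then escalate instead of looping."""
    for _ in range(max_attempts):
        parsed = parse_model_output(generate(prompt))
        if parsed is not None:
            return {"status": "ok", **parsed}
    return {"status": "escalate", "category": None, "confidence": None}
```

The bounded `max_attempts` is the guard against bounce loops: a strict check that can retry forever is worse than one that gives up and hands off.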

Step 5: Add safety and privacy controls. Bound the context. Decide what never leaves the system. Trim prompts to the minimum required data.

Friction: The fastest prompt often includes too much context. Later, this becomes a review blocker.
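A rough sketch of bounding the context with an allowlist rather than a blocklist. The field names in `ALLOWED_FIELDS` are hypothetical, and the email pattern is deliberately simple; it is an illustration, not a complete redaction solution.

```python
import re

ALLOWED_FIELDS = ("subject", "body", "product")  # hypothetical allowlist
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")  # rough, not exhaustive


def build_context(record: dict, max_chars: int = 2000) -> str:
    """Include only allowlisted fields, mask emails, and cap the length."""
    lines = []
    for field in ALLOWED_FIELDS:
        value = record.get(field)
        if value:
            lines.append(f"{field}: {EMAIL_PATTERN.sub('[email]', str(value))}")
    return "\n".join(lines)[:max_chars]


record = {
    "subject": "Login issue",
    "body": "Contact me at jane@example.com",
    "email": "jane@example.com",  # never allowlisted, so never sent
}
context = build_context(record)
print(context)
```

With an allowlist, a new field added upstream stays out of the prompt until someone decides it belongs there.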

Step 6: Measure cost and latency. Run small load tests. Track variance, not just averages. Decide acceptable budgets for both.

Friction: Latency spikes appear under concurrent bursts. What felt fine on a desk stalls under real traffic.
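A small load test along these lines might look like the following. It assumes the call under test is a plain blocking function (`fn` here is a placeholder), and it reports percentiles alongside the mean so that burst behavior is visible.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor


def timed_call(fn, arg) -> float:
    """Return the wall-clock seconds one call takes."""
    start = time.perf_counter()
    fn(arg)
    return time.perf_counter() - start


def measure_latency(fn, inputs, concurrency: int = 8) -> dict:
    """Fire calls concurrently and summarize the spread, not just the average."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(lambda x: timed_call(fn, x), inputs))
    # 99 cut points; "inclusive" keeps estimates within the observed range
    cuts = statistics.quantiles(latencies, n=100, method="inclusive")
    return {
        "mean": statistics.fmean(latencies),
        "p50": cuts[49],
        "p95": cuts[94],
        "max": max(latencies),
    }


# Stand-in workload; replace with the real prompt-backed call.
stats = measure_latency(lambda _: time.sleep(0.001), range(20), concurrency=4)
print(stats)
```

Running the same inputs at `concurrency=1` and at a realistic burst level is a cheap way to see whether the tail moves when calls overlap.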

Step 7: Seek a production gate. Present evals, guardrails, and rollback plans. Treat the prompt like code: reviewed, versioned, and reversible.

Friction: The prompt looks readable, so peers under-review it. Later, a minor wording change slips in and shifts behavior.
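One lightweight way to make wording changes visible is to fingerprint the prompt text and record that fingerprint with each release. The sketch below is an assumption about how that could work, not a standard practice; `prompt_version` is a hypothetical helper.

```python
import hashlib


def prompt_version(prompt_text: str) -> str:
    """Short fingerprint of a prompt; any wording change yields a new value."""
    # Ignore surrounding blank space and trailing spaces so that
    # editor noise does not register as a new version.
    normalized = "\n".join(line.rstrip() for line in prompt_text.strip().splitlines())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()[:12]


v1 = prompt_version("Classify the message. Be concise.")
v2 = prompt_version("Classify the message. Be brief.")
print(v1 != v2)
```

A pipeline check could then refuse to deploy when the fingerprint changes without a matching eval run, which turns "minor wording change" into a reviewable event.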

Step 8: Instrument for learning. Capture representative inputs and outputs for continuous evals. Store prompt versions alongside metrics.

Friction: Storage fills with noise. Without a sampling strategy, you chase the wrong anomalies.
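A sampling rule can be very small. This sketch assumes each request carries an ID and that validation results are known at capture time; the 2% default rate is an arbitrary placeholder.

```python
import hashlib


def should_capture(request_id: str, validation_failed: bool,
                   sample_rate: float = 0.02) -> bool:
    """Keep every validation failure, plus a stable fraction of everything else."""
    if validation_failed:
        return True
    # Hash the ID so the decision is deterministic across retries and replicas.
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # uniform in [0, 1)
    return bucket < sample_rate
```

Hashing instead of calling a random number generator means the same request is always in or out of the sample, which makes captured examples easier to reproduce.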

Step 9: Scale carefully. As usage grows, revisit budgets, caching strategies, and off-ramps. Small inefficiencies compound at volume.

Friction: A convenience regex, harmless at prototype scale, becomes a hotspot in production.
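As one example of a caching strategy, responses can be keyed on the prompt version plus a normalized input, so that a prompt change invalidates old entries automatically. `ResponseCache` is a hypothetical in-memory sketch; a production setup would likely need eviction and shared storage.

```python
import hashlib


class ResponseCache:
    """In-memory cache keyed on prompt version plus normalized input."""

    def __init__(self) -> None:
        self._store = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt_version: str, text: str) -> str:
        normalized = " ".join(text.split()).casefold()
        raw = f"{prompt_version}\x00{normalized}"
        return hashlib.sha256(raw.encode("utf-8")).hexdigest()

    def get_or_call(self, prompt_version: str, text: str, generate) -> str:
        """Return a cached response, or call `generate` once and remember it."""
        key = self._key(prompt_version, text)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = generate(text)
        self._store[key] = result
        return result
```

This only makes sense where returning the same output for the same input is acceptable; for flows that want variety, caching changes the behavior rather than just the bill.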

Step 10: Keep a refinement loop. Treat unexpected outputs as test cases, not anecdotes. Tighten prompts and checks with evidence, not vibes.

Examples that look clean in demos, messy on Monday

Routing support requests

A prompt assigns incoming messages to categories. It works in a demo batch. Live traffic brings short messages, mixed languages, and sarcasm. Accuracy drops on certain queues. Adding examples improves one category but degrades another. The fix was not a bigger prompt. It was a pre-processor to normalize inputs, plus a fallback to manual review when confidence dipped.
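The shape of that fix can be sketched in a few lines. The normalization steps, the `min_confidence` threshold, and the `classify` callable returning a `(category, confidence)` pair are assumptions for illustration; the original system's details are not given here.

```python
import unicodedata
from typing import Callable, Tuple

MANUAL_REVIEW = "manual_review"


def normalize_message(text: str) -> str:
    """Unify Unicode forms, collapse whitespace, and fold case."""
    text = unicodedata.normalize("NFKC", text)
    return " ".join(text.split()).casefold()


def route(text: str, classify: Callable[[str], Tuple[str, float]],
          min_confidence: float = 0.7) -> str:
    """Route to a category, or to a human when the input or score is too thin."""
    cleaned = normalize_message(text)
    if len(cleaned) < 3:  # too short to classify reliably
        return MANUAL_REVIEW
    category, confidence = classify(cleaned)
    return category if confidence >= min_confidence else MANUAL_REVIEW
```

The prompt never sees the raw message, so odd spacing or casing stops being something the prompt has to be taught about.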

Summarizing long updates

The generator condenses status notes into bullet points. Early wins feel great. Then a sensitive term slips through, because the prompt rewarded specificity. Guardrails add redaction, but summaries feel thin. The balance required explicit content filters, clear length ranges, and a structured output shape that downstream tools could rely on.

Generating small code snippets

Speed is intoxicating until a snippet follows a style that a different service rejects. Tests catch format differences late. The remedy was agreeing on a schema and adding a simple linter in the pipeline. The prompt got shorter. The checks did more of the work.

Who thrives with vibe coding, and who gets trapped by it

| Aspect | Students/Beginners | Experienced Practitioners |
| --- | --- | --- |
| Prompt style | Verbose, many instructions, shifting tone | Minimal, explicit constraints, stable tone |
| Testing approach | Manual spot checks on happy paths | Small eval sets, edge cases, regression tracking |
| Error handling | Retry and hope | Validate, coerce, fallback, escalate |
| Data boundaries | Context includes everything by default | Principled minimization with clear exclusions |
| Shipping criterion | Looks good in a demo | Meets defined metrics and rollback readiness |

FAQ

Can vibe coding replace traditional development?

It can accelerate it, especially for intent-heavy tasks and glue work. For strict contracts and low-variance flows, combine it with checks and deterministic components.

What’s the quickest way to test a prompt-led feature?

Assemble a small, messy eval set that reflects real inputs. Automate it. Treat every surprising output as a new test case.

What skills matter most?

Clear spec thinking, ruthless scoping, and an instinct for where non-determinism is acceptable. Prompt craft helps, but systems thinking carries the day.

How do we prevent prompt rot over time?

Version prompts, link them to evals, and review diffs like code. Keep prompts short. Offload rules to validators and post-processors.

What should we log?

Prompt versions, sampled inputs and outputs, validation results, and latency and cost per call. Enough to reproduce, not enough to leak.
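As a sketch, one log record per call could carry exactly those fields. The `CallRecord` name and field names are hypothetical; the input and output samples default to empty so that most calls log metadata only.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class CallRecord:
    prompt_version: str
    validation_ok: bool
    latency_ms: float
    cost_usd: float
    # Filled in only for sampled calls, after any redaction.
    input_sample: Optional[str] = None
    output_sample: Optional[str] = None


def to_log_line(record: CallRecord) -> str:
    """Serialize to one JSON line with stable key order for easy diffing."""
    return json.dumps(asdict(record), sort_keys=True)


line = to_log_line(CallRecord("a1b2c3", True, 412.5, 0.0031))
print(line)
```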

The responsibility shift: from clever prompts to measurable systems

Vibe coding gets features moving, but ownership moves fast from the prompt to the surrounding system. The work shifts toward boundaries, evals, and recovery plans that make stochastic parts safe to run.

The progression is simple: explore with prompts, harden with constraints, and prove with evidence. The future here is not about more words in a prompt. It’s about fewer surprises in production.